Continuous Batching and KV Caching: Maximizing LLM Throughput

Apr 25, 2026

The Memory Tax: Understanding KV Caching

To understand why we need complex batching, we first have to look at how Transformers actually "remember" things. LLMs generate text one token at a time. For every new token produced, the model has to look back at everything that came before it to maintain context. Without a cache, the model would have to recompute the Key and Value vectors of every previous token at every step. This creates a computational nightmare where the cost of each step grows quadratically, O(n²), as the sequence gets longer.

KV Caching is a mechanism that stores the Key and Value vectors of previous tokens in GPU memory to avoid redundant attention computations. By saving these vectors, the model only needs to compute the vectors for the new token and attend over the cached ones, reducing the per-step cost to a linear O(n).

While this sounds like a win, it introduces a "memory tax." For a model with L layers, H attention heads, and a per-head dimension of A, each token requires 2 * L * A * H cached values (the factor of 2 covers the keys and the values), multiplied by the number of bytes per value. As your conversation grows, the cache expands. If you have thousands of concurrent users, this memory pressure becomes the primary bottleneck, often leading to "Out of Memory" (OOM) errors or forcing the system to limit the number of users it can handle simultaneously.

The Efficiency Gap: Static vs. Continuous Batching

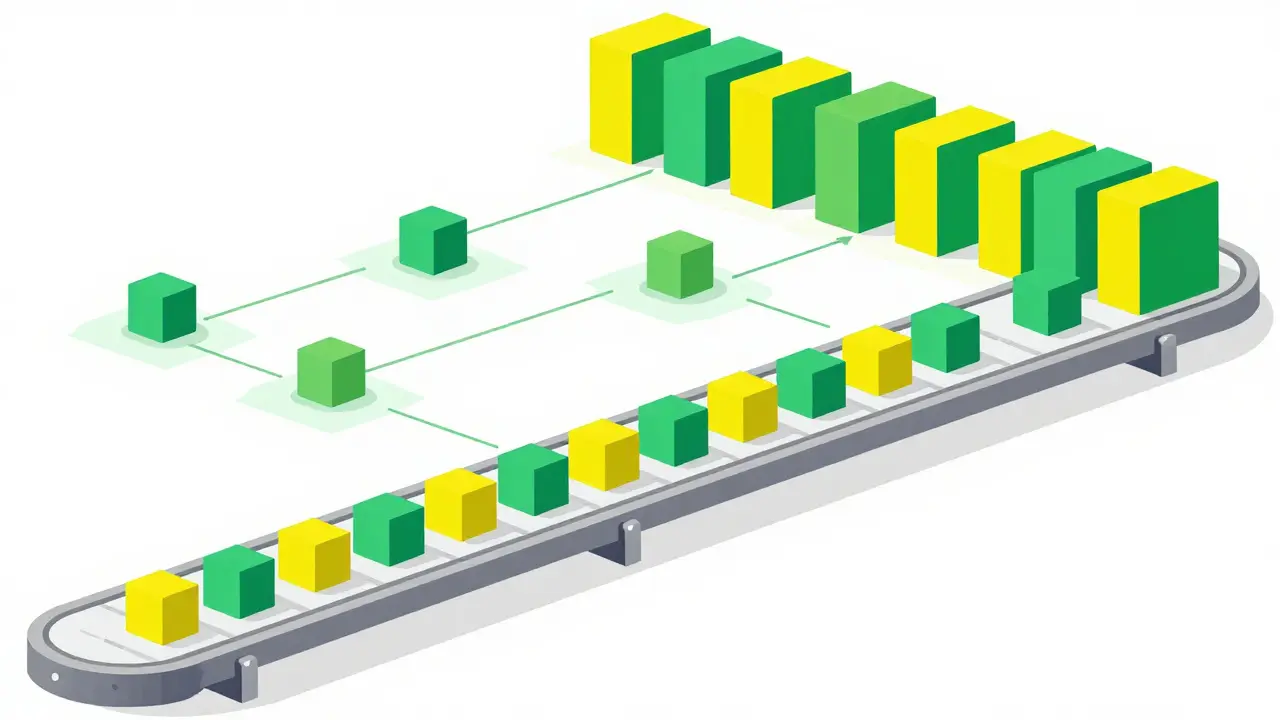

Most early LLM deployments used static batching. The system would wait for a group of requests (say, 4 or 8), run them all through the GPU, and wait until the very last token of the longest request was finished before starting the next batch. This is where the "restaurant problem" happens. If three requests finish in 10 tokens but one request needs 500 tokens, three of the four batch slots sit idle for 490 decoding steps, wasting precious compute.

Continuous batching, often called iteration-level batching, solves this by operating at the token level. Instead of waiting for the whole batch to finish, the scheduler checks the state of every request after every single token generation. As soon as a request hits its stop token or reaches its length limit, it is evicted from the batch, and a new request from the queue is slotted in immediately.

| Feature | Static Batching | Continuous Batching |
|---|---|---|
| Scheduling Unit | Request Level | Token/Iteration Level |
| GPU Utilization | Low (waits for longest request) | High (constant filling of slots) |
| Latency | Higher for short requests | Lower, more consistent |
| Throughput | Baseline | Typically 2x to 23x higher |
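To make the comparison concrete, here is a minimal simulation sketch rather than a real scheduler. It assumes every forward pass produces exactly one token for each active request and ignores prefill cost. The function names and the workload are hypothetical.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Decode steps when each batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Decode steps when a finished request's slot is refilled right away."""
    queue = deque(lengths)
    running = []  # tokens still to generate for each active request
    steps = 0
    while queue or running:
        # Fill any free slots from the queue before every iteration.
        while queue and len(running) < batch_size:
            running.append(queue.popleft())
        steps += 1  # one forward pass emits one token per active request
        # Drop requests that just produced their last token.
        running = [r - 1 for r in running if r > 1]
    return steps


# Hypothetical workload: output lengths (in tokens) of 16 queued requests.
lengths = [10, 10, 10, 500] * 4
static = static_batching_steps(lengths, batch_size=4)
continuous = continuous_batching_steps(lengths, batch_size=4)
print(static, continuous)  # prints: 2000 570
```

With this toy workload the static schedule needs 2,000 decoding steps and the continuous one needs 570, because short requests no longer wait behind the long ones. Real gains depend on the mix of request lengths and on the hardware.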

Solving Memory Fragmentation with PagedAttention

Even with continuous batching, we hit a wall: memory fragmentation. Traditionally, systems reserved a large, contiguous block of memory for the maximum possible sequence length. If you reserved space for 2,048 tokens but the user only wrote a 10-word prompt, the remaining space sat empty and unusable. This is called internal fragmentation.

PagedAttention is a memory management technique that allocates KV cache in non-contiguous fixed-size pages, similar to virtual memory in operating systems. Instead of one giant block, PagedAttention breaks the cache into small blocks that are allocated on demand. If a sequence grows, the system just assigns another page from the pool. This approach also allows multiple requests to share the same physical memory blocks if they have the same prefix (like a common system prompt).

By eliminating the need to reserve maximum capacity upfront, PagedAttention dramatically increases the number of requests a single GPU can handle, which is why it serves as the foundation for systems like vLLM.
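The bookkeeping behind on-demand blocks and shared prefixes can be sketched as below. This is a toy model, not vLLM's implementation. The class `BlockAllocator`, its methods, and the token ids are all hypothetical, and it tracks only block ids rather than real tensors. Full blocks are keyed by the entire token prefix they complete, so two requests share a block only when everything before it is identical.

```python
class BlockAllocator:
    """Toy KV-cache pool: fixed-size blocks, shared when prefixes match."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free = list(range(num_blocks))  # ids of unused physical blocks
        self.refcount = {}                   # block id -> sequences using it
        self.prefix_to_block = {}            # full token prefix -> block id
        self.block_to_prefix = {}            # reverse map, used on release

    def allocate(self, tokens):
        """Return the block table (physical block ids) for one sequence."""
        table = []
        for start in range(0, len(tokens), self.block_size):
            end = start + self.block_size
            # Only completely filled blocks are eligible for sharing.
            prefix = tuple(tokens[:end]) if end <= len(tokens) else None
            block = self.prefix_to_block.get(prefix)
            if block is not None:
                self.refcount[block] += 1    # reuse an existing block
            else:
                if not self.free:
                    raise MemoryError("KV cache pool exhausted")
                block = self.free.pop()      # allocate on demand
                self.refcount[block] = 1
                if prefix is not None:
                    self.prefix_to_block[prefix] = block
                    self.block_to_prefix[block] = prefix
            table.append(block)
        return table

    def release(self, table):
        """Return a finished sequence's blocks to the pool."""
        for block in table:
            self.refcount[block] -= 1
            if self.refcount[block] == 0:
                del self.refcount[block]
                prefix = self.block_to_prefix.pop(block, None)
                if prefix is not None:
                    del self.prefix_to_block[prefix]
                self.free.append(block)


# Hypothetical token ids: a 32-token system prompt plus two user messages.
system_prompt = list(range(32))
pool = BlockAllocator(num_blocks=8, block_size=16)
table_a = pool.allocate(system_prompt + [100, 101, 102])
table_b = pool.allocate(system_prompt + [200])
print(table_a, table_b, len(pool.free))
```

The two requests share the two blocks that hold the system prompt and each gets one private block for its own tokens, so four blocks are in use instead of six. Production systems typically hash the prefix instead of storing it as a tuple.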

Advanced Optimizations: Chunked Prefill and Prefix Sharing

One of the biggest challenges in high-throughput systems is the "prefill" phase. When a user sends a long prompt, the model must process all those tokens at once before it can start generating. This creates a massive spike in compute that can stall the generation of tokens for other users already in the batch.

To fix this, modern systems use chunked prefill. Instead of processing a 2,000-token prompt in one go, the system breaks it into smaller chunks (e.g., 512 tokens). This allows the scheduler to interleave the prefill of a new request with the decoding tokens of existing requests, smoothing out the compute load and preventing "stuttering" in the user experience.

Furthermore, many production environments use a global prefix tree. If 1,000 users are all chatting with a bot that has the same 500-word "You are a helpful assistant..." system prompt, the system shouldn't calculate and store that prompt 1,000 times. By hashing the prefix and mapping it to a single set of KV blocks, the system saves gigabytes of memory and reduces the Time to First Token (TTFT).

Real-World Performance Gains

These aren't just theoretical tweaks; the numbers are staggering. In benchmarks conducted by the vLLM team, continuous batching delivered 10x to 20x throughput improvements over traditional static methods. Other industry leaders, such as Anyscale, have reported gains as high as 23x. For a business, a 10x gain is the difference between needing 20 H100 GPUs to serve a user base and needing only 2. Since GPU compute is one of the highest operational costs in AI, these optimizations directly impact the bottom line. The ability to maximize the "tokens per second per dollar" ratio is what makes large-scale LLM products commercially viable.

The Trade-offs: Memory Pressure and Latency

Nothing is free in systems engineering. While these techniques maximize throughput, they can introduce new pressures. As you increase the batch size to saturate the GPU, you consume more memory for the KV cache. If the memory fills up completely, the system may have to pause new requests or even evict existing ones to make room. When memory pressure peaks, you'll notice a decline in two key metrics:

- Time to First Token (TTFT): The delay between hitting "send" and seeing the first word appear. High memory contention increases this delay.

- Tokens Per Second (TPS): The actual speed of the text streaming. If the GPU is overwhelmed by a massive batch, the generation speed for each individual user may drop.
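Both metrics can be computed from per-token timestamps. The helper below is a hypothetical sketch: it assumes you record the send time and the arrival time of every streamed token in seconds, and it measures TPS over the decode phase only (excluding the wait for the first token), which is one common convention rather than a universal definition.

```python
def streaming_metrics(request_sent, token_times):
    """Return (ttft_seconds, decode_tokens_per_second) for one request.

    request_sent: time the request was sent, in seconds
    token_times:  arrival time of each generated token, in seconds
    """
    if not token_times:
        raise ValueError("no tokens were generated")
    ttft = token_times[0] - request_sent
    decode_time = token_times[-1] - token_times[0]
    if len(token_times) < 2 or decode_time <= 0:
        return ttft, None  # not enough data to estimate streaming speed
    # Tokens after the first one, divided by the time they took to arrive.
    tps = (len(token_times) - 1) / decode_time
    return ttft, tps


# Hypothetical timestamps: first token after 0.5 s, then one every 0.25 s.
ttft, tps = streaming_metrics(0.0, [0.5, 0.75, 1.0, 1.25, 1.5])
print(ttft, tps)  # prints: 0.5 4.0
```

Tracking these two numbers per request while raising the batch size shows where extra throughput starts to cost individual users noticeable latency.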

What is the main difference between static and continuous batching?

Static batching waits for all requests in a batch to finish before starting a new one, meaning the shortest requests are held hostage by the longest one. Continuous batching operates at the token level, evicting finished requests and adding new ones at the next iteration, which keeps the GPU's batch slots filled for as long as requests are waiting in the queue.

Does KV caching increase memory usage?

Yes. While KV caching drastically speeds up generation by avoiding redundant calculations, it requires storing key and value vectors for every token in GPU memory. As the sequence length and batch size increase, the memory demand grows linearly, which can lead to memory exhaustion if not managed with techniques like PagedAttention.
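As a rough worked example, the cache size can be estimated as 2 (keys and values) × layers × KV heads × head dimension × bytes per value, multiplied by the sequence length and batch size. The model shape below is hypothetical and chosen only for illustration. Models that use grouped-query attention have fewer KV heads than query heads and need proportionally less memory.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size,
                   bytes_per_value=2):
    """Estimate KV cache size in bytes (bytes_per_value=2 assumes fp16/bf16)."""
    # The leading 2 accounts for storing both a key and a value vector.
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


# Hypothetical model shape: 32 layers, 32 KV heads, head dimension 128.
per_token = kv_cache_bytes(32, 32, 128, seq_len=1, batch_size=1)
total = kv_cache_bytes(32, 32, 128, seq_len=2048, batch_size=64)

print(per_token / 2**10, "KiB per token")       # prints: 512.0 KiB per token
print(total / 2**30, "GiB for the full batch")  # prints: 64.0 GiB for the full batch
```

Under these assumptions, 64 concurrent sequences of 2,048 tokens need 64 GiB for the cache alone, before counting the model weights.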

How does PagedAttention reduce fragmentation?

PagedAttention treats GPU memory like virtual memory in an OS. Instead of reserving a massive, contiguous block for the maximum possible sequence length, it allocates small, fixed-size pages on demand. This prevents "wasted" space when a user's request is shorter than the pre-allocated maximum.

What is chunked prefill and why is it useful?

Chunked prefill breaks long initial prompts into smaller pieces. This prevents a single massive prompt from hogging the GPU and pausing the token generation for other users in the batch, leading to a smoother and more consistent user experience.
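One way to picture the interleaving is a per-iteration token budget. In this sketch, which is an assumed policy and not any specific library's scheduler, each forward pass can process at most `token_budget` tokens. Requests that are already decoding get one token each first, and whatever is left goes to the next chunk of the waiting prompt. All names and numbers are hypothetical.

```python
def schedule_step(decoding, prefill_remaining, token_budget):
    """Plan one iteration: decode tokens first, then a prefill chunk.

    decoding:          number of requests currently generating (1 token each)
    prefill_remaining: prompt tokens of the new request not yet processed
    Returns (decode_tokens, prefill_chunk).
    """
    decode_tokens = min(decoding, token_budget)
    prefill_chunk = min(prefill_remaining, token_budget - decode_tokens)
    return decode_tokens, prefill_chunk


def plan_prefill(prompt_len, decoding, token_budget):
    """List the (decode_tokens, prefill_chunk) pairs needed for one prompt."""
    plan = []
    remaining = prompt_len
    while remaining > 0:
        decode_tokens, chunk = schedule_step(decoding, remaining, token_budget)
        if chunk == 0:
            raise ValueError("token budget leaves no room for prefill")
        plan.append((decode_tokens, chunk))
        remaining -= chunk
    return plan


# A 2,000-token prompt arrives while 12 requests are decoding.
plan = plan_prefill(prompt_len=2000, decoding=12, token_budget=512)
print(plan)  # prints: [(12, 500), (12, 500), (12, 500), (12, 500)]
```

The 12 existing users keep receiving a token on every iteration while the new prompt is absorbed over four steps, instead of everyone stalling during one 2,000-token pass.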

Which libraries implement these techniques?

Many of these optimizations are found in open-source serving frameworks like vLLM and NVIDIA's TensorRT-LLM. These libraries implement continuous batching, PagedAttention, and prefix caching to help developers maximize their hardware efficiency.

Patrick Sieber

April 26, 2026 AT 05:22

This is such a great breakdown of a complex topic. I've always found the restaurant analogy really helpful for visualizing how the GPU cycles are actually wasted in static batching.

Kieran Danagher

April 27, 2026 AT 00:51

Oh look, someone discovered vLLM. Groundbreaking. It's basically just OS paging but for people who think linear algebra is magic.

Shivam Mogha

April 27, 2026 AT 04:28

Very clear explanation.

poonam upadhyay

April 28, 2026 AT 10:20

Honestly... this is barely scratching the surface!!! The sheer audacity to ignore the hardware-level cache incoherence that comes with these "optimizations" is just... laughable!!! Purely academic fluff!!!

OONAGH Ffrench

April 28, 2026 AT 23:35

the true cost of memory is often an invisible burden in these architectures we simply trade compute for space and call it progress

Sheetal Srivastava

April 30, 2026 AT 18:10

While the basic premise of PagedAttention is cute, any serious practitioner knows that the stochastic nature of token generation renders these deterministic memory allocations practically obsolete when dealing with extreme-scale multimodal latent spaces. It's quite quaint to see this presented as a revolutionary leap when the underlying tensor parallelism bottlenecks are still fundamentally unaddressed in this narrative.

Patrick Sieber

May 1, 2026 AT 11:37

I think you're being a bit hard on it. Even if it's not a perfect solve for everything, the throughput gains are pretty undeniable for most production use cases!

Eka Prabha

May 3, 2026 AT 02:16

One must wonder why these "efficiency gains" are being pushed so aggressively by the hardware giants. It is likely a coordinated effort to obscure the diminishing returns of the current transformer paradigm and keep the H100s selling while the real breakthroughs in non-Euclidean geometry are suppressed by the industry's gatekeepers. The reliance on KV caches is just a bandage on a leaking ship of architectural redundancy.

Rahul Borole

May 4, 2026 AT 10:59

It is absolutely imperative that we continue to optimize these pipelines to democratize access to high-performance AI! The integration of chunked prefill is a magnificent step toward a seamless user experience. Let us all strive to implement these frameworks to maximize our operational efficiency!

rahul shrimali

May 6, 2026 AT 09:47

keep pushing limits!! great stuff