Prompting for Docs: How to Generate READMEs, ADRs, and Code Comments with AI

Feb 19, 2026

Ever spent hours writing a README only to realize it’s missing the most basic setup steps? Or tried to explain a complex decision in an Architecture Decision Record (ADR) and ended up with a vague paragraph that doesn’t help anyone? You’re not alone. Teams across the industry are turning to prompt engineering to generate documentation on demand - not as a replacement for human judgment, but as a force multiplier for clarity and consistency.

AI doesn’t write docs because it understands them. It writes them because you told it exactly how to. And when you get the prompt right, you save hours. When you don’t, you get dangerously wrong advice disguised as helpful guidance. This isn’t magic. It’s a skill. And it’s becoming as essential as writing clean code.

READMEs: Your Project’s First Impression

A README is the first thing a new developer sees. If it’s confusing, they walk away. If it’s clear, they start contributing. The best AI-generated READMEs don’t just list commands - they map out a journey.

Here’s what works: Start with the objective - "Generate a README for a Python web app built with FastAPI." Then add context: "The app uses PostgreSQL, Docker, and Redis. Target audience: junior developers with basic Python knowledge." Include instructions: "Outline installation in 5 steps. Show a working API call example. Add a section on how to run tests and contribute. Use a friendly but professional tone. Format in Markdown."
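One way to keep those components from drifting is to treat the prompt as data rather than a loose paragraph. The sketch below is a minimal illustration, not a prescribed format: the `DocPrompt` class and its field names are hypothetical, and the rendered text still has to be sent to whatever model your team uses.

```python
from dataclasses import dataclass, field


@dataclass
class DocPrompt:
    """Hypothetical container for objective, context, instructions, tone, format."""

    objective: str
    context: str
    instructions: list[str] = field(default_factory=list)
    tone: str = "friendly but professional"
    output_format: str = "Markdown"

    def render(self) -> str:
        # One bullet per instruction so each requirement stays easy to review.
        steps = "\n".join(f"- {step}" for step in self.instructions)
        return (
            f"Objective: {self.objective}\n"
            f"Context: {self.context}\n"
            f"Instructions:\n{steps}\n"
            f"Tone: {self.tone}\n"
            f"Format: {self.output_format}"
        )


readme_prompt = DocPrompt(
    objective="Generate a README for a Python web app built with FastAPI.",
    context=(
        "The app uses PostgreSQL, Docker, and Redis. "
        "Target audience: junior developers with basic Python knowledge."
    ),
    instructions=[
        "Outline installation in 5 steps.",
        "Show a working API call example.",
        "Add a section on how to run tests and contribute.",
    ],
)

print(readme_prompt.render())
```

Keeping tone and format as separate fields makes it harder to forget them when the prompt gets copied to the next project.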

GitHub’s own internal benchmarks show that prompts with these five components - objective, context, instructions, tone, and format - cut README creation time from over three hours to under 25 minutes. But here’s the catch: 52% of those AI-generated READMEs still had broken code samples or outdated dependency versions. Why? Because the AI doesn’t know your project. It only knows what you tell it.
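Since broken samples are the failure mentioned here, it can help to check them mechanically before a human review. Here is a rough sketch, assuming the README's samples are Python and sit in fenced blocks tagged `python`; the function name is made up for illustration. It only catches syntax errors, so outdated dependency versions and wrong commands still need a person.

```python
import re

# Built with multiplication so this sample can itself sit inside a fenced block.
FENCE_MARK = "`" * 3
PYTHON_BLOCK = re.compile(FENCE_MARK + r"python\n(.*?)" + FENCE_MARK, re.DOTALL)


def broken_python_samples(markdown: str) -> list[str]:
    """Return every fenced Python sample that does not even parse."""
    broken = []
    for sample in PYTHON_BLOCK.findall(markdown):
        try:
            # compile() parses without executing, so generated code never runs.
            compile(sample, "<readme-sample>", "exec")
        except SyntaxError:
            broken.append(sample)
    return broken


# A hypothetical AI draft whose sample is missing a closing parenthesis.
draft = "## Usage\n" + FENCE_MARK + "python\nprint('hello'\n" + FENCE_MARK
print(len(broken_python_samples(draft)))  # prints 1
```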

That’s why few-shot prompting matters. Include one or two real examples of READMEs from similar projects. Don’t just say "make it like this" - paste the actual text. A study from ScoutOS found that developers who used just two well-written examples improved accuracy by 48%. It’s not about quantity. It’s about quality of reference.
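Pasting the actual text can be as simple as placing the reference READMEs ahead of the task. This is a sketch under assumptions: `build_few_shot_prompt` is a hypothetical helper, the example strings are stand-ins for real READMEs, and the cap of two examples just mirrors the "one or two" advice above.

```python
def build_few_shot_prompt(task: str, examples: list[str], max_examples: int = 2) -> str:
    """Put pasted reference READMEs ahead of the task, capped at max_examples."""
    if not examples:
        raise ValueError("few-shot prompting needs at least one real example")
    sections = [
        f"Example README {number}:\n{text.strip()}"
        for number, text in enumerate(examples[:max_examples], start=1)
    ]
    # The task goes last so the model reads the references before the request.
    sections.append(f"Task:\n{task.strip()}")
    return "\n\n".join(sections)


prompt = build_few_shot_prompt(
    task="Write a README for our FastAPI service in the same style.",
    examples=[
        "# Billing API\n(full README text pasted here)",
        "# Search API\n(full README text pasted here)",
        "# Extra example that will be dropped by the cap",
    ],
)
print(prompt)
```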

ADRs: Capturing Decisions That Matter

ADRs are where teams make architecture decisions stick. They’re not just notes. They’re the durable record of why you chose Kafka over RabbitMQ, or why you moved from a monolith to microservices. Get this wrong, and someone five years from now will waste weeks undoing a bad call.

Most AI-generated ADRs fail because they’re superficial. "We chose Option A because it’s faster." That’s not an ADR. That’s a guess.

The winning prompt structure follows Microsoft’s ADR template: Context - what problem are you solving? Alternatives - list at least three options you considered. Decision - what did you pick? Consequences - what’s the impact on performance, cost, or maintainability?

But here’s the real trick: force the AI to reason. Add this line: "Explain your reasoning step-by-step for each alternative." MIT Sloan’s 2023 study found that prompts with this phrase improved ADR quality by 37%. Why? Because it makes the AI simulate deliberation - not just regurgitate facts.
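Put together, the four sections and the reasoning line might look like the sketch below. The helper name, the sample context, and the third alternative are hypothetical; the guard clauses simply enforce the "at least three options" rule before anything reaches the model.

```python
REASONING_LINE = "Explain your reasoning step-by-step for each alternative."


def build_adr_prompt(context: str, alternatives: list[str], decision: str) -> str:
    """Assemble an ADR prompt with Context, Alternatives, Decision, Consequences."""
    if len(alternatives) < 3:
        raise ValueError("list at least three alternatives you considered")
    if decision not in alternatives:
        raise ValueError("the decision should be one of the listed alternatives")
    options = "\n".join(
        f"{number}. {name}" for number, name in enumerate(alternatives, start=1)
    )
    return (
        "Write an Architecture Decision Record with these sections: "
        "Context, Alternatives, Decision, Consequences.\n\n"
        f"Context: {context}\n\n"
        f"Alternatives considered:\n{options}\n\n"
        f"Decision: {decision}\n\n"
        "Consequences: cover performance, cost, and maintainability.\n"
        f"{REASONING_LINE}"
    )


adr_prompt = build_adr_prompt(
    # Hypothetical context, for illustration only.
    context="We need durable event delivery between the orders and billing services.",
    alternatives=["Kafka", "RabbitMQ", "Amazon SQS"],
    decision="Kafka",
)
print(adr_prompt)
```

Notice that the decision is supplied by the team, not requested from the model. The AI drafts the record; it doesn't make the call.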

One team at Shopify used this method to document their switch from Redis to PostgreSQL for session storage. The AI, prompted with their exact trade-offs, listed latency, backup complexity, and scaling costs. It even flagged a hidden risk: their monitoring tool didn’t support PostgreSQL metrics. They hadn’t thought of that. The AI did - because they gave it enough context to think.

Still, 63% of negative feedback on developer forums mentions "hallucinated" ADRs - where the AI invents technical details that don’t exist. A Google Cloud study showed that without explicit domain context (like "this is a blockchain payment system using Ethereum smart contracts"), inaccuracies jumped to 41%. That’s not a bug. It’s a risk.

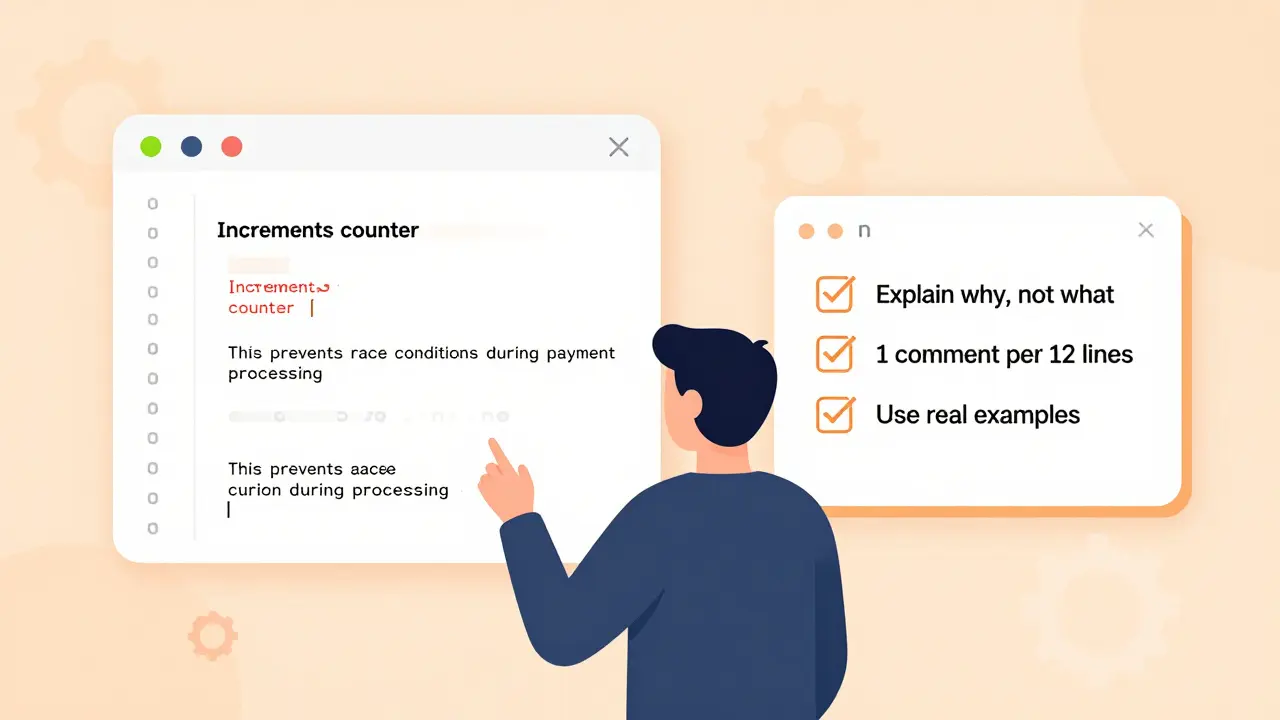

Code Comments: Explain the Why, Not the What

Everyone knows code should be commented. But most comments are useless. "Increments counter." Really? I can see that. What’s the *purpose*?

Effective comment prompts focus on why. The prompt should say: "Add comments to explain the business logic behind this function. Assume the reader knows Python syntax but not our domain. Focus on edge cases and hidden assumptions. One comment per 12 lines of complex logic. Do not repeat what the code says."

ScoutOS’ March 2024 whitepaper found that comments generated with this "why over what" rule were 51% more useful to new team members. A developer at Cloudflare reported that after implementing this across 80 repos, onboarding time dropped by 30%.

Again, few-shot prompting helps. Include a before-and-after example: show a poorly commented function, then show the same function with high-quality comments. The AI learns the pattern. It doesn’t memorize. It generalizes.
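A before-and-after pair might look something like this. Both functions behave identically; only the comment changes. The retry limit and the billing rule are invented for illustration, so swap in a real function and a real reason from your own codebase.

```python
MAX_CHARGE_ATTEMPTS = 3  # hypothetical limit, for illustration only


def should_retry_before(attempts: int) -> bool:
    # Check if attempts is less than max.
    return attempts < MAX_CHARGE_ATTEMPTS


def should_retry_after(attempts: int) -> bool:
    # Assumed business rule: the payment provider bills us for every charge
    # attempt beyond the third, so retries stop once three have been made.
    # Hidden assumption: `attempts` counts attempts already made, so 0 means
    # the charge has not been tried yet.
    return attempts < MAX_CHARGE_ATTEMPTS


print(should_retry_after(2), should_retry_after(3))  # prints True False
```

The first comment restates the comparison. The second tells a new hire something the code cannot.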

But here’s the trap: over-commenting. Some teams ask for comments on every line. That’s noise. The sweet spot? 1 comment per 10-15 lines of complex logic. Anything more becomes a maintenance burden. The AI will follow your rule - if you give it one.
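If you want to check whether generated output respects that rule, a rough density measure is enough to flag the worst offenders. This sketch assumes Python source and counts every non-blank code line, since "complex logic" can't be detected automatically; the function names are hypothetical and the threshold of 10 is the low end of the range above.

```python
import io
import tokenize


def lines_per_comment(source: str) -> float:
    """Return code lines per comment; infinity when there are no comments."""
    tokens = tokenize.generate_tokens(io.StringIO(source).readline)
    comments = sum(1 for token in tokens if token.type == tokenize.COMMENT)
    # Approximation: any non-blank line that is not a pure comment is "code".
    code_lines = sum(
        1
        for line in source.splitlines()
        if line.strip() and not line.strip().startswith("#")
    )
    return code_lines / comments if comments else float("inf")


def is_over_commented(source: str, min_lines_per_comment: int = 10) -> bool:
    """Flag source with more than one comment per min_lines_per_comment lines."""
    return lines_per_comment(source) < min_lines_per_comment


noisy = "x = 1  # set x\ny = 2  # set y\n"
print(is_over_commented(noisy))  # prints True
```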

Why This Works Better Than Tools Like Sphinx or JSDoc

Tools like Sphinx and JSDoc are documentation generators. They build reference docs from docstrings, annotated comments, and code structure. But they can’t explain why you chose a specific algorithm. They don’t know your team’s culture. They can’t adapt tone for beginners vs. experts.

Prompt-based documentation is different. It’s customizable. You can say: "Write this like a senior engineer explaining to a new hire." Or: "Use bullet points. No paragraphs. Include a diagram in Mermaid syntax."

Microsoft’s Azure team tested both approaches. Prompt-engineered READMEs were 43% faster to generate. But the real win? Consistency. Teams using prompts reported 71% fewer style variations across repositories. That’s huge when you’re managing 50+ microservices.

Traditional tools give you structure. Prompt engineering gives you voice.

The Hidden Cost: Prompt Engineering Takes Time

You can’t just type "Write a README" and call it a day. Crafting good prompts takes practice. MIT Sloan’s data shows developers need 8-12 hours of hands-on work to reliably produce accurate documentation prompts. ADRs take the longest - an average of 47 minutes per prompt to get right.

That’s why teams are building prompt libraries. GitHub’s public repository for documentation prompts has over 14,800 stars. It’s full of templates: "ADR for database migration," "README for React Native app," "comment style for legacy Java."

Start there. Don’t build from scratch. Find a prompt that matches your project type. Test it. Then tweak it. Save it. Share it. Turn your best prompts into team standards.

What’s Next? Integration and Automation

The next wave is automation. GitLab’s 2024 release now regenerates documentation every time a prompt file changes. JetBrains is embedding AI doc generation into IntelliJ IDEA. Google Cloud’s Document AI offers pre-built templates for READMEs and ADRs.
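The core idea behind "regenerate when the prompt changes" can be sketched without any vendor tooling. To be clear, this is not how GitLab or any other product implements it; it is a generic illustration with hypothetical function names, assuming you store a fingerprint next to each generated doc.

```python
import hashlib
from typing import Optional


def prompt_fingerprint(prompt_text: str) -> str:
    """Hash the prompt so any edit, however small, produces a new value."""
    return hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()


def needs_regeneration(prompt_text: str, stored_fingerprint: Optional[str]) -> bool:
    """True when the prompt changed or no doc has been generated yet."""
    return prompt_fingerprint(prompt_text) != stored_fingerprint


stored = prompt_fingerprint("Generate a README for a Python web app.")
print(needs_regeneration("Generate a README for a Python web app.", stored))  # prints False
print(needs_regeneration("Generate a README for a Go web app.", stored))  # prints True
```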

But automation doesn’t remove the need for skill. It just shifts it. Instead of writing docs, you’re now managing prompts. You’re curating examples. You’re reviewing outputs. You’re still the gatekeeper.

The teams winning here aren’t the ones using AI the most. They’re the ones who understand it the best. They know when to trust the AI. And when to say: "No. That’s wrong. Let’s fix the prompt."

Can AI-generated READMEs replace human-written ones completely?

No. AI can generate a solid draft in minutes, but it can’t replace human judgment. It doesn’t know your team’s culture, your legacy constraints, or your unspoken assumptions. Always review AI-generated READMEs for accuracy, especially around setup steps, dependencies, and contribution guidelines. The best practice is to use AI for the first draft, then edit it like you would any important document.

Why are ADRs so hard for AI to get right?

ADRs require reasoning, trade-offs, and context - not just facts. AI can list options, but it can’t truly understand the real-world consequences of choosing one technology over another. For example, it might not know that your team has no experience with Kubernetes, or that your compliance rules forbid certain cloud services. Without explicit, detailed context, AI often generates plausible-sounding but dangerously incomplete decisions. Always validate ADRs with senior engineers before approving.

How do I avoid hallucinated technical details in AI-generated docs?

Provide specific context. Don’t say "a Python app" - say "a Python 3.12 app using FastAPI 0.110, Docker Compose v2, and PostgreSQL 15, deployed on AWS ECS." Include exact versions, tools, and constraints. The more precise you are, the less the AI has to guess. Also, use few-shot prompting: include real examples from your own codebase. This anchors the AI in reality, not imagination.
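One way to make that precision a habit is to build the context sentence from pinned values and refuse anything without a version. A minimal sketch, assuming a hypothetical `describe_stack` helper; the values come straight from the example above.

```python
def describe_stack(language: str, components: dict[str, str], deployment: str) -> str:
    """Render a version-pinned context sentence; refuse unversioned components."""
    unversioned = [name for name, version in components.items() if not version.strip()]
    if unversioned:
        raise ValueError(f"add a version for: {', '.join(unversioned)}")
    parts = [f"{name} {version}" for name, version in components.items()]
    if len(parts) > 2:
        stack = ", ".join(parts[:-1]) + ", and " + parts[-1]
    else:
        stack = " and ".join(parts)
    return f"a {language} app using {stack}, deployed on {deployment}."


context = describe_stack(
    language="Python 3.12",
    components={"FastAPI": "0.110", "Docker Compose": "v2", "PostgreSQL": "15"},
    deployment="AWS ECS",
)
print(context)
```

Failing loudly on a missing version is the point: the gap gets fixed by you, not guessed by the model.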

Should I use zero-shot or few-shot prompting for documentation?

Use few-shot prompting for ADRs and code comments. Zero-shot (no examples) works okay for simple READMEs, but it fails on anything requiring nuance. Few-shot - where you give the AI 1-3 real examples of good output - improves accuracy by 40-50%. Yes, it takes longer to set up. But the quality gain is worth it. Start with one example from a project you’re proud of.

Is this just a trend, or is it here to stay?

It’s here to stay. Gartner predicts 87% of teams will use AI-generated READMEs by 2026. The real question isn’t whether - it’s how well you’ll do it. Teams that treat prompt engineering as a skill - not a shortcut - will outperform those that treat it as magic. The future belongs to developers who can write clear instructions, not just clean code.

Next Steps: Start Small, Scale Smart

Don’t try to automate everything at once. Pick one README from a new project. Generate it with a well-crafted prompt. Edit it. Save the prompt. Use it again next time.

Then try one ADR. Use the "explain your reasoning step-by-step" trick. Compare the output to what you’d write yourself. Notice where the AI missed things. Adjust your prompt.

Finally, build a library. Create a folder in your repo called "/prompts/docs". Save your best prompts. Label them: "README - Python API", "ADR - Database Migration", "Comments - Legacy Java". Share them. Use them. Improve them.
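Loading that folder takes only a few lines. This sketch assumes each prompt is saved as a Markdown file and that the file name doubles as the label (for example `readme-python-api.md`); both conventions are illustrative, not a standard.

```python
from pathlib import Path


def load_prompt_library(root: Path) -> dict[str, str]:
    """Map each prompt file's name (without extension) to its text."""
    return {
        path.stem: path.read_text(encoding="utf-8")
        for path in sorted(root.glob("*.md"))
    }
```

From the repo root, `load_prompt_library(Path("prompts/docs"))` would return something like `{"adr-database-migration": "...", "readme-python-api": "..."}`, ready to feed into whatever generation step you use.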

This isn’t about replacing humans. It’s about giving them back time. Time to think. Time to design. Time to fix the real problems - not write boilerplate.

allison berroteran

February 20, 2026 AT 19:29

It’s wild how much of this comes down to the quality of the input, isn’t it? We treat AI like a magic box that spits out brilliance if you just whisper the right incantation - but it’s more like training a very smart intern who’s never set foot in your office. You have to give them the coffee, the whiteboard sketches, the inside jokes about the legacy system no one talks about anymore. The ‘why’ matters more than the ‘what’ because documentation isn’t just instructions - it’s institutional memory. And if you don’t feed it real context, you’re not generating docs, you’re generating time bombs.

One team I worked with used to just throw generic prompts at GPT and wonder why their ADRs said things like ‘we chose Kafka because it’s scalable.’ No shit, Sherlock. But when they started pasting in actual Slack threads where the decision was debated, or screenshots of their monitoring dashboards, suddenly the AI started catching things like ‘wait, we don’t have Kafka operators on-call’ or ‘our dev environment can’t handle the partitioning.’ That’s not magic. That’s empathy engineered into a prompt.

It’s funny how much we’ve outsourced our thinking to tools without realizing we’re just outsourcing the *effort*, not the *judgment*. The real skill isn’t in prompting - it’s in knowing what to leave out, what to emphasize, and when to say ‘no, that’s not right’ and rewrite the whole thing. We’re not replacing writers. We’re becoming editors of a new kind of text - one that’s half machine, half human, and 100% responsibility.

And honestly? I think this is the future of engineering. Not writing code. Not even reviewing code. But curating the context around it. The code runs. The docs explain. But the *why*? That’s ours. And if we stop caring about it, we’ve already lost.

So yeah. Keep building those prompt libraries. But don’t forget to keep writing the stories behind them too.

Gabby Love

February 21, 2026 AT 13:08

Just wanted to say the part about few-shot prompting for code comments was spot on. I tried it last week on a legacy Python module - gave the AI one example of a good comment from our codebase, and boom, suddenly the output stopped saying ‘increments counter’ and started explaining why the counter was needed in the first place (it was to avoid duplicate API calls during rate limit windows). Saved me 3 hours of manual editing. Also, never again will I ask for comments on every line. One per 12 lines is perfect. Less is more, especially when the code’s already clear.

Jen Kay

February 23, 2026 AT 02:27

Oh, so now we’re calling prompt engineering a ‘skill’? Funny, because it’s basically just writing very specific instructions for a robot that doesn’t understand sarcasm, context, or the fact that your team’s ‘simple’ setup script is actually a 17-step nightmare wrapped in Docker. I’ve seen teams spend 47 minutes crafting the perfect ADR prompt… only to have the AI invent a ‘highly scalable microservice architecture’ that doesn’t exist in their codebase. And then they *trust* it. Because ‘the AI said so.’

Let’s be real: this isn’t about efficiency. It’s about avoiding accountability. You don’t want to write the ADR? Fine. But don’t blame the AI when someone’s production system crashes because the AI ‘reasoned’ that ‘PostgreSQL is better for session storage’ - when your entire ops team has never touched it.

Tools like Sphinx and JSDoc? At least they don’t lie. They just give you dry, templated garbage. This? This is elegant garbage with a PhD. And we’re calling it progress?

Michael Thomas

February 24, 2026 AT 13:36

AI docs are just lazy. Real devs write their own docs. If you can’t explain your code in 10 minutes, you shouldn’t be writing it. Stop outsourcing your brain to bots.

Abert Canada

February 25, 2026 AT 08:51

Man, I love how this article doesn’t even mention how much cultural context matters. Like, in Canada, we don’t just say ‘use Docker’ - we say ‘use Docker, but make sure it runs on OpenShift because that’s what our IT dept actually supports.’ AI doesn’t know that unless you tell it. I tried prompting for a README once without mentioning our internal compliance rules… and the AI suggested using a public AWS S3 bucket for logs. Yeah. That didn’t fly.

So yeah, few-shot prompting? Absolutely. But also - give it your team’s internal wiki snippets, your Slack threads, your ‘don’t do this’ list. That’s the real gold. The AI’s not smart. It’s just really good at pattern matching. And if you feed it your culture, it’ll spit out docs that actually work here.

Also, I’ve started saving my best prompts as Markdown files in a /prompts/docs folder. Now my whole team uses them. No more ‘what’s the right way to write this?’ debates. Just ‘use the template.’ Simple. Efficient. Human.

Xavier Lévesque

February 26, 2026 AT 07:12

So we’re all just prompt janitors now? Clean the input, scrub the output, hope it doesn’t hallucinate a new database engine in the middle of your ADR? I mean… I get it. It’s faster. But I also miss the days when we actually talked through decisions instead of feeding them to a black box and saying ‘trust the algorithm.’

That Shopify example? Yeah, cool. But what if the AI had missed that monitoring gap? Would they have deployed anyway? Probably. Because ‘the prompt said so.’

I’m not against AI. I’m against pretending it’s not a glorified autocomplete with a confidence complex. Use it. But never stop reading the output. And never, ever let it sign off on your architecture. That’s not innovation. That’s negligence dressed up as efficiency.