RAG System Design for Generative AI: Indexing, Chunking, and Relevance Scoring

Apr, 28 2026

In retrieval-augmented generation (RAG), the real magic isn't just in the retrieval but in how you organize the data. If you just dump a thousand PDFs into a database, the AI will struggle to find the specific needle in the haystack. To reduce hallucinations and get high-accuracy answers, you have to master the trio of indexing, chunking, and relevance scoring. Here is how to actually build it.

The Indexing Engine: Turning Text into Math

Keyword search alone isn't enough. To make RAG work, you need a way to represent the meaning of a sentence. This is where embedding models come in. They are specialized neural networks that convert text into numerical vectors (lists of numbers) in a high-dimensional space. When two pieces of text have similar meanings, their vectors sit close to each other in this space.
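
"Close to each other" is commonly measured with cosine similarity. The sketch below uses a hypothetical `cosine_similarity` helper and hand-made three-dimensional vectors in place of real model output. Real embeddings have hundreds or thousands of dimensions, but the arithmetic is the same.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = unrelated."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Illustrative, hand-made "embeddings" (assumptions, not real model output).
holidays = [0.9, 0.1, 0.0]      # "company holidays"
annual_leave = [0.8, 0.2, 0.1]  # "Annual Leave Policy"
gpu_specs = [0.0, 0.1, 0.95]    # "GPU cooling specifications"

print(cosine_similarity(holidays, annual_leave))  # high: similar meaning
print(cosine_similarity(holidays, gpu_specs))     # near zero: unrelated
```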

To store these vectors, you need a vector database. This is a specialized storage system that indexes vector embeddings for fast similarity search. Traditional databases look for exact matches. Vector databases such as Pinecone, Weaviate, or Milvus look for "nearby" concepts instead. For instance, a query about "company holidays" should pull up a document titled "Annual Leave Policy" even if the word "holiday" never appears in that document.
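
The core operation of a vector database is "return the k stored vectors nearest to this query vector." The hypothetical `InMemoryVectorIndex` below does this by brute force, comparing the query against every stored vector. Production databases typically use approximate nearest-neighbour indexes so they don't have to scan everything. The document IDs and vectors are made up for illustration.

```python
import math
from dataclasses import dataclass, field

def _cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 0.0 if na == 0 or nb == 0 else dot / (na * nb)

@dataclass
class InMemoryVectorIndex:
    """Toy stand-in for a vector database: exact (brute-force) nearest-neighbour search."""
    ids: list[str] = field(default_factory=list)
    vectors: list[list[float]] = field(default_factory=list)

    def add(self, doc_id: str, vector: list[float]) -> None:
        self.ids.append(doc_id)
        self.vectors.append(vector)

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float]]:
        # Score every stored vector, then keep the k most similar.
        scored = [(doc_id, _cosine(query, v)) for doc_id, v in zip(self.ids, self.vectors)]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]

index = InMemoryVectorIndex()
index.add("annual-leave-policy", [0.8, 0.2, 0.1])
index.add("gpu-cooling-specs", [0.0, 0.1, 0.95])
index.add("expense-reporting", [0.3, 0.9, 0.1])

# Toy query vector standing in for the embedding of "company holidays".
print(index.search([0.9, 0.1, 0.0], k=2))
```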

Most production setups don't rely on semantic search alone. They use hybrid indexing, which blends semantic search with traditional keyword search (typically ranked with BM25). Why? Semantic search can miss specific part numbers or unique product IDs that a simple keyword search would catch instantly. Combining both can boost recall significantly, by as much as nearly 30% in some setups, although the gain depends heavily on your corpus and queries.
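
One way to sketch hybrid search is in two steps. First, score documents with BM25 for the keyword side. Second, merge that ranking with the semantic ranking. This illustration uses reciprocal rank fusion (RRF) for the merge. RRF is one common fusion method, and weighted blending of normalized scores is another. Several details are assumptions:

- Tokenization is plain whitespace splitting.
- `k1=1.5` and `b=0.75` are typical BM25 defaults.
- The semantic ranking is hard-coded, as if it came from a vector search.

```python
import math
from collections import Counter

def bm25_scores(query: str, docs: dict[str, str],
                k1: float = 1.5, b: float = 0.75) -> dict[str, float]:
    """Okapi BM25 over lower-cased, whitespace-tokenized text."""
    tokenized = {doc_id: text.lower().split() for doc_id, text in docs.items()}
    n = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized.values()) / n
    # Document frequency: how many documents contain each term.
    df = Counter(term for toks in tokenized.values() for term in set(toks))
    scores = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            freq = tf[term]
            score += idf * freq * (k1 + 1) / (freq + k1 * (1 - b + b * len(toks) / avgdl))
        scores[doc_id] = score
    return scores

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each appearance of a doc at position r adds 1 / (k + r)."""
    fused: Counter = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in fused.most_common()]

docs = {
    "a": "replacement part SKU-4471 for the pump housing",
    "b": "how to maintain pumps and housings over time",
    "c": "annual leave policy and company holidays",
}
keyword = bm25_scores("SKU-4471", docs)
keyword_ranking = sorted(keyword, key=keyword.get, reverse=True)
semantic_ranking = ["b", "c", "a"]  # hypothetical vector-search result
fused = reciprocal_rank_fusion([keyword_ranking, semantic_ranking])
print(fused)
```

Here the toy semantic ranking places the part-number document `a` last, and fusing it with the keyword ranking lifts it to second place. RRF uses only ranks. That sidesteps the fact that BM25 scores and cosine similarities live on different scales.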

Chunking Strategies: Finding the Goldilocks Zone

You can't feed a 50-page manual into an embedding model as a single block. Embedding models have a maximum input length. Even where the text fits, a single vector for 50 pages blurs the specifics into noise. You have to break the data into smaller pieces, or "chunks." But here is the problem: if your chunks are too small, the AI loses the context. If they are too large, you bring in too much irrelevant "filler" text, which confuses the model and increases the risk of hallucinations.

A common rule of thumb is to aim for 256 to 512 tokens per chunk. However, the way you cut the text matters more than the size. Instead of hard-cutting every 500 characters, use recursive character splitting. This method tries to break the text at natural boundaries first (paragraphs, then lines, then sentences, then words). It falls back to a hard cut only when a piece is still too long, which keeps the meaning intact.
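
Here is a minimal sketch of recursive character splitting, using a hypothetical `recursive_split` function. It assumes sizes are measured in characters rather than tokens, and separators that fall on a chunk boundary are dropped. Libraries such as LangChain ship a production version of this idea, but the code below is not their API.

```python
def recursive_split(text: str, chunk_size: int = 500,
                    separators: tuple[str, ...] = ("\n\n", "\n", ". ", " ")) -> list[str]:
    """Split text into chunks of at most chunk_size characters,
    preferring the coarsest separator that is present."""
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    if not separators:
        # No natural boundary left: fall back to a hard cut.
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]
    sep, finer = separators[0], separators[1:]
    if sep not in text:
        return recursive_split(text, chunk_size, finer)
    chunks, current = [], ""
    for piece in text.split(sep):
        candidate = f"{current}{sep}{piece}" if current else piece
        if len(candidate) <= chunk_size:
            current = candidate  # keep packing pieces into the current chunk
            continue
        if current:
            chunks.append(current)
        if len(piece) > chunk_size:
            # This piece alone is too long: split it with finer separators.
            chunks.extend(recursive_split(piece, chunk_size, finer))
            current = ""
        else:
            current = piece
    if current:
        chunks.append(current)
    return chunks

text = ("First paragraph about pumps.\n\n"
        "Second paragraph. It has two sentences.\n\n" + "word " * 50)
for chunk in recursive_split(text, chunk_size=60):
    print(repr(chunk))
```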

For complex technical docs, adaptive chunking is increasingly popular. This approach detects semantic shifts in the text and adjusts the chunk size dynamically. A dense list of specifications gets a tight chunk, while a broad conceptual explanation is allowed to expand. This prevents the AI from cutting a critical instruction in half, which is a common cause of factual errors in RAG systems.
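
Adaptive chunking can be implemented in several ways. One common approximation, often called semantic chunking, works as follows:

- Split the text into sentences.
- Start a new chunk wherever the similarity between neighbouring sentences drops.

Chunk boundaries then follow topic shifts instead of a fixed size. Several pieces are assumptions:

- The bag-of-words `embed` function stands in for a real embedding model.
- The regex sentence splitter is naive.
- The `threshold` and `max_chars` values are arbitrary and would need tuning on your own documents.

```python
import math
import re
from collections import Counter

def embed(sentence: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", sentence.lower()))

def similarity(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return 0.0 if na == 0 or nb == 0 else dot / (na * nb)

def adaptive_chunks(text: str, threshold: float = 0.1, max_chars: int = 800) -> list[str]:
    """Start a new chunk when adjacent sentences drift apart in meaning
    (similarity below threshold) or the chunk would exceed max_chars."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return []
    chunks, current = [], [sentences[0]]
    for prev, sent in zip(sentences, sentences[1:]):
        topic_shift = similarity(embed(prev), embed(sent)) < threshold
        too_long = len(" ".join(current + [sent])) > max_chars
        if topic_shift or too_long:
            chunks.append(" ".join(current))
            current = [sent]
        else:
            current.append(sent)
    chunks.append(" ".join(current))
    return chunks

doc = ("The pump uses a 12V motor. The pump motor draws 2A at 12V. "
       "Employees get 25 days of annual leave. Annual leave requests go to HR.")
for chunk in adaptive_chunks(doc):
    print(chunk)
```

The two pump sentences share vocabulary and stay together, while the jump to annual leave starts a new chunk.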

| Strategy | Best For | Pro | Con |
|---|---|---|---|
| Fixed-Size | Simple text blobs | Fast and easy to implement | Cuts sentences in half |
| Recursive | General documents | Preserves structural meaning | Slightly slower processing |
| Adaptive | Technical manuals | Highest contextual precision | Computationally expensive |

Relevance Scoring: Filtering the Noise

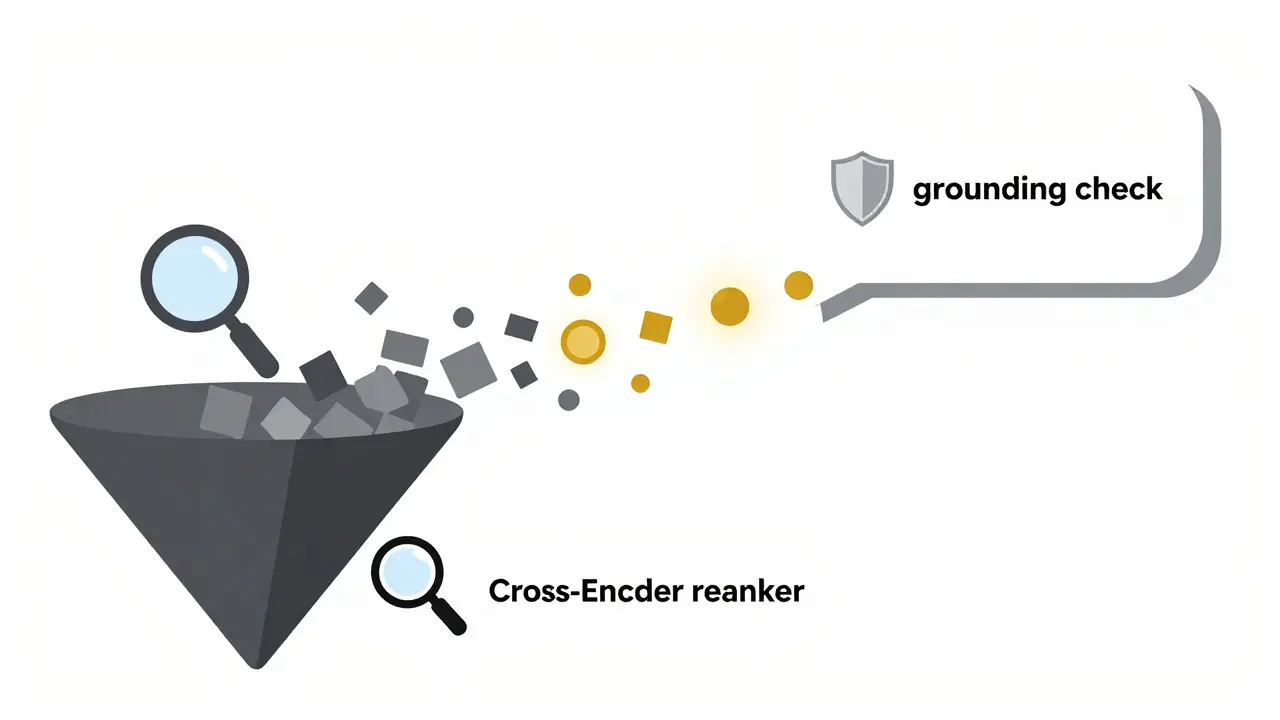

Retrieval isn't perfect. Your vector database will always return the "top K" results, but that doesn't mean all those results are actually useful. If you pass five irrelevant chunks to the LLM, the model might try to force a connection between them, leading to hallucination amplification. This is where relevance scoring comes in.

A powerful technique here is reranking. After the initial fast retrieval from the vector database, you pass the top 20 results through a more computationally expensive cross-encoder. This is a model that reads the query and the document together to calculate a precise relevance score. The first-pass embeddings encode the query and each document separately. The cross-encoder instead sees both texts at once, which makes it more accurate but too slow to run over the whole index. The reranker throws away the fluff and sends only the most pertinent 3-5 chunks to the generative model.
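
The reranking step can be sketched in four moves: score every candidate against the query, sort, optionally drop anything below a minimum score, and keep the top few. The `overlap_score` function is a hypothetical stand-in that just measures shared query terms. In practice you would plug in a real cross-encoder's scoring function, for example a wrapper around `CrossEncoder.predict` from the sentence-transformers library. The `min_score` cutoff is an added assumption that addresses the "top K always returns something" problem.

```python
import re
from typing import Callable, Optional

def rerank(query: str, candidates: list[str],
           score_fn: Callable[[str, str], float],
           top_n: int = 5,
           min_score: Optional[float] = None) -> list[tuple[str, float]]:
    """Rescore candidates with a (slower, more precise) scorer and keep the best."""
    scored = [(doc, score_fn(query, doc)) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    if min_score is not None:
        # Drop weak matches instead of padding the context with them.
        scored = [pair for pair in scored if pair[1] >= min_score]
    return scored[:top_n]

def overlap_score(query: str, doc: str) -> float:
    """Hypothetical stand-in for a cross-encoder: fraction of query terms found in doc."""
    q = set(re.findall(r"[a-z0-9]+", query.lower()))
    d = set(re.findall(r"[a-z0-9]+", doc.lower()))
    return len(q & d) / len(q) if q else 0.0

candidates = ["How to reset the router password",
              "Router firmware release notes",
              "Office holiday calendar"]
print(rerank("reset router password", candidates, overlap_score, top_n=2, min_score=0.5))
```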

To know if your scoring is working, track two metrics:

- Precision: what fraction of the retrieved chunks were actually useful.
- Recall: what fraction of the necessary information was retrieved at all.

If recall is low, the problem is likely in your indexing or chunking. If precision is low, your relevance scoring needs a tune-up.
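
Both metrics are simple ratios once you have a small labelled set recording which chunks are relevant for each test query. Here is a minimal sketch. The chunk IDs and ground-truth labels are invented for illustration.

```python
def precision_recall(retrieved: list[str], relevant: set[str]) -> tuple[float, float]:
    """Precision = relevant retrieved / all retrieved; recall = relevant retrieved / all relevant."""
    retrieved_set = set(retrieved)
    hits = len(retrieved_set & relevant)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall

retrieved = ["c1", "c4", "c7", "c9", "c12"]  # what the retriever returned
relevant = {"c1", "c7", "c20"}               # hand-labelled ground truth
p, r = precision_recall(retrieved, relevant)
print(f"precision={p:.2f} recall={r:.2f}")   # precision=0.40 recall=0.67
```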

Avoiding the Hallucination Trap

RAG isn't a magic bullet. If you implement it poorly, you can actually make the AI lie more confidently because it thinks it has "evidence." One of the biggest traps is the multi-hop reasoning failure. This happens when the answer requires combining a fact from Document A and a fact from Document B. Standard RAG often fails here because it retrieves chunks in isolation.

To solve this, move toward graph-based retrieval. By using a knowledge graph, the system understands the relationship between entities (e.g., "Product X" is manufactured by "Company Y"). This allows the AI to traverse links between documents rather than just hoping the right chunks happen to be mathematically similar.
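
Here is a toy version of graph-based multi-hop retrieval:

- Store facts as (subject, relation, object) triples, each tagged with the document it came from.
- Walk outward from the entity named in the question.

The triples, relation names, and `multi_hop` function are hypothetical. A real system would typically use a graph database or a dedicated library.

```python
from collections import deque

# Hypothetical knowledge graph: (subject, relation, object, source_doc).
TRIPLES = [
    ("Product X", "manufactured_by", "Company Y", "doc_A"),
    ("Company Y", "headquartered_in", "Berlin", "doc_B"),
    ("Product Z", "manufactured_by", "Company Q", "doc_C"),
]

def multi_hop(start: str, max_hops: int = 2) -> tuple[list[tuple[str, str, str]], set[str]]:
    """Breadth-first walk along outgoing edges from `start`, collecting
    the facts found and the documents that support them."""
    adjacency: dict[str, list[tuple[str, str, str]]] = {}
    for subj, rel, obj, doc in TRIPLES:
        adjacency.setdefault(subj, []).append((rel, obj, doc))
    facts, docs = [], set()
    seen, queue = {start}, deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for rel, obj, doc in adjacency.get(node, []):
            facts.append((node, rel, obj))
            docs.add(doc)
            if obj not in seen:
                seen.add(obj)
                queue.append((obj, depth + 1))
    return facts, docs

facts, docs = multi_hop("Product X")
print(facts)
print(docs)
```

Starting from "Product X", two hops connect the manufacturer fact in `doc_A` with the headquarters fact in `doc_B`. A question like "Where is the maker of Product X based?" needs both, and a plain similarity search might never retrieve the two together.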

Another critical guardrail is the grounding check. Instead of just asking the AI to answer, tell it: "Answer using ONLY the provided context. If the answer is not in the context, say you don't know." This keeps the model anchored to the retrieved evidence. It also discourages the model from filling gaps with its own pre-trained (and potentially outdated) knowledge.
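
The grounding instruction is just text assembled around the retrieved chunks. The hypothetical `build_grounded_prompt` below numbers each chunk so the model can cite it. The exact wording and the `[n]` citation format are assumptions, not a required template.

```python
def build_grounded_prompt(question: str, chunks: list[str]) -> str:
    """Assemble a prompt that restricts the model to the numbered context chunks."""
    context = "\n\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return (
        "Answer using ONLY the provided context. "
        "If the answer is not in the context, say you don't know. "
        "Cite the chunk numbers you used, e.g. [1].\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )

print(build_grounded_prompt("How many leave days do employees get?",
                            ["Employees get 25 days of annual leave.",
                             "Leave requests go to HR."]))
```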

Practical Implementation Workflow

If you are building this today, don't start with a monolithic script. Use a modular pattern. Separate your Retriever (the part that finds the data) from your Generator (the LLM that writes the answer). This lets you swap out your vector database or upgrade your embedding model without rewriting the whole pipeline.
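
In Python, the Retriever/Generator split can be expressed with `typing.Protocol`, so the pipeline depends only on two small interfaces. The class and method names, and the stand-ins `KeywordRetriever` and `EchoGenerator`, are hypothetical. A real deployment would wrap a vector database client and an LLM API behind the same methods.

```python
from typing import Protocol

class Retriever(Protocol):
    def retrieve(self, query: str, k: int) -> list[str]: ...

class Generator(Protocol):
    def generate(self, prompt: str) -> str: ...

class RAGPipeline:
    """Depends only on the two interfaces, so either side can be swapped independently."""

    def __init__(self, retriever: Retriever, generator: Generator) -> None:
        self.retriever = retriever
        self.generator = generator

    def answer(self, question: str, k: int = 5) -> str:
        chunks = self.retriever.retrieve(question, k)
        prompt = "Context:\n" + "\n".join(chunks) + f"\n\nQuestion: {question}"
        return self.generator.generate(prompt)

class KeywordRetriever:
    """Hypothetical stand-in retriever: ranks docs by shared words with the query."""

    def __init__(self, docs: list[str]) -> None:
        self.docs = docs

    def retrieve(self, query: str, k: int) -> list[str]:
        terms = set(query.lower().split())
        ranked = sorted(self.docs, key=lambda d: len(terms & set(d.lower().split())), reverse=True)
        return ranked[:k]

class EchoGenerator:
    """Hypothetical stand-in generator: returns the first context line instead of calling an LLM."""

    def generate(self, prompt: str) -> str:
        return prompt.splitlines()[1]

pipeline = RAGPipeline(KeywordRetriever(["annual leave is 25 days", "gpu cooling specs"]),
                       EchoGenerator())
print(pipeline.answer("how many annual leave days", k=1))
```

Swapping the vector database or embedding model then means writing a new class with a `retrieve` method. `RAGPipeline` itself does not change.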

- Data Ingestion: Stream your data from streaming platforms such as Confluent (built on Apache Kafka) or from operational databases to keep your index fresh.

- Embedding: Use a model tailored to your domain (e.g., a medical-specific model for healthcare data).

- Indexing: Store vectors in a database with hybrid search capabilities.

- Retrieval: Fetch the top 20 candidates using a mix of semantic and keyword search.

- Reranking: Use a cross-encoder to narrow those 20 candidates down to the top 5.

- Generation: Pass the filtered context to the LLM with a strict grounding prompt.
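
The retrieval, reranking, and generation steps above can be compressed into one function that narrows 20 candidates to 5 before building the grounded prompt. Each stage is passed in as a callable. `search`, `score`, and `llm` are placeholders (assumptions) for your hybrid search, cross-encoder, and model client, and the stubs in the dry run are not real components.

```python
from typing import Callable

def run_query(question: str,
              search: Callable[[str, int], list[str]],
              score: Callable[[str, str], float],
              llm: Callable[[str], str],
              candidate_k: int = 20,
              final_k: int = 5) -> str:
    candidates = search(question, candidate_k)  # Retrieval: wide, cheap first pass
    ranked = sorted(candidates, key=lambda c: score(question, c), reverse=True)
    context = ranked[:final_k]                  # Reranking: keep only the best few
    numbered = "\n".join(f"[{i}] {c}" for i, c in enumerate(context, start=1))
    prompt = ("Answer using ONLY the provided context. If the answer is not in the "
              f"context, say you don't know.\n\nContext:\n{numbered}\n\nQuestion: {question}")
    return llm(prompt)                          # Generation: grounded prompt to the LLM

# Dry run with stub stages.
docs = [f"filler note {i}" for i in range(25)] + ["Leave policy: employees get 25 days."]
result = run_query(
    "How many leave days?",
    search=lambda q, n: docs[-n:],                   # pretend hybrid search
    score=lambda q, c: float("leave" in c.lower()),  # pretend cross-encoder
    llm=lambda prompt: prompt,                       # echo the prompt instead of calling a model
)
print(result)
```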

Does RAG replace fine-tuning?

Not necessarily, and the two can be combined. Fine-tuning is great for teaching a model a specific style or vocabulary. It is a poor way to teach new facts, though, because that knowledge is frozen the moment training ends. For dynamic data, factual accuracy, and reducing hallucinations, RAG is the better choice.

What is the best chunk size for RAG?

There is no single perfect number, but 256-512 tokens is a safe starting point. The key is to use recursive splitting or adaptive chunking to ensure you aren't cutting off a sentence in the middle of a crucial point.

How do I stop my RAG system from hallucinating?

Combine three things: a high-quality reranker to remove irrelevant noise, strict "grounding" prompts that forbid the AI from using outside knowledge, and a verification step where the model must cite the specific chunk it used for the answer.
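
The verification step can start as a cheap structural check: reject any answer that cites nothing, or that cites a chunk number that was never provided. This sketch assumes answers cite chunks as `[1]`, `[2]`, and so on, and uses a hypothetical `verify_citations` function. It does not confirm that the cited chunk actually supports the claim. That would need a second model pass or human review.

```python
import re

def verify_citations(answer: str, num_chunks: int) -> bool:
    """Accept an answer only if it cites at least one chunk and every cited
    number, written like [2], refers to a chunk that was actually provided."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return bool(cited) and all(1 <= n <= num_chunks for n in cited)

print(verify_citations("Employees get 25 days of leave [1].", num_chunks=3))  # True
print(verify_citations("Employees get 25 days of leave.", num_chunks=3))      # False
```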

What is a vector database?

It is a database that stores data as embeddings (vectors) rather than rows and columns. This allows the system to perform similarity searches, finding data that is conceptually related to a query even if the exact words don't match.

Why use hybrid search instead of just semantic search?

Semantic search is great for concepts but bad for specific identifiers. If you search for "Order #88291", a semantic search might return "other orders" because they are conceptually similar. Hybrid search uses keyword matching to find the exact ID and semantic search to find the context.