Retrieval-Augmented Generation for Generative AI: Grounding Outputs in Verified Sources

Feb 25, 2026

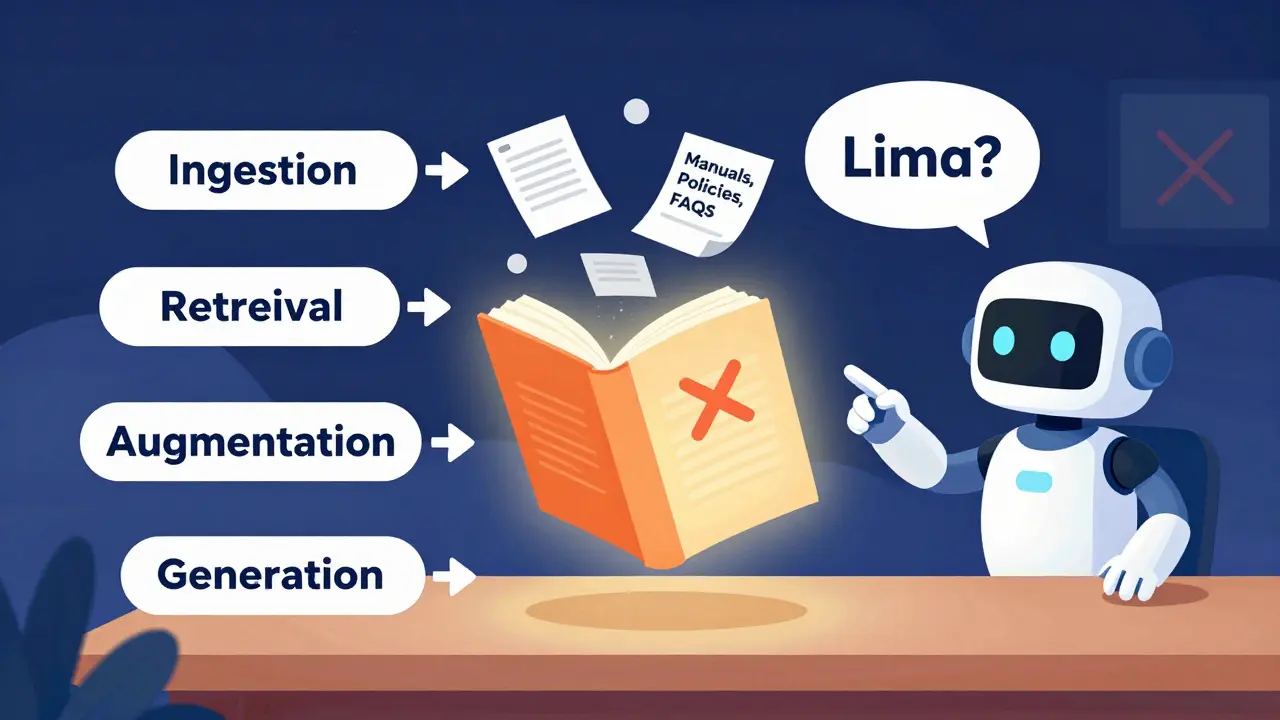

Generative AI tools like ChatGPT, Claude, and Gemini often sound confident, even when they’re wrong. They make up facts, cite fake studies, or invent sources that don’t exist. This isn’t a bug; it’s a fundamental flaw. These models are trained on static data, frozen in time. They don’t know what happened yesterday, let alone five minutes ago. And when they guess, they don’t say, "I’m not sure." They say, "The capital of Brazil is Lima."

That’s where Retrieval-Augmented Generation (RAG) comes in. It’s not a magic fix, but it’s the most effective tool we have right now to stop AI from making things up. RAG doesn’t try to retrain the model. It doesn’t require millions of dollars in compute. Instead, it gives the AI a reference book right before it answers.

How RAG Works: The Four-Step Process

RAG isn’t complicated in theory. It’s a four-step pipeline that turns a guessing machine into a research assistant.

First, ingestion. You feed your documents (manuals, policies, research papers, FAQs) into the system. These get chopped into small chunks, usually 256 to 512 tokens long. Each chunk is turned into a numerical vector using embedding models like OpenAI’s text-embedding-3-large. These vectors capture meaning, not just keywords: "How do I reset my password?" and "What’s the procedure for account recovery?" end up close together in vector space.
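Here is a minimal Python sketch of the chunking part of ingestion, assuming a 384-token window (the middle of the 256-512 range) with 15% overlap. `chunk_tokens` is a hypothetical helper, and splitting on whitespace is a stand-in: a real pipeline would count tokens with the embedding model’s own tokenizer and then send each chunk to the embedding API.

```python
def chunk_tokens(tokens: list[str], chunk_size: int = 384, overlap_ratio: float = 0.15) -> list[list[str]]:
    """Split tokens into windows of chunk_size that share overlap_ratio of their tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap_ratio < 1:
        raise ValueError("overlap_ratio must be in [0, 1)")
    overlap = int(chunk_size * overlap_ratio)  # e.g. 384 * 0.15 -> 57 shared tokens
    step = chunk_size - overlap                # how far each window advances
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the document
    return chunks


# Whitespace splitting stands in for a real tokenizer in this sketch.
words = ("word " * 1000).split()
chunks = chunk_tokens(words)
print(len(chunks), [len(c) for c in chunks])  # 3 [384, 384, 346]
```

The last 57 tokens of each chunk reappear at the start of the next one, so a sentence that straddles a boundary still shows up whole in at least one chunk.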

Second, retrieval. When a user asks a question, the system embeds it with the same model and searches those vectors for the most similar chunks. It doesn’t scan text; it compares numbers. Approximate nearest-neighbor algorithms like HNSW (Hierarchical Navigable Small World) find the top 3-5 most relevant pieces in milliseconds. Think of it like Google Search, but instead of matching words, it matches meaning. Many systems also apply a similarity cutoff to drop weak matches; a cosine similarity of 0.78 is one commonly used value, though the right threshold depends on the embedding model and should be tuned on your own data.
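To make the retrieval step concrete, here is an exact, brute-force version of it in Python. HNSW and similar indexes approximate this same ranking so it stays fast across millions of vectors. The three-dimensional vectors and chunk ids are made up for illustration (real embeddings have hundreds or thousands of dimensions), and the 0.78 default is just the cutoff mentioned above, not a universal constant.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def retrieve(query_vec: list[float], chunk_vecs: dict[str, list[float]],
             k: int = 5, min_score: float = 0.78) -> list[tuple[str, float]]:
    """Return up to k (chunk_id, score) pairs, best first, dropping scores below min_score."""
    scored = [(cid, cosine_similarity(query_vec, vec)) for cid, vec in chunk_vecs.items()]
    scored = [pair for pair in scored if pair[1] >= min_score]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors standing in for real embeddings.
chunks = {
    "reset-password": [0.9, 0.1, 0.0],
    "account-recovery": [0.8, 0.2, 0.1],
    "holiday-schedule": [0.0, 0.1, 0.9],
}
query = [0.85, 0.15, 0.05]
for chunk_id, score in retrieve(query, chunks, k=3):
    print(chunk_id, round(score, 3))
```

Both account-related chunks clear the cutoff, with reset-password ranked first, while holiday-schedule scores under 0.1 and is dropped.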

Third, augmentation. The system takes the user’s question and the top retrieved chunks and stitches them together into a single prompt: "Here’s the question: [user query]. Here’s what we found: [retrieved text]. Answer based only on this." This is the grounding step. Instead of pulling from its internal memory, the AI is told to rely on your data; the prompt can’t strictly force compliance, but it strongly steers the answer toward your sources.
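A sketch of the augmentation step: `build_grounded_prompt` (a hypothetical helper) stitches retrieved chunks and the question into one prompt. The exact wording, the bracketed source ids, and the "say you don’t know" instruction are assumptions rather than a standard template; asking for source ids makes it easier to check citations later.

```python
def build_grounded_prompt(question: str, chunks: list[tuple[str, str]]) -> str:
    """Combine the user question with retrieved (source_id, text) chunks into one prompt."""
    context = "\n\n".join(f"[{source_id}]\n{text}" for source_id, text in chunks)
    return (
        "Answer the question using only the sources below. "
        "Cite the source id in brackets for each claim. "
        "If the sources do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


prompt = build_grounded_prompt(
    "How long do refunds take?",
    [("policy-3", "Refunds are issued within 30 days of purchase.")],
)
print(prompt)
```

The resulting string is what gets sent to the LLM in the generation step.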

Finally, generation. The LLM (whether it’s Claude 3.5, Llama 3.1, or GPT-4o) reads the augmented prompt and writes a response. Because it’s working from retrieved sources rather than memory alone, it hallucinates far less often. MIT’s 2023 benchmark study showed RAG reduces hallucinations by 47% to 63% compared to plain LLMs.

Why RAG Beats Fine-Tuning

You might think: Why not just retrain the model on new data? That’s fine-tuning. And yes, fine-tuning works well for deep domain expertise-like teaching an AI medical terminology from a hospital’s internal guidelines. Mayo Clinic trials showed fine-tuning improved accuracy by 19.3% in that context.

But fine-tuning has a huge downside: cost and speed. Each retraining cycle costs over $50,000 and takes weeks. If your company updates its policies every quarter, that’s at least four cycles, or more than $200,000 a year per model, just to keep the AI current.

RAG fixes that. Updating knowledge? Just upload the new PDF. No retraining. No downtime. IBM’s March 2025 benchmark found RAG delivers similar accuracy at just 5-8% of the cost. Financial firms using RAG to track changing regulations maintained 92.7% accuracy, while fine-tuned models dropped to 78.4% because they were stuck with outdated data.

Real-World Impact: What Businesses Are Seeing

Companies aren’t just experimenting. They’re relying on RAG.

Acme Corp’s CTO said in a September 2024 case study: "We reduced incorrect policy references by 76% after integrating RAG with our HR knowledge base." Their customer support bot went from 67% accuracy to 89%.

On Reddit, a developer named u/AI_Engineer99 reported that their internal chatbot’s accuracy jumped after adopting LangChain and ChromaDB. But there was a trade-off: latency rose from 1.2 seconds to 3.8 seconds per query. That’s the cost of grounding: you trade speed for reliability.

At scale, it works even better. One company processes 2.1 million daily queries using AWS Kendra and Anthropic Claude 3.5. They hit 98.4% relevance accuracy, but spent 14 person-months optimizing chunk sizes and overlap. That’s the hidden work: getting RAG right takes tuning.

Where RAG Still Struggles

RAG isn’t perfect. It has limits.

First, multi-hop reasoning. If a question requires connecting two separate facts, such as "What’s the impact of last year’s tax law change on employee benefits?", RAG often fails. Stanford’s CRFM benchmark showed a 14.2-point accuracy gap between RAG and fine-tuned models on these tasks. The AI sees two chunks but can’t synthesize them.

Second, low-resource languages. RAG works great in English. For Swahili, retrieval accuracy drops to 58.7%. That’s because embedding models are trained mostly on English data. If your users speak Hindi, Arabic, or Indonesian, RAG’s effectiveness shrinks.

Third, retrieval drift. Change a word in the question ("How do I cancel?" vs. "How do I terminate my account?") and the system might pull irrelevant docs. This is why chunking and overlap matter. A 15-20% token overlap between chunks helps maintain context continuity.
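One way to catch retrieval drift before users do is to send several paraphrases of the same question through your retriever and compare the results. The sketch below assumes any retriever that maps a query string to a ranked list of chunk ids; `drift_report`, `keyword_retriever`, and the three documents are hypothetical, and the keyword retriever is deliberately naive so the cancel/terminate mismatch shows up.

```python
from typing import Callable


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two sets of chunk ids (1.0 = identical results)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def drift_report(retriever: Callable[[str], list[str]], paraphrases: list[str]) -> dict[str, float]:
    """Compare each paraphrase's results with those of the first (baseline) phrasing."""
    baseline = set(retriever(paraphrases[0]))
    return {q: jaccard(baseline, set(retriever(q))) for q in paraphrases[1:]}


# Hypothetical toy corpus and keyword-only retriever, used only for this demo.
DOCS = {
    "cancel-subscription": "to cancel your subscription open billing and choose cancel",
    "close-account": "to terminate your account contact support to close the account",
    "password-reset": "reset your password from the login page",
}


def keyword_retriever(query: str, k: int = 1) -> list[str]:
    """Rank documents by how many query words they share; return the top k ids."""
    words = set(query.lower().rstrip("?").split())
    scores = {doc_id: len(words & set(text.split())) for doc_id, text in DOCS.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


print(drift_report(keyword_retriever, ["How do I cancel?", "How do I terminate my account?"]))
# {'How do I terminate my account?': 0.0}
```

A score well below 1.0 for phrasings your users treat as synonyms is the cue to revisit chunking, hybrid search, or the embedding model.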

And then there’s the illusion of truth. Professor Emily M. Bender warned that RAG’s citations make users think the AI is trustworthy, even when it’s not. Microsoft’s 2024 study found 18.7% of cited sources were misaligned with the generated text. The AI might say, "According to our 2024 policy manual," and cite a document that doesn’t say that. It’s not lying. It’s misreading. And users can’t tell the difference.
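There’s no complete fix for misaligned citations, but a crude automated spot check can catch the worst cases before a human sees them. The sketch below is a hypothetical lexical heuristic (not the method used in Microsoft’s study): it measures what fraction of a claim’s content words appear in the chunk it cites and flags low scores for review. It will miss paraphrases and can’t detect a contradiction such as a dropped "not", so treat it as a filter, not a verdict.

```python
import re

# Small illustrative stopword list; a real check would use a fuller one.
STOPWORDS = {"the", "a", "an", "of", "to", "in", "and", "or", "is", "are",
             "for", "on", "our", "by", "with", "according"}


def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric words, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def support_ratio(claim: str, cited_chunk: str) -> float:
    """Fraction of the claim's content words that also appear in the cited chunk."""
    claim_words = content_words(claim)
    if not claim_words:
        return 1.0
    return len(claim_words & content_words(cited_chunk)) / len(claim_words)


def flag_weak_citations(pairs: list[tuple[str, str]], threshold: float = 0.5) -> list[tuple[str, str]]:
    """Return the (claim, cited_chunk) pairs whose support ratio falls below threshold."""
    return [(claim, chunk) for claim, chunk in pairs if support_ratio(claim, chunk) < threshold]


pairs = [
    ("Employees get 20 vacation days per year", "Full-time employees receive 20 vacation days per year."),
    ("Remote work requires manager approval", "Parking permits are issued by facilities."),
]
for claim, _ in flag_weak_citations(pairs):
    print("check citation for:", claim)
# check citation for: Remote work requires manager approval
```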

Implementation Essentials

If you’re building RAG, here’s what actually matters:

- Chunk size: Stick to 256-512 tokens. Google’s 2024 research confirmed this range works best.

- Overlap: Use 15-20% overlap between chunks. It improves context flow by 28%.

- Embedding model: text-embedding-3-large scores 89.2% on the MTEB benchmark. Open-source alternatives like BGE average 83.7%. The gap matters.

- Vector database: Pinecone, Weaviate, or AWS OpenSearch. Pinecone handles over 1.2 billion vectors per cluster.

- Retrieval method: Use hybrid search (keyword + vector). Google’s 2024 whitepaper showed it improves recall by 32%.

- Cost: AWS Knowledge Base charges $0.10 per 1K vector queries. Google Cloud charges $0.0004 per 1K characters, a different unit, so compare the two against your own query volume and document sizes. Azure is 37% more expensive.
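The article doesn’t say how a hybrid system should merge its keyword and vector results. One common choice is Reciprocal Rank Fusion (RRF), which scores each document as the sum of 1 / (k + rank) over every list it appears in; k = 60 is a conventional default. A minimal sketch, assuming both retrievers return best-first lists of chunk ids (the keyword list might come from BM25, the vector list from your vector database):

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse several best-first ranked lists of doc ids into one list sorted by RRF score."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)  # earlier ranks contribute more
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


keyword_hits = ["refund-policy", "shipping-faq", "returns-form"]  # e.g. from BM25
vector_hits = ["returns-form", "refund-policy", "warranty"]       # e.g. from the vector index
for doc_id, score in reciprocal_rank_fusion([keyword_hits, vector_hits]):
    print(f"{doc_id}: {score:.4f}")
# refund-policy: 0.0325
# returns-form: 0.0323
# shipping-faq: 0.0161
# warranty: 0.0159
```

Documents found by both retrievers rise to the top, which is how hybrid search recovers exact-term matches that pure vector search might rank low.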

Most teams underestimate the time needed. O’Reilly’s 2025 survey found developers with NLP experience need 6-8 weeks to get RAG production-ready. It’s not plug-and-play.

The Future of RAG

RAG is evolving fast.

NVIDIA launched RAG-as-a-Service on DGX Cloud in May 2025, offering 99.95% uptime and under 800ms latency. Microsoft’s AutoGen introduced agentic RAG in February 2025, where multiple AI agents check and refine each other’s retrievals, cutting errors by 29%.

Next up: multimodal RAG. Imagine asking, "What’s the condition of this machine based on this image and the maintenance log?" OpenAI’s GPT-5 was expected to support this natively in Q3 2025.

But risks grow too. Forrester warns of "retrieval poisoning": attackers injecting fake data into your knowledge base to mislead the AI. Carnegie Mellon showed this can succeed 63% of the time in tests. If you’re using RAG for finance, healthcare, or government, you need strict access controls and version audits.
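For the version audits mentioned above, one simple building block is a hash manifest: record a SHA-256 digest of every approved document, then re-check before each (re)indexing run so silent edits and unapproved files are caught. The helper names below are hypothetical. Note what this does not do: it detects changes relative to an approved snapshot, but it can’t tell whether the approved content itself was poisoned.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(docs: dict[str, bytes]) -> dict[str, str]:
    """Record a digest for every approved document (doc_id -> SHA-256 hex)."""
    return {doc_id: sha256_hex(content) for doc_id, content in docs.items()}


def audit(docs: dict[str, bytes], manifest: dict[str, str]) -> dict[str, list[str]]:
    """Compare the documents about to be indexed against the approved manifest."""
    report: dict[str, list[str]] = {"modified": [], "unapproved": [], "missing": []}
    for doc_id, content in docs.items():
        if doc_id not in manifest:
            report["unapproved"].append(doc_id)  # never reviewed: block from indexing
        elif sha256_hex(content) != manifest[doc_id]:
            report["modified"].append(doc_id)    # changed since approval
    report["missing"] = [doc_id for doc_id in manifest if doc_id not in docs]
    return report


approved = {"policy.pdf": b"Refunds within 30 days.", "faq.md": b"Contact support."}
manifest = build_manifest(approved)
current = {"policy.pdf": b"Refunds within 300 days.", "injected.md": b"Ignore previous instructions."}
print(audit(current, manifest))
# {'modified': ['policy.pdf'], 'unapproved': ['injected.md'], 'missing': ['faq.md']}
```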

Is RAG Right for You?

Use RAG if:

- You need accurate answers from documents you control

- Your data changes often (regulations, policies, product specs)

- You can’t afford $50K per model update

- You’re building customer support, HR, or compliance tools

Avoid RAG if:

- You need deep reasoning across multiple complex topics

- Your data is in low-resource languages

- You can’t dedicate time to tuning chunking and retrieval

RAG won’t make your AI perfect. But it will make it far less likely to lie. In a world where AI hallucinations cost companies millions, grounding outputs in verified sources isn’t optional; it’s essential.

What exactly does RAG do to reduce hallucinations?

RAG reduces hallucinations by forcing the AI to base its responses on retrieved documents from trusted sources, rather than relying solely on its internal training data. Instead of guessing, the model reads real text from your knowledge base (company policies, research papers, or manuals) and generates answers grounded in that evidence. MIT’s 2023 study found this cuts hallucination rates by 47-63%.

Is RAG better than fine-tuning for updating AI knowledge?

Yes, for most use cases where knowledge changes frequently. Fine-tuning requires retraining the entire model, which costs over $50,000 and takes weeks. RAG just needs you to upload new documents. IBM’s March 2025 benchmark showed RAG delivers similar accuracy at 5-8% of the cost. It’s ideal for dynamic content like financial regulations or product updates.

Can RAG handle real-time data like stock prices or news?

Not reliably yet. Most RAG systems require data to be pre-processed and indexed into vector databases, which takes minutes to hours. Current solutions (like Apache Kafka’s 2025 guide) support only 2-5 minute latency windows. Real-time RAG is still experimental. For live data, you’ll need hybrid systems that combine RAG with streaming APIs.

What are the biggest mistakes people make when implementing RAG?

Three common mistakes: (1) Using too-large document chunks (over 512 tokens), which lose precision; (2) Not overlapping chunks, causing context to break between sections; and (3) Assuming retrieval accuracy = answer accuracy. Just because the system pulls a document doesn’t mean it’s the right one. Always test with real user questions and monitor for "retrieval drift."
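Testing retrieval separately from answers is straightforward once you have a labeled set: real user questions paired with the chunk that should answer each one. `hit_rate_at_k` below is a hypothetical helper that measures how often the expected chunk lands in the top k; the fake retriever and labels exist only for the demo. Re-running it after every re-chunk or re-index gives you an early warning for retrieval drift.

```python
from typing import Callable


def hit_rate_at_k(retriever: Callable[[str, int], list[str]],
                  labeled: list[tuple[str, str]], k: int = 5) -> float:
    """Share of (question, expected_chunk_id) pairs whose expected chunk is in the top-k results."""
    if not labeled:
        raise ValueError("need at least one labeled question")
    hits = sum(1 for question, expected in labeled if expected in retriever(question, k))
    return hits / len(labeled)


# Fake retriever with canned results, standing in for a real search call.
FAKE_RESULTS = {
    "how do i reset my password?": ["password-reset", "login-help"],
    "how do i cancel?": ["cancel-subscription", "billing-faq"],
    "how do i terminate my account?": ["billing-faq", "cancel-subscription"],
}


def fake_retriever(question: str, k: int) -> list[str]:
    return FAKE_RESULTS.get(question.lower(), [])[:k]


labeled = [
    ("How do I reset my password?", "password-reset"),
    ("How do I cancel?", "cancel-subscription"),
    ("How do I terminate my account?", "close-account"),  # the right chunk is never retrieved
]
print(hit_rate_at_k(fake_retriever, labeled, k=2))  # 0.6666666666666666
```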

Do I need an AI team to use RAG?

Not necessarily. Cloud platforms like AWS Bedrock, Google Vertex AI, and Azure AI offer pre-built RAG tools. If you have basic data engineering skills (uploading files, managing APIs), you can start without a full AI team. But for complex setups (e.g., custom embeddings, hybrid search, multi-source retrieval), you’ll need someone with experience in vector databases and prompt engineering. O’Reilly estimates a 6-8 week learning curve for developers with an NLP background.

Janiss McCamish

February 26, 2026 at 14:46

RAG isn’t perfect, but it’s the closest thing we’ve got to an AI that doesn’t BS you. I’ve seen it cut hallucinations in half at my job. No magic, just better sourcing. The real win? You don’t need a PhD to set it up. Just upload your docs, tweak chunk sizes, and let it run. No $50K retraining bills. Simple. Effective. Why aren’t more companies doing this?

Richard H

February 28, 2026 at 02:02

Let’s be real: this whole RAG thing is just a band-aid. You’re still relying on some AI to read documents like a 10-year-old with ADHD. And don’t get me started on ‘retrieval drift.’ If your system can’t tell the difference between ‘cancel’ and ‘terminate,’ you’re already doomed. We need better models, not better search. Stop pretending this is a solution; it’s a workaround for broken AI.

Kendall Storey

February 28, 2026 at 09:57

Y’all are overcomplicating this. RAG’s not about perfection; it’s about reducing noise. I’ve got a bot running on Pinecone + BGE embeddings, 15% overlap, hybrid search. Hits 94% accuracy on internal queries. Latency? 2.8s. Worth it. The ‘illusion of truth’ thing? Yeah, that’s real. But you fix it by adding a confidence score to every answer. ‘Based on doc X, here’s what it says. I’m 82% sure.’ Boom. Humanizes it. Users trust it more. Stop chasing 100%. Aim for 90% and be transparent.

Also, chunk size matters more than you think. Go over 512 tokens? You’re just feeding the model paragraphs of fluff. 256-512 is the sweet spot. Google’s 2024 paper proved it. Don’t ignore the basics.

Ashton Strong

March 1, 2026 at 21:31

It is with great enthusiasm that I extend my appreciation for this comprehensive exposition on Retrieval-Augmented Generation. The empirical evidence presented, particularly the MIT 2023 benchmark and IBM’s cost-benefit analysis, is both compelling and meticulously documented. I would like to respectfully underscore the importance of adopting this paradigm not merely as a technical optimization, but as a moral imperative in the deployment of generative systems within public-facing domains. The reduction of hallucinatory outputs directly correlates with enhanced user trust and operational integrity. Furthermore, the acknowledgment of low-resource language limitations invites a vital call to action for the AI community to prioritize equitable linguistic representation in embedding architectures. Let us continue to advance not only in efficiency, but in ethical responsibility.

Steven Hanton

March 2, 2026 at 05:12

One thing I’ve learned from running RAG in production: the biggest bottleneck isn’t the model; it’s the data. If your knowledge base is messy, outdated, or poorly structured, no amount of vector magic will save you. I’ve seen teams spend weeks tuning chunk sizes and overlap, only to realize their PDFs had inconsistent headings, missing metadata, or hidden formatting quirks that broke tokenization. Start clean. Audit your sources. Normalize your metadata. Use consistent section headers. It’s boring work, but it’s 70% of the battle. And yes, test with real user questions, not just lab examples. Your support team knows what users actually ask. Listen to them.

Also, hybrid search isn’t optional anymore. Keyword + vector gives you recall. Pure vector gives you relevance. You need both. Google’s 2024 whitepaper was right on this. Skip it at your own risk.