Task Decomposition Strategies for LLM Agents: A Guide to Complex Planning

Apr, 7 2026

Ever feel like your AI agent hits a wall when you give it a complex, multi-step project? You're not alone. Most Large Language Models (LLMs) run into a cognitive ceiling: they can chat effortlessly but often trip over their own feet when a task requires deep reasoning or a long sequence of precise steps. This is where task decomposition comes in: breaking a complex problem into smaller, manageable subtasks that an AI can solve more reliably. Instead of asking the model to "build a whole website," you teach it to first map the requirements, then design the schema, then write the HTML, and finally the CSS.

If you've ever seen an agent "hallucinate" a solution halfway through a complex query, it's likely because the task was too big for its context window or reasoning capacity. Slicing the problem into pieces doesn't necessarily make the AI faster, but it can make it fundamentally more accurate. In some high-stakes benchmarks, like database querying, this approach has boosted accuracy by as much as 40%.

The Core Frameworks Changing the Game

We've moved past simple prompts. Today, developers use structured frameworks to handle the heavy lifting. One of the most advanced is ACONIC (Analysis of CONstraint-Induced Complexity). Introduced in 2025, ACONIC doesn't just guess where to split a task; it treats the problem as a constraint satisfaction problem. By using a technical measure called "treewidth," it identifies the most complex parts of a task and isolates them, which significantly helps when dealing with combinatorial reasoning or the SATBench benchmarks.
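To make "treewidth" a little more concrete: treat each variable in a task as a node and connect two variables whenever they appear in the same constraint. The sketch below is not ACONIC's implementation (its internals aren't described here); it is a standard greedy min-degree elimination heuristic, which returns an upper bound on treewidth rather than the exact value, since computing exact treewidth is NP-hard. The function name `treewidth_upper_bound` is hypothetical.

```python
from itertools import combinations


def treewidth_upper_bound(edges: list[tuple[str, str]]) -> int:
    """Greedy min-degree elimination on a constraint graph.

    Each variable is a node; an edge means two variables share a constraint.
    Returns an upper bound on the graph's treewidth (not necessarily exact).
    """
    # Build an adjacency map for the constraint graph.
    adj: dict[str, set[str]] = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)

    width = 0
    while adj:
        # Eliminate the variable with the fewest remaining neighbours.
        node = min(adj, key=lambda n: len(adj[n]))
        neighbours = adj.pop(node)
        width = max(width, len(neighbours))
        # Connect the eliminated node's neighbours into a clique ("fill-in" edges).
        for a, b in combinations(neighbours, 2):
            adj[a].add(b)
            adj[b].add(a)
        # Remove every reference to the eliminated node.
        for n in neighbours:
            adj[n].discard(node)
    return width


chain = [("a", "b"), ("b", "c"), ("c", "d")]  # each constraint links two neighbours
cycle = chain + [("d", "a")]                  # one extra constraint closes a loop
print(treewidth_upper_bound(chain))  # 1
print(treewidth_upper_bound(cycle))  # 2
```

Roughly speaking, a low value suggests the constraints are loosely coupled and the problem splits into small, nearly independent pieces, while a high value flags a tightly interlocked core that a decomposition has to handle as a unit.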

Then there's the Task Navigator framework, which is a lifesaver for multimodal agents. If you're working with an AI that needs to "see" images and answer complex questions, Task Navigator breaks a single visual query into several smaller, image-related sub-questions. It then refines these answers to ensure the final conclusion actually makes sense based on the visual evidence.

For those focused on logic and math, Recursion of Thought (RoT) is the go-to. It's designed to fight the context limit by recursively breaking down problems, which makes it a good fit for those nightmare multi-digit arithmetic tasks that usually make LLMs sweat. If you need a blend of language and execution, Chain-of-Code (CoC) integrates actual code execution into the reasoning loop, so the AI doesn't just "predict" a math answer but actually calculates it.
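Here is the divide-and-recurse principle behind RoT, shown in plain Python for multi-digit addition. It is an illustration, not RoT itself: in RoT the model spawns and answers sub-problems in separate contexts, whereas here ordinary recursion stands in for those model calls. The function names are hypothetical. Note the dependency the split exposes: the low half must be solved first, because its carry feeds the high half.

```python
def add_with_carry(a: str, b: str, carry_in: int = 0) -> tuple[str, int]:
    """Add two equal-length digit strings by recursively splitting them in half.

    Returns (sum digits, carry out of the leftmost digit).
    """
    if len(a) == 1:
        # Base case: a single-digit sub-problem small enough to solve directly.
        s = int(a) + int(b) + carry_in
        return str(s % 10), s // 10
    mid = len(a) // 2
    # Solve the low (rightmost) half first: its carry is an input to the high half.
    low, carry = add_with_carry(a[mid:], b[mid:], carry_in)
    high, carry_out = add_with_carry(a[:mid], b[:mid], carry)
    return high + low, carry_out


def recursive_add(x: int, y: int) -> int:
    """Add two non-negative integers via the recursive decomposition above."""
    a, b = str(x), str(y)
    width = max(len(a), len(b))
    digits, carry = add_with_carry(a.zfill(width), b.zfill(width))
    return int(("1" if carry else "") + digits)


print(recursive_add(999, 1))  # 1000
```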

| Strategy | Primary Use Case | Key Benefit | Trade-off |
|---|---|---|---|
| ACONIC | Constraint/Database tasks | High precision (up to 40% gain) | High implementation complexity |
| Task Navigator | Multimodal (Image/Text) | Improved visual reasoning | Requires refinement loop |
| Recursion of Thought | Deep Math/Logic | Overcomes context limits | Increased latency |
| Chain-of-Code | Calculations/Data Ops | Eliminates calculation errors | Requires a secure code sandbox |

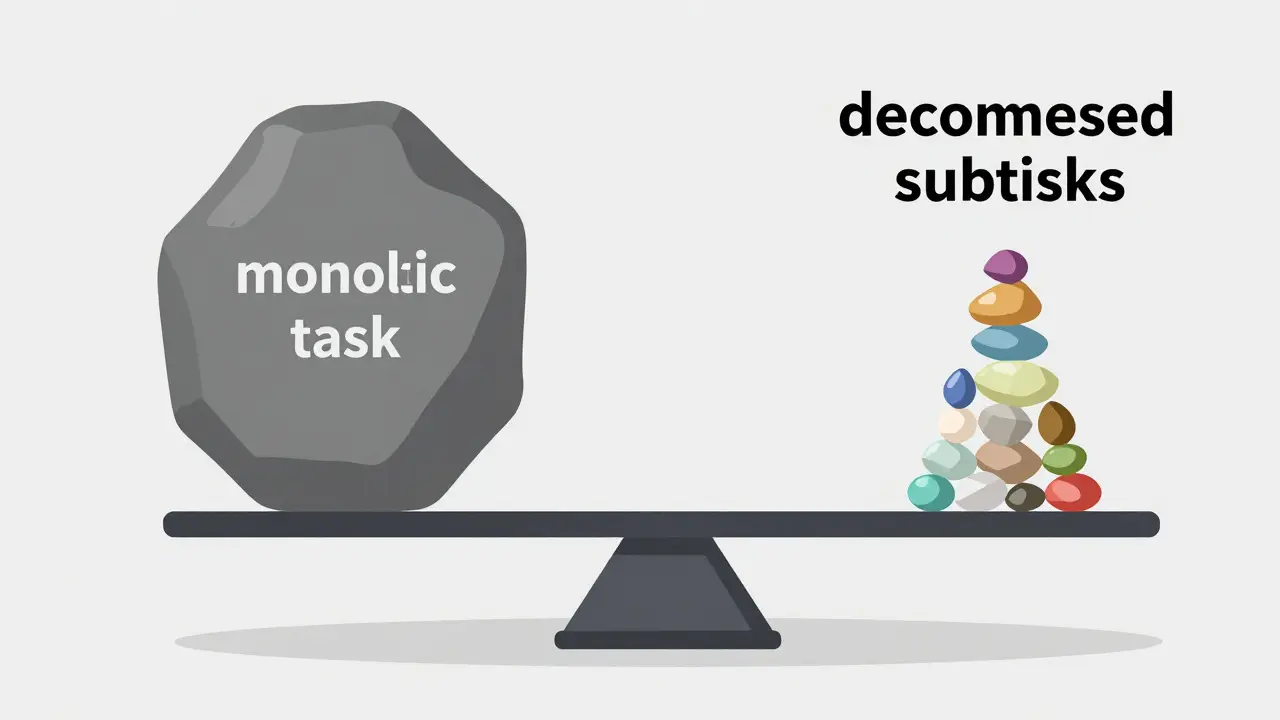

Finding the "Goldilocks" Zone of Granularity

One of the biggest traps developers fall into is over-decomposition. You might think that breaking a task into 50 tiny steps is the safest bet, but that's where "coordination overhead" kicks in. Every time you create a new subtask, you add a layer of communication. If the subtasks are too small, the AI spends more time managing the list than actually solving the problem.

Think of it like this: if you're baking a cake, telling the AI to "crack an egg" is a subtask. Telling it to "move the egg 2 inches to the left, then 1 inch down, then release it" is over-decomposition. You've fragmented the context so much that the AI might forget it's even making a cake.

Amazon Science research from 2025 highlights a fascinating trade-off. If the complexity of a single monolithic task grows linearly with its size n, that is O(n), then splitting it into k parallel subtasks can bring the complexity of each individual subtask down to O(n/k). The goal is to keep subtasks specific enough to be actionable but broad enough to maintain the original intent. According to experts like Dr. Yisong Yue, finding this balance is currently more of an art than a science.
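A minimal sketch of the O(n/k) idea, assuming the work is a list of n independent items (say, records to extract) and that `worker` stands in for a hypothetical sub-agent call. Each of the k subtasks receives at most ⌈n/k⌉ items; the fan-in step that collects results is part of the coordination cost discussed above. The helper names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_subtasks(items: list, k: int) -> list[list]:
    """Split n items into k contiguous chunks whose sizes differ by at most one."""
    base, extra = divmod(len(items), k)
    chunks, start = [], 0
    for i in range(k):
        # The first `extra` chunks take one additional item each.
        size = base + (1 if i < extra else 0)
        chunks.append(items[start:start + size])
        start += size
    return chunks


def run_parallel(items: list, k: int, worker) -> list:
    """Fan out: apply `worker` to each chunk concurrently; fan in: collect results in order."""
    chunks = split_into_subtasks(items, k)
    with ThreadPoolExecutor(max_workers=k) as pool:
        return list(pool.map(worker, chunks))


records = list(range(10))
print([len(c) for c in split_into_subtasks(records, 3)])  # [4, 3, 3]
print(run_parallel(records, 3, sum))                      # [6, 15, 24]
```

Raising k shrinks each subtask, but every extra chunk adds another call to launch and another result to merge, which is the over-decomposition trap in miniature.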

The Practical Cost of Better Reliability

Let's be real: decomposition isn't free. The most immediate hit is latency. Because these agents often work sequentially, meaning step B can't start until step A finishes, you're looking at a potential 35% increase in response time. If you're building a real-time chatbot, that's a huge deal. However, for a financial analysis tool or a healthcare diagnostic assistant, a few extra seconds are a fair price to pay for a massive drop in hallucinations.
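How much latency decomposition adds depends on the shape of the plan. A rough way to estimate it, assuming you know (or can measure) each step's duration and which steps it waits on: the end-to-end time is the longest dependency path, so a purely sequential chain pays for every step in turn, while independent steps overlap. The step names and durations below are made-up illustrative numbers, not benchmarks; `graphlib` is in Python's standard library from 3.9.

```python
from graphlib import TopologicalSorter


def end_to_end_latency(durations: dict[str, float], deps: dict[str, set[str]]) -> float:
    """Earliest time the whole plan can finish if independent steps run concurrently.

    durations: estimated seconds per step.
    deps: step -> set of steps that must finish before it can start.
    """
    # Include every step, even ones with no dependencies.
    graph = {step: deps.get(step, set()) for step in durations}
    finish: dict[str, float] = {}
    # static_order() yields each step only after all of its dependencies.
    for step in TopologicalSorter(graph).static_order():
        start = max((finish[d] for d in graph[step]), default=0.0)
        finish[step] = start + durations[step]
    return max(finish.values(), default=0.0)


durations = {"plan": 2.0, "search_docs": 3.0, "query_db": 3.0, "report": 1.0}
chain = {"search_docs": {"plan"}, "query_db": {"search_docs"}, "report": {"query_db"}}
fan_out = {"search_docs": {"plan"}, "query_db": {"plan"}, "report": {"search_docs", "query_db"}}
print(end_to_end_latency(durations, chain))    # 9.0
print(end_to_end_latency(durations, fan_out))  # 6.0
```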

There's also the engineering tax. Many developers using LangChain have reported that while reliability goes up, debugging becomes a nightmare. When an agent fails on step 7 of a 12-step decomposed plan, you have to trace back through a chain of dependencies to find out where the logic derailed. It's not as simple as tweaking a single prompt anymore; you're now managing a workflow.

Despite the friction, the ROI is hard to ignore. Some companies have reported reducing infrastructure costs by 62% by using smaller, cheaper models to handle decomposed subtasks instead of relying on one massive, expensive model to do everything. It's the AI equivalent of hiring a team of specialized juniors instead of one incredibly expensive, overworked consultant.

Implementing Decomposition in Your Workflow

If you're ready to move from monolithic prompts to a decomposed agent architecture, don't try to automate everything on day one. Start by mapping your "natural decomposition points." These are the logical breaks where a human would naturally pause to check their work.

- Analyze the Task: Look for dependencies. Does step B require the output of step A? If so, you have a sequential chain. Can steps B and C happen at the same time? That's a parallel opportunity.

- Choose Your Handler: Use a high-reasoning model (like GPT-4 or Claude 3.5) for the initial decomposition (the "Planner") and smaller, faster models for the execution of subtasks (the "Workers").

- Build a Feedback Loop: Don't just pass the output of one subtask to the next. Implement a verification step where the agent asks, "Does this answer satisfy the requirements of the subtask?"

- Manage Context: Use context summarization. Instead of passing the entire history of five subtasks to the sixth, pass a condensed summary of the key findings.
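The "Analyze the Task" step becomes mechanical once dependencies are written down. A sketch using the standard-library `graphlib` module (Python 3.9+): it groups subtasks into waves, where everything in one wave can run in parallel and each wave waits for the previous one. The plan and its step names are hypothetical, loosely based on the website example from the introduction.

```python
from graphlib import TopologicalSorter


def execution_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group subtasks into waves of mutually independent steps.

    deps maps each step to the set of steps whose output it needs.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a circular dependency
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make the output deterministic
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave finished so dependants become ready
    return waves


plan = {
    "schema": {"requirements"},
    "html": {"schema"},
    "css": {"schema"},
    "review": {"html", "css"},
}
print(execution_waves(plan))
# [['requirements'], ['schema'], ['css', 'html'], ['review']]
```

A wave with one entry is a sequential bottleneck; a wave with several entries is where a parallel fan-out pays off.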

A common pitfall is ignoring conditional branching. Real-world tasks aren't straight lines. If a subtask fails, your agent needs a way to loop back or try an alternative path. Using LLM-driven dynamic decomposition allows the agent to change its plan on the fly if it discovers new information during the process.
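One simple way to give an agent a path to fall back on, assuming each alternative can be wrapped as a function and that you have some check for an acceptable result. This covers only the "try an alternative path" half; fully dynamic re-planning would ask the planner model for a new list of steps instead of walking a fixed one. `run_with_fallbacks`, `query_database`, and `search_cached_reports` are hypothetical names.

```python
from typing import Callable


def run_with_fallbacks(task: str, strategies: list[Callable[[str], str]],
                       is_valid: Callable[[str], bool]) -> str:
    """Try each strategy in order, branching to the next one when a strategy
    raises an error or its output fails validation."""
    errors = []
    for strategy in strategies:
        try:
            result = strategy(task)
        except Exception as exc:  # a failed tool or model call counts as a failed branch
            errors.append(f"{strategy.__name__}: {exc}")
            continue
        if is_valid(result):
            return result
        errors.append(f"{strategy.__name__}: rejected output {result!r}")
    raise RuntimeError(f"all strategies failed for {task!r}: {errors}")


def query_database(task: str) -> str:
    raise ConnectionError("database timeout")  # simulated failure of the primary path


def search_cached_reports(task: str) -> str:
    return f"{task} summary (from cached report)"  # simulated fallback path


print(run_with_fallbacks("Q3 revenue", [query_database, search_cached_reports],
                         is_valid=lambda r: "summary" in r))
# Q3 revenue summary (from cached report)
```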

Will task decomposition always improve accuracy?

Not necessarily. For simple, creative, or short-form tasks, decomposition can actually hurt. It introduces coordination overhead and can fragment the creative flow. It's most effective for tasks with high logical density, like coding, math, or complex data extraction.

How do I handle errors that propagate through subtasks?

This is known as the "cascading error" problem. The best way to mitigate this is through a Select-Then-Decompose approach or by adding a validation layer after each subtask. If the output of a subtask is flagged as incorrect, the agent should be programmed to re-attempt that specific step before moving forward.
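A minimal sketch of the validation-layer approach (this is not Select-Then-Decompose): each subtask's output is checked before it becomes the next subtask's input, a failing step is retried in place, and the run stops rather than letting a bad intermediate result cascade downstream. The step functions and validators are hypothetical placeholders for model calls and whatever checks suit your task.

```python
from typing import Callable

Step = Callable[[str], str]
Validator = Callable[[str], bool]


def run_pipeline(steps: list[tuple[str, Step, Validator]], initial: str,
                 max_attempts: int = 3) -> str:
    """Run steps in sequence, validating each output before it feeds the next step."""
    value = initial
    for name, step, validate in steps:
        for _ in range(max_attempts):
            candidate = step(value)
            if validate(candidate):
                value = candidate  # only validated output moves forward
                break
        else:
            # Every attempt failed: stop here instead of propagating a bad result.
            raise ValueError(f"step {name!r} failed validation after {max_attempts} attempts")
    return value


attempts = iter(["", "42"])  # simulated model: empty output first, then a valid one
steps = [
    ("extract_number", lambda text: next(attempts), str.isdigit),
    ("double", lambda s: str(int(s) * 2), str.isdigit),
]
print(run_pipeline(steps, "The answer is 42"))  # 84
```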

Which frameworks are best for beginners?

LangChain and LlamaIndex are the best starting points because they have huge communities and pre-built modules for decomposition. They significantly reduce the initial setup time, often bringing it down from dozens of hours of custom coding to just a few hours of configuration.

What is the difference between Chain-of-Thought and Task Decomposition?

Chain-of-Thought is essentially a "stream of consciousness" where the model thinks out loud in one go. Task Decomposition is more structural; it separates the planning of the steps from the execution of the steps. While CoT is a prompt technique, decomposition is an architectural strategy.
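The structural difference is easy to see in code: with decomposition, planning and execution are separate calls. In the sketch below, `planner` and `worker` are hypothetical callables; in practice the planner might wrap a high-reasoning model and the worker a smaller, faster one, as in the Planner/Workers split described earlier. The stubs in the usage lines are placeholders, not real model calls.

```python
from typing import Callable

Planner = Callable[[str], list[str]]
Worker = Callable[[str, str], str]


def plan_and_execute(goal: str, planner: Planner, worker: Worker) -> list[str]:
    """Plan once up front, then execute each step with a running context."""
    steps = planner(goal)  # planning happens in its own call, before any execution
    context = f"Goal: {goal}"
    results = []
    for step in steps:
        output = worker(step, context)
        results.append(output)
        # Record the finished step so later workers can see what has been done.
        context += f"\nDone: {step} -> {output}"
    return results


def stub_planner(goal: str) -> list[str]:
    return ["map requirements", "design schema", "write HTML", "write CSS"]


def stub_worker(step: str, context: str) -> str:
    return f"{step}: ok"


print(plan_and_execute("build a website", stub_planner, stub_worker))
# ['map requirements: ok', 'design schema: ok', 'write HTML: ok', 'write CSS: ok']
```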

Does ACONIC work for non-technical tasks?

ACONIC is specifically designed for tasks that can be modeled as constraint satisfaction problems. While it's a powerhouse for database queries and logical puzzles, it's overkill (and likely ineffective) for tasks like writing a poem or summarizing a meeting.

Moving Forward: The Future of Agent Planning

We're heading toward a world where decomposition isn't something you manually program, but something the AI optimizes in real-time. Future updates to frameworks like ACONIC are already introducing automated treewidth calculation, and Google Research is eyeing automated boundary detection.

If you're building in this space, the most important takeaway is to stop treating the LLM as a magic box and start treating it as a manager of a workflow. Whether you're using LlamaIndex for data retrieval or a custom ACONIC implementation for logic, the key to reliability is structural discipline. Break the problem down, validate the pieces, and only then assemble the final answer.