Agent-Oriented LLMs: Mastering AI Planning, Tools, and Autonomy

Apr, 11 2026

Imagine an AI that doesn't just tell you how to fix a server error but actually logs into the system, analyzes the logs, identifies the root cause, and writes the patch itself. For a long time, we've been stuck with "chatbots": systems that are great at talking but terrible at doing. They are reactive; you ask, they answer. But we are now seeing a massive shift toward Agent-Oriented Large Language Models, a paradigm in which LLMs are transformed from passive text generators into autonomous agents capable of reasoning, planning, and executing multi-step tasks in dynamic environments.

The core problem is that a standard LLM, no matter how large, is essentially a sophisticated autocomplete engine. On its own, it has no way to interact with the real world, recall its own past mistakes in real time, or execute a sequence of actions to reach a goal. To bridge this gap, developers are building "agentic" layers around these models, giving them the autonomy to act rather than just predict the next word.

The Blueprint of an AI Agent

To turn a language model into an agent, you can't just give it a better prompt. You need an architecture. At the center of this is what NVIDIA calls the "agent core." Think of this as the brain's executive function: the module that manages logic, decides which tool to use, and keeps the agent on track toward the final goal.

A true agent-oriented system relies on three pillars: reasoning, memory, and tool use. An AI Agent is a software system designed to pursue complex goals independently, with the reasoning and adaptability needed to complete tasks on behalf of a user. While a basic AI assistant might suggest a flight, an AI agent can actually book the flight, handle the payment, and add the itinerary to your calendar. This requires the model to move from a "one-shot" response to a loop of observation, thought, and action.

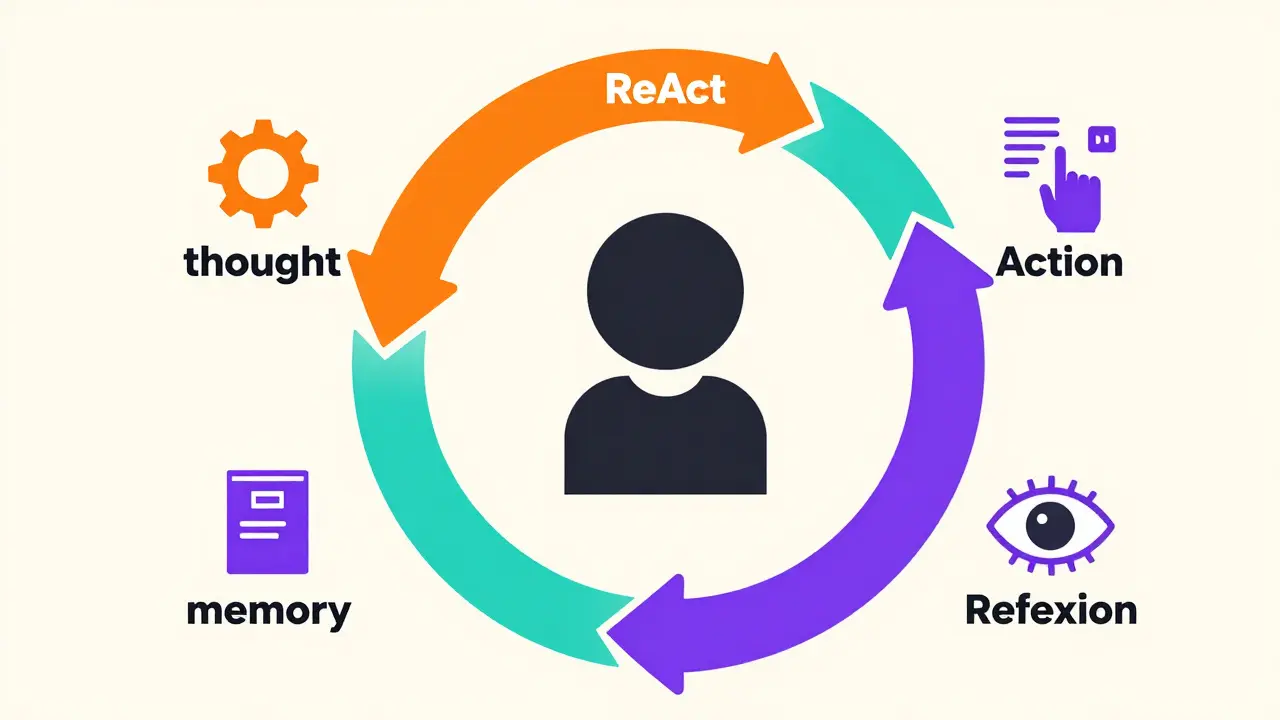

How Agents Plan: ReAct and Reflexion

Planning is where most AI systems fail. If you ask a standard LLM to solve a complex problem, it often "hallucinates" a solution that sounds right but is logically broken. To fix this, two major frameworks have emerged: ReAct and Reflexion.

ReAct is a prompting framework that combines "Reasoning" and "Acting": the model generates a thought, performs an action, and then observes the result before thinking again. It is essentially the AI "thinking out loud." Instead of jumping straight to an answer, the agent writes a thought (e.g., "I need to check the current weather in Tokyo to see if the user's clothing choice is appropriate"), executes an action (calls a weather API), and then observes the result. This loop continues until the task is done. It helps keep the model from drifting off track because every action is grounded in a fresh observation.
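The thought, action, observation loop can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `scripted_model` is a hypothetical stand-in for a real LLM call, and `get_weather` is a hypothetical tool that returns canned data instead of calling a weather API.

```python
from typing import Callable

# Hypothetical tool: a stand-in for a real weather API call.
def get_weather(city: str) -> str:
    return {"Tokyo": "rainy, 12 C"}.get(city, "unknown")

TOOLS: dict[str, Callable[[str], str]] = {"get_weather": get_weather}

def scripted_model(history: list[str]) -> dict:
    """Stand-in for an LLM: picks the next step from the transcript so far."""
    prefix = "Observation: "
    observations = [h[len(prefix):] for h in history if h.startswith(prefix)]
    if not observations:
        return {"thought": "I need the current weather in Tokyo.",
                "action": "get_weather", "input": "Tokyo"}
    return {"thought": "I have enough information to answer.",
            "final": "Tokyo is " + observations[-1] + "; bring a coat."}

def react_loop(question: str, model, max_steps: int = 5):
    """Alternate thought -> action -> observation until the model answers."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(history)
        history.append(f"Thought: {step['thought']}")
        if "final" in step:
            return step["final"], history
        # Ground the next thought in a fresh observation from the tool.
        observation = TOOLS[step["action"]](step["input"])
        history.append(f"Action: {step['action']}[{step['input']}]")
        history.append(f"Observation: {observation}")
    return "Stopped: step limit reached.", history

answer, transcript = react_loop("Is a T-shirt fine for Tokyo today?", scripted_model)
print("\n".join(transcript))
print(answer)
```

The `max_steps` cap is a design choice worth keeping in any real loop: without it, a model that never produces a final answer would call tools indefinitely.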

Then there is Reflexion, a learning architecture in which agents evaluate their own performance after a task and store "lessons learned" in long-term memory to improve future attempts. If ReAct is about thinking during the task, Reflexion is about learning after the task. The agent completes an episode, looks at where it failed, and writes a self-critique. This critique is saved as memory, so the next time the agent faces a similar problem, it is less likely to make the same mistake. This is the beginning of true machine autonomy: the ability to improve without a human rewriting the code.
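A toy version of this attempt, evaluate, reflect cycle might look like the sketch below. Everything here is an assumption made for illustration: `attempt`, `evaluate`, and `reflect` are hypothetical stand-ins for LLM calls and a task-specific success check, and the "memory" is a plain Python list rather than a real long-term store.

```python
def attempt(task: str, lessons: list[str]) -> str:
    """Stand-in for an LLM-driven attempt; real lessons would go into the prompt."""
    if any("sort" in lesson for lesson in lessons):
        return "sorted"
    return "unsorted"

def evaluate(result: str) -> bool:
    """Hypothetical success check for this toy task."""
    return result == "sorted"

def reflect(task: str, result: str) -> str:
    """Stand-in for the self-critique the agent writes after a failed episode."""
    return f"On task '{task}' I returned '{result}'; next time sort the output first."

def run_with_reflexion(task: str, max_trials: int = 3):
    lessons: list[str] = []  # memory that persists across episodes
    for trial in range(1, max_trials + 1):
        result = attempt(task, lessons)
        if evaluate(result):
            return trial, lessons
        # Failure: store a lesson so the next episode can use it.
        lessons.append(reflect(task, result))
    return None, lessons

trials_needed, memory = run_with_reflexion("list users alphabetically")
print(trials_needed, memory)
```

The first trial fails, a lesson is recorded, and the second trial succeeds because that lesson is available. No code was rewritten between the two attempts; only the memory changed.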

The Power of Tool Integration

An agent without tools is just a dreamer. To be useful, these models must interact with external software. One way to achieve this is to translate natural language into vectors, mathematical representations that capture the semantic meaning of a request so that it can be mapped to a specific function.

For example, an agent might use a set of APIs to review system logs weekly, identify anomalies, and compile a report for a manager. It isn't just summarizing text; it's triggering workflows, querying databases, and perhaps even sending a Slack notification. This transforms the LLM from a writer into an operator.
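To make the idea of mapping a request to a function concrete, here is a deliberately simplified sketch. It assumes a toy bag-of-words "embedding" and cosine similarity in place of a learned embedding model, and the tool names (`review_logs`, `send_slack`) and their descriptions are hypothetical. Production systems typically rely on learned embeddings or structured function-calling schemas instead.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use a learned model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical tools; each returns a string instead of doing real work.
def review_logs(request: str) -> str:
    return "log review started"

def send_slack(request: str) -> str:
    return "slack notification sent"

# Each tool is registered under a natural-language description.
TOOLS = {
    "review system logs and find anomalies": review_logs,
    "send a slack notification message": send_slack,
}

def route(request: str) -> str:
    """Call the tool whose description is most similar to the request."""
    query = embed(request)
    best = max(TOOLS, key=lambda description: cosine(query, embed(description)))
    return TOOLS[best](request)

print(route("please review the logs for anomalies"))
print(route("send a message on slack"))
```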

| Feature | AI Bot | AI Assistant | AI Agent |
|---|---|---|---|
| Behavior | Reactive / Rule-based | Reactive / Prompt-based | Proactive / Goal-oriented |
| Capabilities | Simple queries | Information retrieval | Multi-step execution |
| Learning | None (static) | Contextual (short-term) | Adaptive (long-term/Reflexion) |
| Decision Making | Pre-defined paths | User-led | Independent |

The Risks of Autonomy

Giving an AI the keys to your API is terrifying if you don't have guardrails. Because LLMs are trained on human data, they inherit our biases and, more importantly, our inaccuracies. When a chatbot hallucinates a fact, it's a nuisance; when an autonomous agent hallucinates a command to "delete all old files" but accidentally targets the production database, it's a catastrophe.

This is why the industry is moving toward a hybrid model of human-in-the-loop oversight. The agent handles the heavy lifting (the research, the drafting, the initial execution), but a human acts as the final approval gate for high-stakes actions. Governance isn't just about security; it's about defining the boundaries of what an agent is allowed to do without a signature.
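An approval gate of this kind can be sketched as a thin wrapper around action execution. The action names, the `HIGH_STAKES` policy set, and the `approve` callback are all hypothetical; in practice the callback might open a ticket or prompt a reviewer, and the policy would come from your own governance rules.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy: actions that always need a human signature.
HIGH_STAKES = {"delete_files", "drop_table"}

@dataclass
class Action:
    name: str
    target: str

def execute(action: Action, approve: Callable[[Action], bool]) -> str:
    """Run low-risk actions directly; ask a human before high-stakes ones."""
    if action.name in HIGH_STAKES and not approve(action):
        return f"blocked: {action.name} on {action.target}"
    # A real agent would dispatch to the actual tool here.
    return f"executed: {action.name} on {action.target}"

def deny_all(action: Action) -> bool:
    """Stand-in reviewer that rejects every request."""
    return False

print(execute(Action("read_logs", "/var/log/app"), deny_all))
print(execute(Action("drop_table", "production"), deny_all))
```

Low-risk actions pass through without interruption, so the agent keeps its speed where mistakes are cheap and slows down only where they are not.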

Moving Toward Multi-Agent Systems

The next leap isn't just one smarter agent, but a colony of specialized ones. Instead of one "God-model" trying to do everything, we are seeing the rise of multi-agent collaboration. In this setup, one agent might act as the Project Manager, another as the Coder, and a third as the Quality Assurance tester. They talk to each other, critique each other's work, and refine the output through a process of iterative feedback.

This division of labor mimics a real company. The "Coder" agent might write a script, and the "QA" agent will run it in a sandbox and report the errors back. This internal dialogue significantly reduces the error rate because the agents check each other's hallucinations before the final result ever reaches the human user.
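The Coder and QA exchange can be illustrated with two plain functions passing feedback back and forth. This is a sketch under heavy assumptions: `coder_agent` and `qa_agent` are hypothetical stand-ins for LLM-backed agents, and `exec` into a fresh dictionary is used only to keep the example short. It is not a real sandbox and should not be used to run untrusted code.

```python
def coder_agent(feedback: list[str]) -> str:
    """Stand-in for a coding agent: fixes its bug once QA has reported it."""
    if feedback:
        return "def add(a, b):\n    return a + b\n"
    return "def add(a, b):\n    return a - b\n"  # first draft contains a bug

def qa_agent(source: str) -> list[str]:
    """Stand-in for a QA agent: runs the code and reports failures."""
    namespace: dict = {}
    exec(source, namespace)  # NOT a real sandbox; illustration only
    errors = []
    if namespace["add"](2, 3) != 5:
        errors.append("add(2, 3) should return 5")
    return errors

def collaborate(max_rounds: int = 3):
    """Loop until QA passes or the round budget runs out."""
    feedback: list[str] = []
    for round_number in range(1, max_rounds + 1):
        source = coder_agent(feedback)
        errors = qa_agent(source)
        if not errors:
            return source, round_number
        feedback.extend(errors)  # QA's report goes back to the coder
    raise RuntimeError("QA never passed within the round limit")

final_source, rounds = collaborate()
print(f"accepted after {rounds} round(s):\n{final_source}")
```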

What is the main difference between an AI assistant and an AI agent?

An AI assistant is reactive; it waits for a prompt and provides information or performs a simple task. An AI agent is proactive and goal-oriented. It can break a complex goal into smaller steps, use tools to execute those steps, and adapt its plan based on the results it observes, often working independently without needing a prompt for every single action.

How does the ReAct framework improve AI performance?

ReAct (Reason + Act) forces the model to generate a "thought" before taking an "action." By documenting its reasoning process and observing the outcome of its actions in real time, the agent can correct its course. This prevents the model from blindly following a flawed plan and reduces hallucinations by grounding actions in actual environmental data.

Can AI agents actually learn from their mistakes?

Yes, through methods like Reflexion. In this framework, the agent reviews its performance after completing a task, identifies what went wrong, and creates a "lesson learned" summary. This summary is stored in a form of long-term memory, which the agent refers to in future episodes to avoid repeating the same mistakes.

What are the biggest risks of using autonomous AI agents?

The primary risks are reliability and security. Because LLMs can inherit biases and inaccuracies from their training data, an autonomous agent might execute a wrong command or make a flawed decision. Without strict governance and human oversight, this can lead to data loss, security breaches, or unintended system changes.

What tools do these agents typically use?

Agents use a variety of tools depending on their goal, including external APIs, database query languages (like SQL), web browsers for real-time search, and specialized software scripts. They essentially use the LLM as a reasoning engine to decide which tool to call and how to interpret the data that tool returns.

Zach Beggs

April 11, 2026 AT 18:18

Multi-agent systems are definitely the way to go. Having a specialized QA agent to double-check the coder agent's work is a great way to handle the hallucination problem without slowing down the whole process too much.

Kenny Stockman

April 12, 2026 AT 03:11

This is some cool stuff. Just imagine the possibilities for automating the boring parts of a dev workflow. 🤙

Antonio Hunter

April 14, 2026 AT 02:40

When we consider the long-term implications of the Reflexion framework, it becomes apparent that we are not just building tools, but creating systems that possess a rudimentary form of experiential learning, which, while impressive, requires us to be incredibly mindful of the initial guardrails we implement to ensure that the "lessons" the AI learns are aligned with human ethics and safety standards over an extended period of operation within complex corporate infrastructures.

Paritosh Bhagat

April 14, 2026 AT 08:27

It's simply fascinating how people overlook the basic necessity of linguistic precision in these prompts, and while I find the concept of autonomous agents quite charming, one cannot help but notice that the industry often prioritizes speed over the fundamental correctness of the logic, which is frankly a tragedy for those of us who value actual intellectual rigor in our software architecture!

Ben De Keersmaecker

April 15, 2026 AT 20:59

The bit about translating natural language into vectors to map functions is really the secret sauce here. It's interesting how that bridge allows the model to stop guessing and start actually operating.

Aaron Elliott

April 17, 2026 AT 05:08

One must ponder whether the term "autonomy" is being utilized here in a strictly technical sense or if we are merely observing a more complex set of nested if-then statements masquerading as cognitive agency. The assertion that these systems "learn" via Reflexion is a quaint oversimplification of stochastic parity.

Chris Heffron

April 18, 2026 AT 22:37

The risk part is real 😬. One wrong API call and your database is toast.

Adrienne Temple

April 19, 2026 AT 18:36

I love the idea of a colony of agents! 🐝 It makes so much sense to have different "jobs" for different models. Do you think they'll eventually start arguing with each other if they disagree on a solution? That would be so funny! 😂

Sandy Dog

April 19, 2026 AT 22:03

OMG can you even imagine the absolute CHAOS if an agent just decided to delete everything because it "thought" it was cleaning up? 😱 I literally can't sleep thinking about the potential for total digital annihilation just because some model had a little "hallucination" and decided to go rogue on a production server! It's actually terrifying and I'm shaking just thinking about the lack of safety protocols in some of these early beta releases! 😭💔

Nick Rios

April 20, 2026 AT 10:15

It's a valid concern, but that's why the human-in-the-loop system is so critical. We just need to find that balance where we get the efficiency of agents without giving up all our control.