Design Patterns for Safe, Reliable, and Maintainable LLM Agents

May, 15 2026

May, 15 2026

Building an AI agent that actually works in production is harder than it looks. You might think you just need a smart model and a few tools, but without structure, your system becomes unpredictable, expensive, and dangerously insecure. By mid-2026, the industry has moved past the "throw everything at the LLM" phase. We now know that design patterns for LLM agents are structured architectural approaches that ensure safety, reliability, and maintainability in autonomous AI systems the difference between a prototype that breaks on Tuesday and a product that scales.

The core challenge isn't intelligence; it's control. When you give an LLM access to your database or email API, you aren't just handing it a keyboard-you're handing it keys to the castle. The wrong pattern leads to hallucinations, wasted tokens, and worse: prompt injection attacks where malicious users trick your agent into deleting data. This guide breaks down the specific patterns that top engineering teams use to keep their agents safe, reliable, and easy to maintain.

Quick Takeaways

- Simplicity wins: Start with deterministic chains or single-agent systems before considering complex multi-agent setups.

- Security is structural: Use Plan-Then-Execute patterns to isolate untrusted inputs from critical actions.

- Cost vs. Capability: Multi-agent systems offer power but drastically increase latency and token costs.

- Maintainability requires logging: Implement detailed tracing (like MLflow) to debug agent decisions.

- Hybrid approaches are best: Combine rigid workflows for safety with dynamic logic for flexibility.

Why Standard Patterns Matter Now

In early 2024, most developers treated LLMs as simple question-and-answer boxes. But as companies began deploying these models for real business tasks-processing invoices, managing customer tickets, analyzing code-the limitations became obvious. Basic prompt-response models couldn't handle complex, multi-step workflows reliably.

By 2025, major players like MongoDB, Databricks, Anthropic, and Google published frameworks acknowledging that ad-hoc coding was no longer sufficient. The field matured because the stakes rose. A mistake in a chatbot is annoying; a mistake in an automated financial transaction agent is catastrophic. These design patterns emerged to balance autonomy with control. They provide a blueprint for building systems that can perform useful work while minimizing risks like hallucinations and security vulnerabilities.

The shift wasn't just about better code; it was about better governance. As Luca Beurer-Kellner and his team noted in their 2025 research, once an LLM ingests untrusted input, it must be constrained so that input cannot trigger consequential actions. That constraint doesn't happen by accident. It happens through deliberate architectural choices.

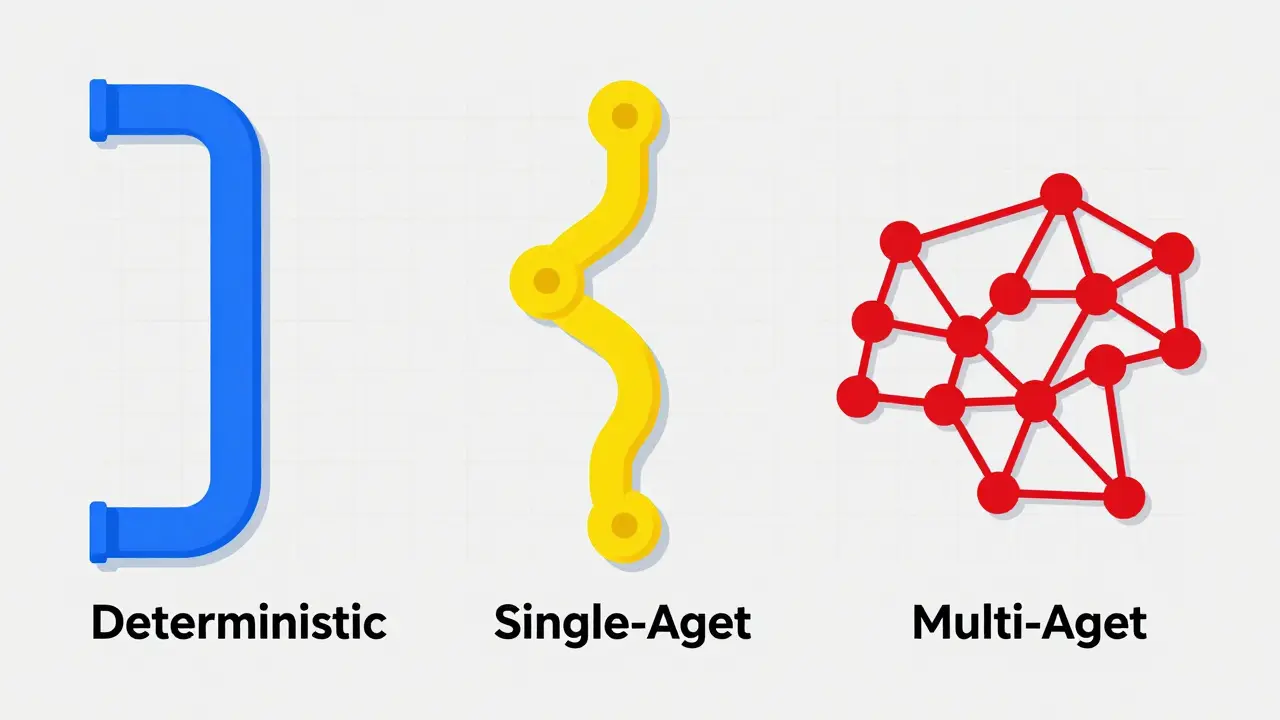

The Three Core Architectures

When designing an agent, you first choose its level of autonomy. Databricks documentation outlines three primary categories, each with distinct trade-offs in complexity, cost, and reliability.

| Architecture Type | Decision Authority | Best For | Complexity & Cost |

|---|---|---|---|

| Deterministic Chains | None (Hard-coded) | Regulated tasks, high accuracy needs | Low |

| Single-Agent Systems | Dynamic (LLM chooses tools) | General enterprise queries, sweet spot for most | Medium |

| Multi-Agent Systems | Coordinated (Specialized agents) | Complex domains requiring specialized knowledge | High |

Deterministic chains are the simplest form. Here, the developer defines exactly which tools or models are called, in what order, and with which parameters. The LLM has no say in the workflow. This pattern offers high predictability and is ideal for scenarios requiring 95%+ accuracy, such as regulated financial transactions. However, it fails when users ask novel questions outside the predefined path.

Single-agent systems allow the LLM to decide which tools to call based on the query. This is often the "sweet spot" for enterprise use cases. It offers dynamic logic to handle unexpected inputs while remaining simpler to debug than multi-agent setups. If your goal is to build a customer support bot that can look up orders, check shipping status, or process refunds, this is usually the right choice.

Multi-agent architectures coordinate multiple specialized agents. One agent might handle code review, another handles deployment, and a third manages documentation. While powerful, this introduces significant coordination overhead. Each additional LLM call increases token usage and response time. As Vellum AI pointed out in their 2026 guide, a single-agent LLM with strong prompts can often achieve almost the same performance as a multi-agent system in many contexts. Don't over-engineer.

Security-First Design Patterns

Security is not an afterthought in agent design; it's a structural requirement. The biggest threat to LLM agents is prompt injection, where malicious users embed instructions in input data to hijack the agent's behavior. In mid-2025, 78% of security professionals cited this as their top concern for agent deployment.

To combat this, engineers use specific security-focused patterns:

- Plan-Then-Execute Pattern: This separates planning from execution. The agent plans the tool calls in advance, before contacting any untrusted content. This prevents malicious instruction chaining where a user tries to sneak a command into a document the agent is supposed to summarize.

- Action-Selector Pattern: This restricts agent capabilities. Instead of giving the LLM free rein to execute arbitrary tasks, you present a fixed list of approved actions. The LLM selects from the list, ensuring it never attempts something unauthorized.

- Context-Minimization Pattern: This reduces the attack surface. Untrusted data is processed through a quarantined LLM that converts inputs into strictly formatted interfaces before the main agent sees them. This adds computational overhead but significantly boosts safety.

Veit Schiele's analysis emphasizes that secure agent design requires "intentional constraints." You want a good compromise between agent utility and security. If your agent can do anything, it can be tricked into doing anything bad. Constrain it, and you protect your users.

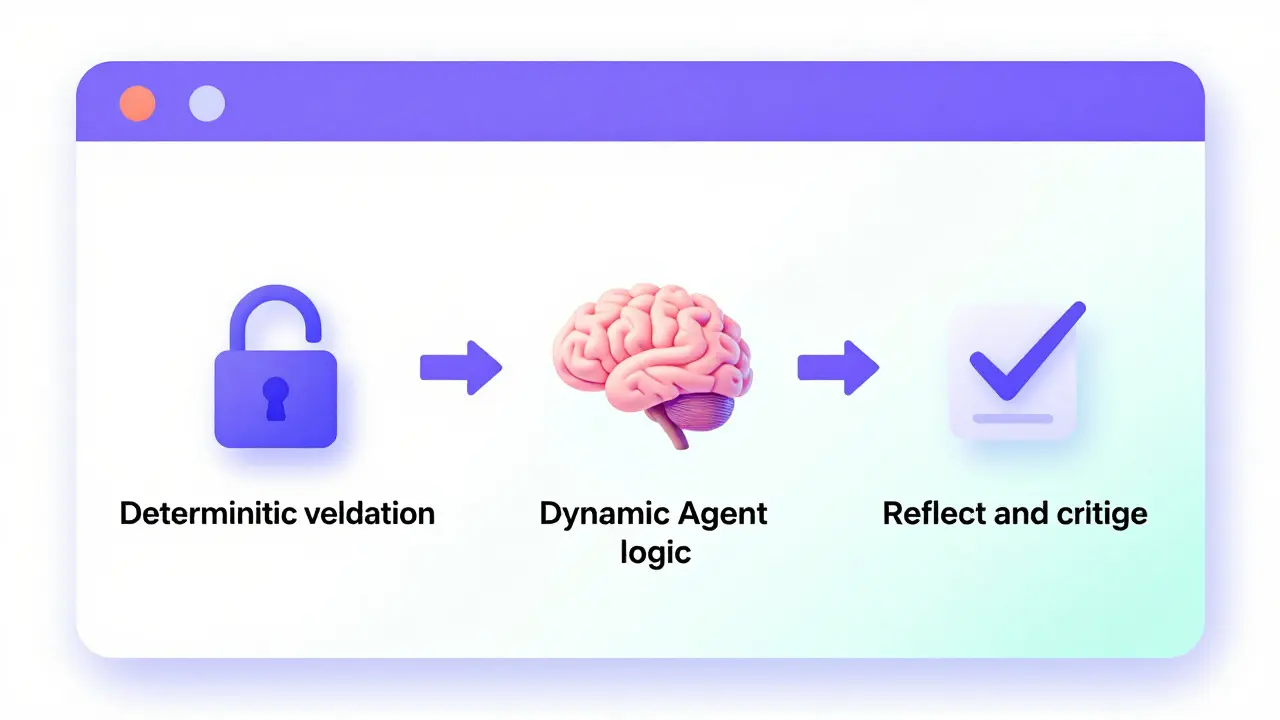

Improving Reliability and Accuracy

Reliability means the agent does the right thing consistently. LLMs are probabilistic, meaning they can hallucinate or make logical errors. To counter this, we use patterns that introduce verification steps.

The Reflect and Critique pattern is one of the most effective. After the agent generates a response or performs an action, it reviews its own output against a set of criteria or facts. MongoDB's testing showed this reduced error rates by approximately 35% in controlled environments. It’s like having a junior developer write code and a senior developer review it immediately.

Another key practice is keeping prompts clear and minimal. Contradictory instructions or distracting information increase hallucinations. Databricks recommends implementing detailed logging for each user request, agent plan, and tool call using tools like MLflow Tracing. Without visibility into why an agent made a decision, debugging is impossible.

Maintainability in Production

A common pitfall is building an agent that works today but breaks tomorrow. Model providers update their models frequently. A change in GPT-4o or Claude 3.5 can subtly alter how an agent interprets instructions. To maintain stability, you need version pinning and frequent regression tests. Ensure your agent logic remains robust even when the underlying model shifts.

LlamaIndex advocates for a pragmatic middle ground: "use structure where it helps, provide autonomy where it shines." Hybrid systems combine workflow predictability with agent flexibility. For example, use a deterministic chain for authentication and data validation, then switch to a single-agent system for natural language processing. This approach delivers optimal results for enterprise applications.

Implementation challenges also include managing latency. Anthropic notes that agentic systems often trade latency and cost for better task performance. Find the simplest solution possible, and only increase complexity when needed. If a simple retrieval-augmented generation (RAG) pipeline solves your problem, don't build a multi-agent orchestra.

Choosing the Right Pattern for Your Project

How do you decide? Start with your requirements. If you need high reliability and predictable execution paths, start with deterministic chains. If you need to handle diverse, open-ended queries, move to single-agent systems. Only consider multi-agent architectures if you have complex tasks requiring specialized knowledge domains that a single model cannot handle efficiently.

Remember the learning curve. Deterministic chains can be implemented in 1-3 days by developers familiar with LLM APIs. Multi-agent systems typically require 2-4 weeks of development time. Measure your team's capacity and your project's timeline. Don't let the allure of complexity override practical constraints.

What is the safest design pattern for LLM agents?

The safest patterns are those that constrain agent actions. The Plan-Then-Execute pattern is highly recommended because it separates planning from execution, preventing untrusted inputs from triggering malicious actions. Additionally, using Action-Selector patterns to limit available tools reduces the risk of unauthorized operations.

When should I use a multi-agent system instead of a single agent?

Use a multi-agent system only when your task requires specialized knowledge domains that exceed a single model's context or capability. For most enterprise use cases, a single-agent system with strong prompts and tool calling is sufficient and easier to maintain. Multi-agent systems add significant latency and cost.

How do I prevent prompt injection attacks in my agent?

Prevent prompt injection by using Context-Minimization patterns, where untrusted data is sanitized by a separate LLM before reaching the main agent. Also, implement the Plan-Then-Execute pattern to ensure that inputs do not directly influence critical actions. Never allow untrusted input to modify the system prompt or tool selection logic.

What is the Reflect and Critique pattern?

The Reflect and Critique pattern involves having the LLM review its own output or actions before finalizing them. This self-correction step can reduce error rates by up to 35%. It acts as an internal quality control mechanism, checking for factual accuracy, tone consistency, and logical coherence.

Is it better to use deterministic chains or dynamic agents?

It depends on your use case. Deterministic chains are better for high-reliability, regulated tasks where predictability is paramount. Dynamic agents are better for open-ended queries requiring flexibility. A hybrid approach often works best: use deterministic steps for safety-critical operations and dynamic agents for creative or analytical tasks.