Diverse Teams in Generative AI Development: How Inclusion Cuts Bias

Mar 13, 2026


When a facial recognition system fails to see a young Black woman’s face but works perfectly for a white man, it’s not a glitch. It’s a team problem. The people who built that system didn’t see the world the way she does. And that’s why diversity isn’t just a nice-to-have in generative AI - it’s the only way to stop bias before it ships.

Generative AI doesn’t invent bias out of thin air. It learns it. From the data it’s fed. From the assumptions its creators make. From the blind spots in their lived experience. A 2018 study from MIT Media Lab found commercial facial analysis systems had error rates as high as 34.7% for darker-skinned women, compared with under 1% for lighter-skinned men. That’s not a technical limitation. That’s a human one. And the fix isn’t more code. It’s more voices.

Why Homogeneous Teams Build Biased AI

Imagine a team of five engineers. All men. All from similar backgrounds. All educated at the same handful of top universities. They train a hiring tool to scan resumes. They optimize for “best fit.” What happens? The system starts favoring names that sound familiar - names like John, Emily, or Michael. It penalizes candidates with names like Latoya, Mohammed, or Nguyen. Why? Because the team never asked: “Who are we leaving out?”

This isn’t hypothetical. A 2023 audit of a major AI recruiting platform found resume screening tools penalized candidates with traditionally Black names by 28%. The team had no Black engineers. No HR professionals with experience in equitable hiring. No one who’d ever been passed over for a job because of their name.

Harvard’s research confirms this pattern: young Black women aged 18-30 faced facial recognition error rates 34% higher than lighter-skinned males. Stanford found AI tools flagged non-native English speakers’ writing as “AI-generated” - even when it wasn’t. Why? Because the training data was built by people who spoke English as a first language. The system didn’t understand dialects, accents, or cultural writing styles. It saw difference as error.

These aren’t edge cases. They’re predictable outcomes. When your team looks the same, your AI sees the world the same way. And that means it fails the people who don’t fit that mold.

How Diverse Teams Fix AI Before It Breaks

Now imagine a different team. A woman who grew up in rural Guatemala. A Black data scientist from Detroit. A non-binary ethicist who worked in refugee resettlement. A 70-year-old veteran who’s seen three generations of tech change. They’re building the same hiring tool.

Within weeks, they spot problems the first team missed:

- The training data overrepresented resumes from Ivy League schools - ignoring skilled candidates from community colleges.

- The language filters were too strict on non-native English, mistaking clear, concise writing for “robotic” output.

- The scoring model rewarded “confidence” in interviews - a trait culturally coded as masculine, not universally accurate.

This team didn’t just add diversity. They added perspective. And that changed everything.

Generative Group AI’s analysis found diverse teams produce 1.7 times more innovation than homogeneous ones. Why? Because they challenge assumptions. They ask: “What if we’re wrong?” They catch bias before it’s baked into the model.

SAP’s SuccessFactors uses generative AI to help HR teams write inclusive job descriptions. The AI flags words like “rockstar” or “ninja” - terms that deter women and older workers from applying. It suggests alternatives like “collaborative” or “results-driven.” This tool wasn’t built by engineers alone. It was shaped by sociologists, DE&I specialists, and former recruiters who’d seen how language shaped hiring.
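To make the idea concrete, here is a minimal sketch of a term flagger. It is not how SAP's product works; it only illustrates the flag-and-suggest pattern described above. The word list is a hypothetical two-entry dictionary built from this article's own examples, and `flag_terms` is a name invented for illustration.

```python
import re

# Hypothetical word list for illustration only. The flagged terms and the
# suggested alternatives are the examples mentioned in the article.
FLAGGED_TERMS = {
    "rockstar": "collaborative",
    "ninja": "results-driven",
}


def flag_terms(job_description: str) -> list[tuple[str, str]]:
    """Return (flagged word, suggested alternative) pairs in order of appearance."""
    findings = []
    for match in re.finditer(r"[A-Za-z]+", job_description):
        word = match.group(0).lower()  # case-insensitive match
        if word in FLAGGED_TERMS:
            findings.append((word, FLAGGED_TERMS[word]))
    return findings


if __name__ == "__main__":
    text = "We need a coding ninja and a sales Rockstar."
    for word, suggestion in flag_terms(text):
        print(f"Flagged '{word}': consider '{suggestion}'")
```

A real word list is exactly where the sociologists and recruiters come in: the code is trivial, and deciding what belongs in the dictionary is not.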

Lenovo’s Product Diversity Office reduced product exclusion incidents by 63% in 2023. How? By embedding diverse voices in every stage - from early design to user testing. One team caught that a voice assistant didn’t recognize African American Vernacular English. Another realized a health app’s symptom checker ignored pain patterns common in Asian populations. These weren’t bugs. They were blind spots. And they were fixed before launch.

What Real Diversity Looks Like in AI Teams

Diversity isn’t a checkbox. It’s not just hiring one woman or one person of color and calling it done. Real diversity means:

- Gender: At least 30% women, as recommended by EU AI Ethics Guidelines. Right now, women make up only 26% of global AI professionals, according to UNESCO.

- Race and ethnicity: Proportional representation. Black professionals hold just 3.1% of technical roles at major tech firms, per the 2023 Tech Leavers Study.

- Experience: You need more than coders. You need ethicists, sociologists, linguists, disability advocates, and domain experts from healthcare, education, and criminal justice.

- Cultural background: Someone who grew up in Nairobi, Manila, or São Paulo brings different insights than someone from Silicon Valley.

And it’s not enough to just have these people on the team. You need to listen to them.

Dr. Rumman Chowdhury, formerly responsible AI lead at Accenture, put it bluntly: “Diversity alone isn’t sufficient - we need structured processes to ensure diverse voices are heard and integrated.”

That means:

- Inclusive meetings: No more “last to speak” syndrome. Use round-robin formats.

- Feedback channels: Anonymous systems where team members can flag bias without fear.

- Decision power: Diverse members must have real influence over model design, data selection, and testing criteria.

One Reddit user shared how their team changed after adding two female engineers and a cultural anthropologist. Over the previous 18 months, the all-male team had missed 17 bias issues. The expanded team found them in three weeks.

The Tools That Make Inclusion Work

Diversity without structure is noise. You need tools to turn intention into action.

IBM’s AI Fairness 360 and Google’s What-If Tool let developers test models for bias across demographic groups. You can ask: “How does accuracy drop for users over 65?” or “Does this loan approval model treat applicants from zip code 90210 differently than those from 90011?”
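The kind of question those tools answer can be sketched in a few lines of plain Python. This example does not use the AI Fairness 360 or What-If Tool APIs; it only shows the underlying idea of slicing accuracy by group. The group labels and toy records are made up for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, y_true, y_pred). Returns {group: accuracy}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        if y_true == y_pred:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


if __name__ == "__main__":
    # Toy data: (group, true label, predicted label). Not real results.
    records = [
        ("under_65", 1, 1), ("under_65", 0, 0),
        ("under_65", 1, 1), ("under_65", 0, 1),
        ("65_plus", 1, 0), ("65_plus", 0, 0),
    ]
    per_group = accuracy_by_group(records)
    print(per_group)
    print("gap:", accuracy_gap(per_group))
```

A single overall accuracy number would hide the gap entirely, which is why the per-group breakdown matters.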

SAP SuccessFactors also turns generative AI on team behavior itself. It analyzes meeting transcripts to spot who’s being interrupted, who’s not speaking, and whether feedback is evenly distributed. That’s not surveillance. It’s fairness engineering.

Model Cards, pioneered by Google in 2018, require teams to document: who built the model, what data was used, what biases were tested, and how performance varies across groups. Only 28% of major AI companies use them today - according to AlgorithmWatch’s 2024 report. That’s not progress. That’s negligence.
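A Model Card doesn't need special tooling to get started. Below is a minimal sketch, assuming a simple dataclass whose fields mirror the four items listed above. The class name, field names, and every value are placeholders invented for illustration, not an official Model Card schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Illustrative record; fields mirror the four items named in the text."""
    model_name: str
    built_by: list[str]            # who built the model
    training_data: list[str]       # what data was used
    bias_tests: list[str]          # what biases were tested
    performance_by_group: dict[str, float] = field(default_factory=dict)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    # All values below are placeholders, not real results.
    card = ModelCard(
        model_name="resume-screener-demo",
        built_by=["ML engineering", "HR domain experts", "ethics reviewer"],
        training_data=["placeholder: internal resume sample"],
        bias_tests=["accuracy by name group", "accuracy by school type"],
        performance_by_group={"group_a": 0.91, "group_b": 0.88},
    )
    print(card.to_json())
```

Checking a file like this into the repository next to the model makes gaps visible: an empty `bias_tests` list is hard to ignore in code review.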

And then there’s inclusive analytics - a new approach described in a 2024 study (PMC10950550). It doesn’t just measure output. It measures participation. Who’s asking questions? Who’s challenging assumptions? Who’s being ignored? This turns team dynamics into data - and makes inclusion measurable.
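Here is a rough sketch of what "turning team dynamics into data" could look like at its simplest: each person's share of the words spoken in a meeting. It is not the method from the study or from any product. It assumes a transcript already split into (speaker, utterance) turns, it does not detect interruptions, and all names are hypothetical.

```python
def participation_shares(turns, roster):
    """Share of total words spoken per person.

    turns:  list of (speaker, utterance) pairs, in meeting order.
    roster: everyone who attended, so silent attendees show up with 0.0.
    """
    words = {name: 0 for name in roster}
    for speaker, utterance in turns:
        words[speaker] = words.get(speaker, 0) + len(utterance.split())
    total = sum(words.values())
    return {name: (count / total if total else 0.0) for name, count in words.items()}


def silent_members(shares):
    """Attendees who never spoke."""
    return sorted(name for name, share in shares.items() if share == 0)


if __name__ == "__main__":
    # Hypothetical transcript for illustration.
    turns = [
        ("Ana", "I think the labels are off"),
        ("Ben", "Agreed"),
        ("Ana", "Let us recheck"),
    ]
    shares = participation_shares(turns, roster=["Ana", "Ben", "Chi"])
    print(shares)
    print("did not speak:", silent_members(shares))
```

Word share is a crude proxy. It says nothing about whose suggestions were acted on, so treat it as a prompt for a conversation, not a verdict.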

The Cost of Ignoring Diversity

There’s a real price to ignoring this.

A healthcare AI startup in 2024 shut down after its diagnostic tool showed 40% lower accuracy for Asian patients. Why? The team had no Asian members. No one thought to test for conditions like hemochromatosis, which presents differently in East Asian populations. The model flagged symptoms as “normal” - when they were early signs of disease. People died because no one on the team had lived it.

And then there’s “diversity theater.” A company hires a Black engineer, puts them on a poster, and calls it a win. But they’re not invited to key meetings. Their feedback is ignored. Their ideas are stolen. In one Trustpilot review, a company claimed diverse development - but had zero Black engineers. Their resume screener still penalized Black names by 28%. That’s not inclusion. It’s exploitation.

McKinsey’s 2023 survey found 37% of companies attempting diversity initiatives fell into this trap. They checked the box. They didn’t change the system.

Regulations Are Coming - And They’re Not Optional

The EU AI Act requires high-risk AI systems to demonstrate “appropriate levels of diversity in development teams” by 2025. New York City’s Local Law 144 mandates bias audits for hiring tools - and requires transparency in team composition.
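Bias audits of hiring tools commonly report an impact ratio: each group's selection rate divided by the rate of the most-selected group. The sketch below shows that arithmetic only. It is not the audit procedure of any specific law, the counts are invented, and the 0.8 cutoff is the widely cited "four-fifths" rule of thumb, used here as an assumption.

```python
def selection_rates(outcomes):
    """outcomes: {group: (selected, applicants)} -> {group: selection rate}."""
    return {group: selected / applicants
            for group, (selected, applicants) in outcomes.items()}


def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {group: rate / top for group, rate in rates.items()}


if __name__ == "__main__":
    # Invented counts for illustration: (selected, applicants).
    outcomes = {"group_a": (50, 100), "group_b": (30, 100)}
    THRESHOLD = 0.8  # assumption: four-fifths rule of thumb
    for group, ratio in impact_ratios(outcomes).items():
        status = "review" if ratio < THRESHOLD else "ok"
        print(f"{group}: impact ratio {ratio:.2f} ({status})")
```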

The White House’s Blueprint for an AI Bill of Rights (2022) includes “the right to protection against algorithmic discrimination.” Seventeen states have launched initiatives to enforce it.

68% of AI ethics experts predict that by 2027 regulators will mandate minimum diversity thresholds for AI teams. This isn’t coming. It’s here.

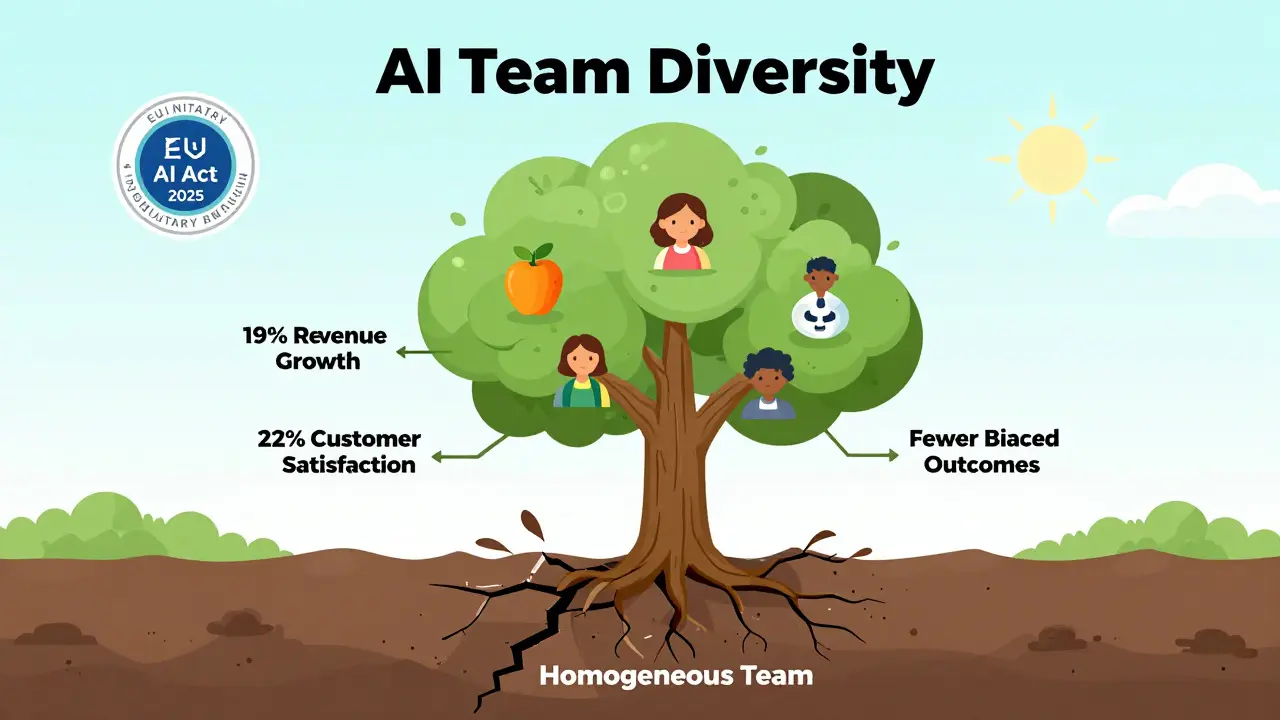

And the business case is clear: companies with diverse AI teams report 19% higher revenue growth, according to BCG’s 2024 analysis. They also score 22% higher in customer satisfaction, per Forrester.

Diversity isn’t charity. It’s competitive advantage.

How to Start Building a Diverse AI Team Today

You don’t need to wait for a policy change. You don’t need a billion-dollar budget. You just need to start.

- Do a team audit. Compare your team’s demographics to national labor data. Are women, Black, Latino, or disabled engineers underrepresented? Don’t guess. Measure.

- Expand your hiring pool. Stop recruiting only from Stanford, MIT, or Carnegie Mellon. Look at HBCUs. Community colleges. Bootcamps. Non-traditional paths.

- Bring in non-engineers. Hire an ethicist. A sociologist. A disability advocate. They don’t need to code. They need to ask: “Who does this hurt?”

- Train your team. Require 16+ hours of unconscious bias and cultural competency training. The IEEE recommends it. So should you.

- Use bias testing tools. Run your models through AI Fairness 360 or What-If Tool. Test across age, gender, race, language, and disability.

- Document everything. Build a Model Card. List your team’s composition. The data sources. The bias tests. The fixes. Transparency isn’t optional anymore.
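The first step, the team audit, is simple arithmetic. A minimal sketch, assuming you already have headcounts per group and a benchmark share for each: the function name is invented for illustration, and the benchmark numbers below are placeholders, not real labor statistics.

```python
def representation_gaps(team_size, group_counts, benchmark_shares):
    """Team share minus benchmark share for each benchmarked group.

    Negative values mean the group is underrepresented relative to the
    benchmark. Groups may overlap, so team_size is passed explicitly.
    """
    if team_size <= 0:
        raise ValueError("team_size must be positive")
    return {
        group: group_counts.get(group, 0) / team_size - benchmark
        for group, benchmark in benchmark_shares.items()
    }


if __name__ == "__main__":
    # Placeholder numbers for illustration; substitute your own labor data.
    gaps = representation_gaps(
        team_size=20,
        group_counts={"women": 4, "disabled": 0},
        benchmark_shares={"women": 0.5, "disabled": 0.1},
    )
    for group, gap in sorted(gaps.items(), key=lambda item: item[1]):
        print(f"{group}: {gap:+.0%} vs benchmark")
```

Small teams make these percentages jumpy, so read them as a starting point for questions, not as a score.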

It takes 6-12 months to make real change. But the first step? It takes five minutes. Ask your team: “Who didn’t we think about?”

Frequently Asked Questions

Why can’t we just fix bias with better data?

Better data helps, but it’s not enough. Bias isn’t just in the data - it’s in the questions we ask. If your team is homogeneous, you’ll miss what data to collect, how to label it, and what outcomes matter. A diverse team asks: “Are we measuring the right things?” and “Who are we excluding by accident?” That’s why diversity in the team is the first line of defense.

Isn’t it harder to manage diverse teams?

It can feel harder at first - different communication styles, perspectives, and priorities can create friction. But that friction is productive. It forces you to explain assumptions, challenge norms, and build stronger solutions. Homogeneous teams agree quickly. Diverse teams build smarter. The long-term result? Fewer mistakes, better products, and faster innovation.

What if we can’t find diverse candidates?

You’re not looking in the right places. Most companies recruit from the same 10 schools or tech hubs. Expand your search. Partner with organizations like Black Girls Code, Out in Tech, or the National Society of Black Engineers. Attend job fairs at HBCUs. Offer internships to non-traditional candidates. Diversity isn’t a pipeline problem - it’s a recruitment problem.

Can AI itself help build diverse teams?

Yes - but only if it’s built by diverse teams. Tools like SAP SuccessFactors can analyze team dynamics, flag bias in job postings, and recommend inclusive language. But if the AI was trained by a homogeneous team, it might reinforce bias instead of fixing it. The key is using AI as a tool - not a replacement - for human judgment and diverse input.

Is this just about ethics, or is there a business reason?

It’s both. Ethically, biased AI harms people. But financially, it also loses money. Companies with diverse AI teams see 19% higher revenue growth. They get 22% higher customer satisfaction. They avoid costly lawsuits, regulatory fines, and brand damage. Diversity isn’t a cost center - it’s a growth engine.
