Evaluating Reasoning Models: Think Tokens, Steps, and Accuracy Tradeoffs

Mar, 11 2026

Mar, 11 2026

When you ask a reasoning model a complex question-like "What are the implications of a 15% interest rate hike on mid-cap tech startups over 18 months?"-it doesn’t just spit out an answer. It pauses. It thinks. It generates dozens, sometimes hundreds, of hidden tokens: internal steps, scratchpad logic, false starts, and corrections. This is the promise of Large Reasoning Models (LRMs): better answers by thinking out loud. But here’s the catch: every extra thought costs money, time, and energy. And sometimes, it doesn’t even help.

What Are Reasoning Tokens, Really?

Reasoning tokens aren’t visible to you. They’re the internal chatter a model generates before giving you a final answer. Think of them like a student solving a math problem on scratch paper. You only see the final answer, but the teacher grades the work. These tokens are the model’s scratch paper. OpenAI’s o1 and o3 models, Anthropic’s Claude 3.7, and Qwen2.5-14B-Instruct all use them. The idea is simple: break hard problems into smaller steps, reason through each one, then combine the results.

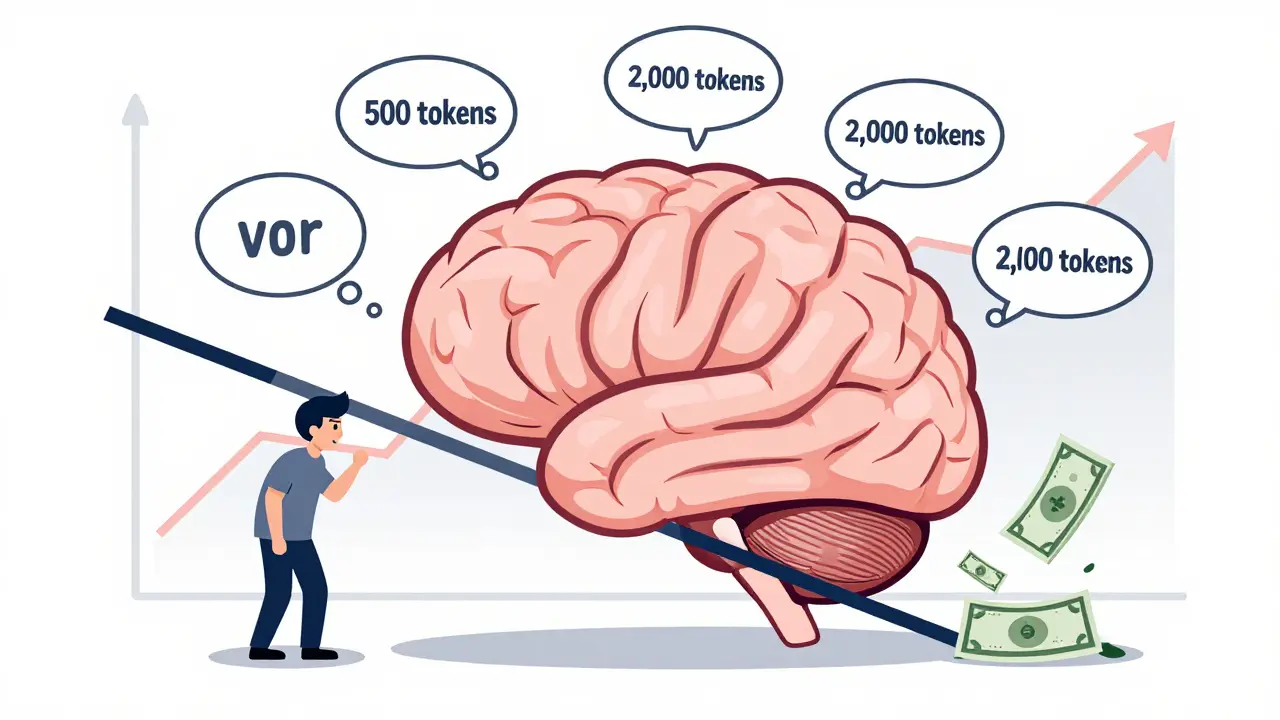

But here’s the data: for a single complex question, a standard model might use 500 tokens. A reasoning model? It often uses 1,500 to 2,000. That’s 3 to 5 times more. According to OpenAI’s API docs (2024), this extra token usage isn’t optional-it’s baked into the architecture. The model needs space to simulate thinking. And it’s not just OpenAI. Qwen2.5-14B-Instruct generates 1,200-1,800 reasoning tokens on the GPQA benchmark, a test designed to measure deep reasoning in science and technical domains.

Accuracy Gains? Yes. But Only Up to a Point

Do these extra tokens actually improve answers? Yes-but only for certain types of problems.

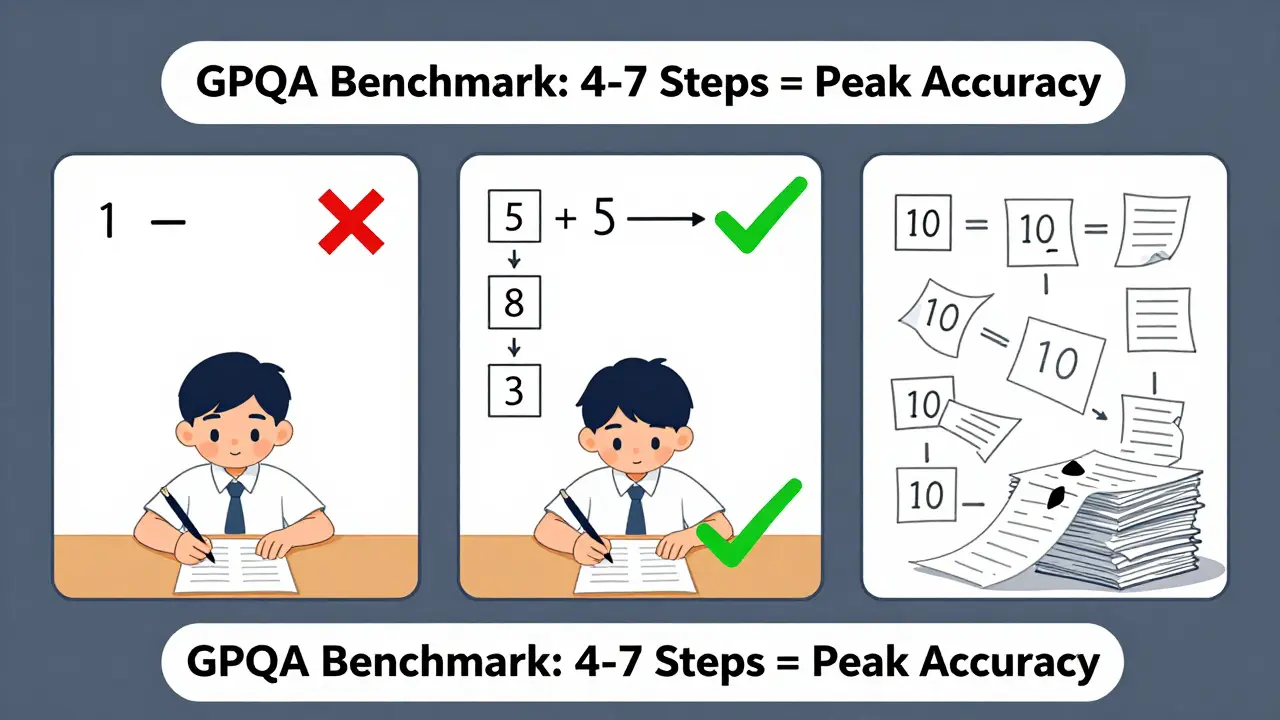

On simple tasks-like identifying the subject of a sentence or summarizing a short paragraph-reasoning models actually perform worse. Why? Because the extra steps introduce noise. Apple’s research (August 2024) found that for tasks requiring fewer than three logical steps, standard models beat reasoning models by 4.7% to 8.2% in accuracy. The model overthinks.

Where reasoning shines is in medium-complexity tasks: 4 to 7 steps. On GPQA, Qwen2.5-14B-Instruct jumps from 38.2% accuracy without reasoning to 47.3% with it. That’s a 9.1-point gain. OpenAI’s o3 model shows similar gains on medical diagnostics and legal reasoning benchmarks. But here’s the twist: beyond 7 steps, both standard and reasoning models collapse. Apple’s team found that when a problem requires 8 or more logical steps, accuracy drops below 5%-no matter how many tokens you throw at it. The model just gives up.

The Hidden Cost: Money, Latency, and Waste

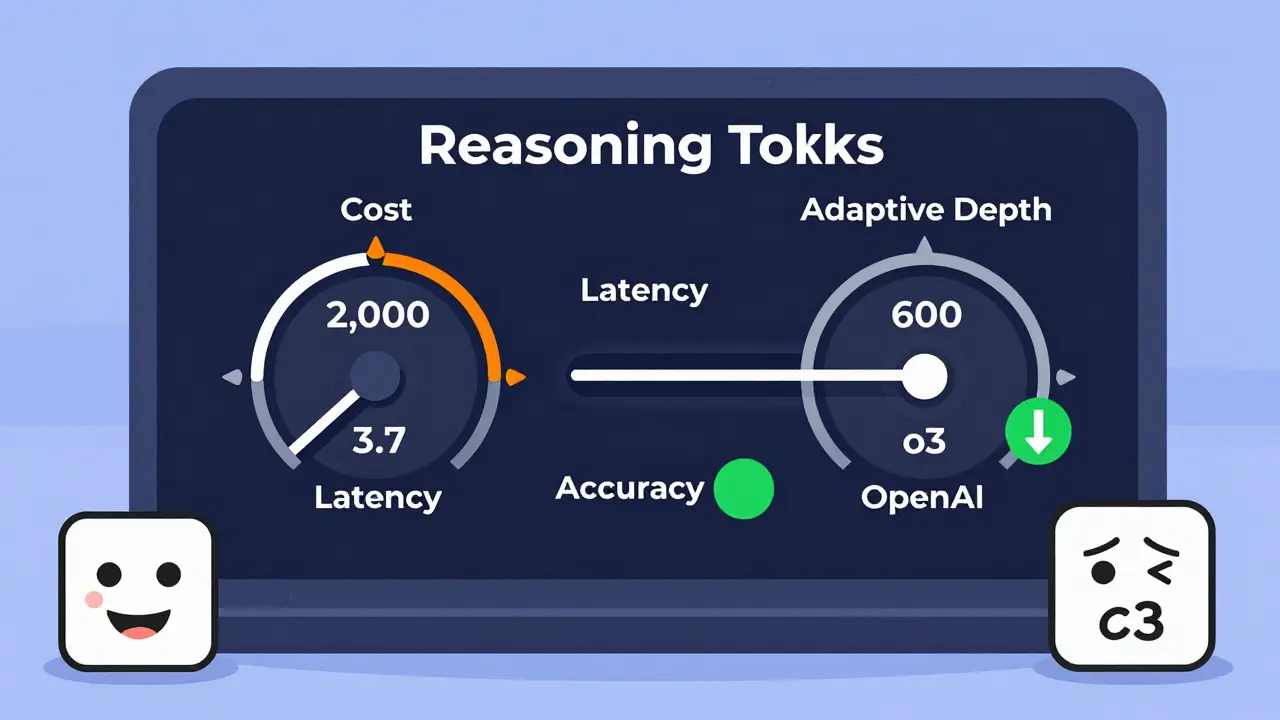

Every reasoning token costs money. OpenAI charges $0.015 per 1,000 reasoning tokens. Standard GPT-4? $0.003 per 1,000 tokens for the same output. That’s a 5x price jump just to get a slightly better answer. One developer on Reddit reported their monthly API bill jumped from $1,200 to $6,800 after switching to reasoning mode for financial analysis. That’s not sustainable for most startups.

Latency is another issue. Reasoning models don’t just take longer to answer-they spike unpredictably. One healthcare AI system saw inference times jump from 1.2 seconds to 8.7 seconds during peak hours. That breaks SLAs. Users get frustrated. Systems time out.

And it gets worse. Fine-tuning a model with reasoning traces doesn’t always help. A GitHub user found that applying reasoning fine-tuning to a standard Llama-3 model actually dropped accuracy by 12%. The model wasn’t trained for this style of thinking. It’s like teaching a sprinter to run a marathon using a different stride-they slow down, not speed up.

Efficiency Is the New Frontier

Here’s the good news: we’re getting smarter about this. The biggest breakthrough isn’t more tokens-it’s fewer.

Conditional Token Selection (CTS), developed by Zhang et al. (2025), cuts reasoning token usage by 75.8% with only a 5% drop in accuracy on GPQA. That’s huge. It works by identifying which tokens actually matter. Most of the reasoning chain is noise. CTS learns to skip the fluff. One company using CTS on Qwen2.5-14B-Instruct reduced costs by 42% while keeping 96% of the accuracy gain.

OpenAI is moving in this direction too. Their upcoming o4 model will use adaptive reasoning depth-dynamically adjusting how many tokens it uses based on how hard the question is. No point in using 2,000 tokens for a simple yes/no question.

And then there’s the human factor. A LessWrong analysis found that 50% of OpenAI’s reasoning chains were rated "largely inscrutable" by human reviewers. Claude 3.7? Only 15%. Qwen? 28%. The better models don’t just generate more tokens-they generate clearer ones. Structure matters. Clarity matters. More tokens ≠ better thinking.

Who Should Use Reasoning Models?

Not everyone. Here’s who benefits:

- Financial services: Risk modeling, fraud detection, portfolio stress tests. 78% of Fortune 500 firms use them here.

- Pharmaceuticals: Drug discovery pathways, molecular interaction predictions. 63% adoption rate.

- Legal tech: Case law analysis, precedent mapping. 41% of platforms use reasoning models.

Who shouldn’t? Small businesses. Content moderation. Customer service chatbots. If your task takes less than 4 steps, you’re paying for overkill. One survey found that 82% of users worry about cost, and 41% have already implemented token compression techniques to cut expenses.

The Bigger Question: Are We Just Simulating Thinking?

Dr. Michael Wooldridge from Oxford put it bluntly: "These models don’t reason. They pattern-match. The reasoning chain is a steganographic artifact-something that emerged from training, not from true logic."

Think of it like a chess engine. It doesn’t "understand" chess. It simulates millions of moves and picks the best one. Reasoning models do the same. They’ve learned that when they generate a chain of "thoughts," the final answer is more likely to be right. It’s correlation, not causation.

Dr. Jane Chen at Stanford HAI adds: "80% of the accuracy gains come from the first 25% of the reasoning tokens." That means 75% of the tokens you’re paying for are doing almost nothing. You’re buying a Ferrari to drive to the grocery store.

Practical Tips for Implementation

If you’re thinking about deploying reasoning models, here’s what actually works:

- Start small. Test on 100 medium-complexity tasks. Measure accuracy gain versus token cost.

- Use dynamic budgeting. Don’t use 2,000 tokens for every question. Set thresholds: if the question is short and factual, use 500. If it’s multi-step, allow 1,500.

- Try CTS. Even if you’re using OpenAI, you can pre-process prompts to reduce token waste. Tools like Refuel.ai’s SDK help identify critical reasoning paths.

- Monitor latency. Build fallbacks. If a reasoning request takes over 5 seconds, drop back to a standard model.

- Don’t fine-tune standard models. Only use reasoning fine-tuning on models explicitly trained for it.

One healthcare AI team reduced diagnostic errors by 37% for rare conditions by using reasoning models-but only after they cut token usage by 60% with dynamic budgeting. They didn’t add more power. They used less, smarter.

What’s Next?

By 2026, Gartner predicts 80% of enterprise reasoning deployments will use token compression. The race isn’t about bigger models. It’s about leaner thinking.

The future isn’t more tokens. It’s smarter tokens. Better structure. Adaptive depth. And maybe, just maybe, models that know when to stop thinking.

Do reasoning models always improve accuracy?

No. For simple tasks-those requiring fewer than three logical steps-reasoning models often perform worse than standard models. They introduce noise, not clarity. Accuracy gains only appear reliably in medium-complexity tasks (4-7 steps). Beyond 7 steps, both types of models collapse, with accuracy dropping below 5%.

How much more expensive are reasoning models?

Reasoning models can cost 5 times more than standard models for the same output. OpenAI charges $0.015 per 1,000 reasoning tokens, compared to $0.003 for standard GPT-4. A single complex query might use 1,500-2,000 tokens instead of 500, multiplying costs. One company saw monthly API costs jump from $1,200 to $6,800 after switching to reasoning mode.

Can I reduce reasoning token usage without losing accuracy?

Yes. Conditional Token Selection (CTS), developed by Zhang et al., reduces reasoning tokens by 75.8% with only a 5% accuracy drop on GPQA. Other techniques include dynamic token budgeting-limiting token use based on task complexity-and using reference models to identify which tokens actually matter. Companies using these methods report 30-45% cost savings while keeping 95% of accuracy gains.

Are reasoning models worth it for small businesses?

Usually not. Only 22% of small-to-medium businesses use reasoning models, despite 68% recognizing their potential. The cost and complexity outweigh the benefits unless you’re doing high-stakes, multi-step tasks like risk analysis, drug discovery, or legal precedent mapping. For customer service, content moderation, or simple Q&A, standard models are faster and cheaper.

Why do some reasoning chains look nonsensical?

Because they’re not human reasoning-they’re learned patterns. OpenAI’s o3 model produces reasoning chains that 50% of human evaluators found "largely inscrutable." The model has learned that generating long, structured chains correlates with higher accuracy, but the content isn’t always logically coherent. Models like Claude 3.7 and Qwen2.5-14B-Instruct produce clearer chains because they were trained differently. Clarity matters more than length.

What’s the future of reasoning models?

The future is efficiency, not scale. OpenAI’s upcoming o4 model will adjust reasoning depth automatically based on task complexity. Gartner predicts that by 2026, 80% of enterprise deployments will use token compression techniques like CTS. The goal isn’t to think harder-it’s to think smarter, using only what’s necessary.

Bhavishya Kumar

March 11, 2026 AT 15:42Reasoning tokens are not a feature; they are a diagnostic artifact of model architecture. The notion that more internal steps equate to better reasoning is a fallacy rooted in anthropomorphism. The model does not think-it computes. The tokens are statistical byproducts, not cognitive processes. We mistake correlation for causation, and then we pay for it in API costs.

When Apple's research shows standard models outperform reasoning models on tasks under three steps, it is not a bug-it is a feature. The system is working as intended. The overengineering of LLMs with verbose internal chains is a symptom of academic prestige inflation, not engineering excellence.

Conditional Token Selection (CTS) is the only rational path forward. If 75% of the reasoning chain is noise, why train the model to generate it? Why not prune the noise at the input layer? The future belongs to models that know when not to think, not those that think louder.

Let us stop calling this 'reasoning.' It is pattern interpolation with metadata. We are not building minds. We are building echo chambers of token sequences. The cost is not just monetary-it is epistemological.

ujjwal fouzdar

March 11, 2026 AT 22:40Bro, we are living in the simulation. Every time a model generates 2000 tokens to answer a question about a 15% interest rate hike, it’s not thinking-it’s performing a ritual. Like a priest chanting Sanskrit mantras to summon rain. The tokens are incantations. The accuracy gain? That’s the placebo effect.

And then we pay $0.015 per 1000 tokens like it’s holy water. Meanwhile, the model doesn’t even know what ‘interest rate’ means. It just knows that when it writes ‘therefore,’ ‘however,’ and ‘in conclusion,’ the human says ‘good job.’

Dr. Wooldridge is right. This isn’t reasoning. It’s theater. And we’re all paying for front-row seats to a Shakespearean tragedy where the protagonist is a neural net with a credit card.

What if the real breakthrough isn’t smarter tokens… but smarter humans? Who stop believing the machine is thinking?

Anand Pandit

March 12, 2026 AT 14:17This is such a well-researched and balanced breakdown. I love how you highlight that reasoning models aren’t universally better-they’re contextually better. That’s the key insight so many miss.

I’ve seen teams waste months trying to force reasoning models into customer service chatbots, only to realize their users just wanted quick answers, not a PhD thesis. The latency spikes alone killed their NPS scores.

But when used right? In drug discovery or risk modeling? The gains are real. The trick is matching the tool to the task. Start small. Test. Measure. Don’t just turn on reasoning mode because it’s trendy.

And CTS? That’s the quiet revolution. We’re moving from ‘more is better’ to ‘less is more.’ That’s smart engineering.

Thanks for this. It’s exactly the kind of clarity the field needs right now.

Reshma Jose

March 13, 2026 AT 18:52Ok but like… why are we still arguing about this? We know the answer. If you’re a startup trying to survive, you don’t use 2000-token chains for every query. You use dynamic budgeting. You set limits. You fallback. You optimize.

And if your CFO is freaking out because your API bill jumped 5x? That’s not a model problem. That’s a decision problem. You chose wrong. Not the model.

I run a small SaaS. We use reasoning models only for our risk engine. Everything else? Standard GPT-4. Cost dropped 60%. User satisfaction stayed the same. Win-win.

Stop overcomplicating. Just use less. It works.

rahul shrimali

March 14, 2026 AT 19:13Reasoning models are overrated. Simple tasks need simple answers. Stop paying for fluff. Use dynamic budgeting. Cut the waste. Save money. Move fast. Done.

Eka Prabha

March 16, 2026 AT 03:39Let me ask you something. Who benefits from this reasoning token economy? Not the end user. Not the startup. Not even the developer. The beneficiaries are the cloud providers. The model vendors. The consultants selling ‘LLM optimization packages.’

This entire paradigm was engineered to lock enterprises into proprietary APIs. You think Qwen and Claude are competing on accuracy? No. They’re competing on how many tokens they can make you consume. It’s a consumption tax disguised as innovation.

And don’t get me started on fine-tuning. You think applying reasoning traces to Llama-3 was a technical decision? It was a deliberate sabotage. They want you to fail so you’ll buy their ‘premium’ models.

80% of the accuracy gain comes from the first 25% of tokens? That’s not a coincidence. That’s a trap. They know you’ll keep paying for the rest. It’s not AI. It’s a Ponzi scheme with transformers.

Bharat Patel

March 16, 2026 AT 23:23There’s a deeper question here: What does it mean to ‘think’? If a model generates a chain of reasoning that leads to a correct answer, does it matter if the chain is synthetic? Is the value in the truth of the output, or the authenticity of the process?

We treat reasoning tokens like they’re evidence of consciousness. But they’re not. They’re echoes. Reflections. The model has learned that humans reward structured outputs. So it mimics structure. It doesn’t understand. It performs.

And yet-we still trust it. We still pay for it. We still call it ‘thinking.’

Perhaps the real insight isn’t about tokens at all. It’s about us. Our hunger to see intelligence in the machine. Our need to believe that the algorithm is more than a mirror.

Maybe the model isn’t simulating thought. Maybe it’s reflecting our own illusions back at us.

Bhagyashri Zokarkar

March 17, 2026 AT 06:29i just dont get why ppl are so obsessed with these tokens like its some kind of magic dust or something?? like i ask a model a question and it takes 8 seconds to answer and then gives me something that sounds like a textbook but makes no sense?? like why?? i just want an answer not a 10 paragraph essay written by a confused grad student who drank too much coffee

and then they say oh but we fine tuned it and now its better?? no its not. its just slower and more expensive. my boss is like oh this is cutting edge innovation and i’m like we’re paying 6k a month to make our chatbot sound like it’s having an existential crisis

the only thing that matters is if it works. if it answers correctly in under 1 second for 95% of queries then why even bother with the rest?? its like buying a ferrari to drive to the corner store and then wondering why your gas bill is so high

and dont even get me started on cts. sounds like buzzword bingo. who even wrote this paper?? some guy in a lab with 12 monitors and no real users??

we need to stop pretending this is science and admit its just corporate theater

Rakesh Dorwal

March 17, 2026 AT 11:04Let’s be real. The West is selling us this narrative that more tokens = better AI. But what if the truth is simpler? What if the real breakthroughs are happening in places like China, where they’re not wasting tokens on theater? What if Qwen2.5 is winning because they cut the fat and focused on efficiency?

And don’t tell me OpenAI is leading innovation. Their models are bloated, expensive, and opaque. Meanwhile, Indian startups are using dynamic budgeting and CTS to deliver 90% accuracy at 20% cost. We’re not lagging. We’re leading.

Stop idolizing Western AI. It’s a luxury product. We need practical, scalable, affordable AI. And we’re building it.

Think smarter. Not louder.