Multimodal AI Evolution: How 3D, Haptics, and Sensor Fusion Are Changing Tech in 2026

May, 10 2026

May, 10 2026

Remember when you had to upload a photo to one app to identify it, then copy the text description into another tool to translate it? That disjointed workflow is dead. In 2026, artificial intelligence doesn't just read text or see images-it experiences them together. We are witnessing the shift from "late fusion" systems, which stitched data types together at the end, to true multimodal AI, which processes text, vision, audio, and physical sensor data in a single, unified mathematical space. This isn't just a software update; it is a fundamental architectural revolution that allows machines to understand context the way humans do-by combining sight, sound, touch, and spatial awareness simultaneously.

The difference between old and new systems is stark. Previously, if you showed an AI a diagram and asked a question, the system used separate encoders for the image and the text, merging their outputs only at the very end. Researchers called this the "illusion of multimodality." Today’s leading models, like OpenAI’s GPT-4o and Meta’s Llama 4 series, use unified tokenization. This means images, sounds, and even haptic signals are converted into the same type of tokens as words. The model learns the connections between these modalities from the ground up, allowing it to reason about visual puzzles, catch errors in diagrams, and generate coherent responses based on complex, mixed inputs.

The Architecture Shift: From Late Fusion to Unified Spaces

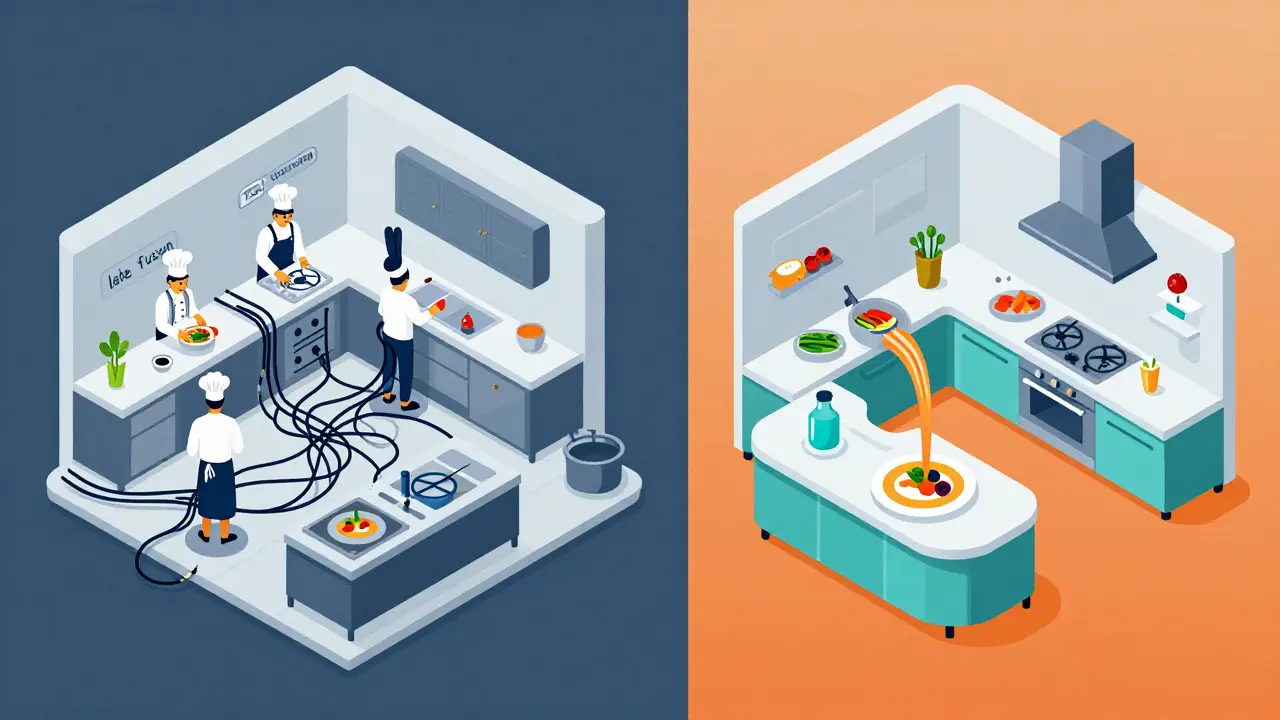

To understand why this matters, you have to look under the hood. For years, the industry relied on late fusion architectures. Think of it like a restaurant where the chef prepares the appetizer, the main course, and the dessert in completely different kitchens, only plating them together at the last second. Each component was optimized separately, but they never truly interacted during preparation.

Unified multimodal systems change the kitchen entirely. Instead of separate pipelines, all data types move through shared transformer layers. This requires dVAE (discrete Vector Quantized Variational Autoencoder) techniques to tokenize images and audio effectively. By forcing the model to reconstruct multiple modalities jointly during training, researchers ensure the AI captures shared structures across input types. The result is richer cross-modal representations. When vision is part of the pretraining objective rather than an add-on adapter, the model develops a deeper understanding of how objects relate to language and action.

| Feature | Late Fusion (Pre-2025) | Unified Multimodal (2026+) |

|---|---|---|

| Data Processing | Separate encoders for each modality | Shared transformer layers for all data types |

| Tokenization | Modality-specific tokens | Unified tokenization scheme |

| Reasoning Capability | Limited cross-modal inference | Deep contextual reasoning across inputs |

| Efficiency | High computational overhead for fusion networks | More efficient via mixture-of-experts models |

This architectural shift enables mixture-of-experts (MoE) models to handle diverse data without excessive computing power. By activating only the relevant neural pathways for specific tasks, these systems remain fast and cost-effective while delivering profound accuracy. Companies like Meta released models such as Llama 4 Scout and Llama 4 Maverick in late 2025 specifically to address this need for efficient, multi-modal processing.

Beyond Screens: Integrating 3D and Spatial Awareness

Text and images are flat. The real world is not. The next frontier for generative AI is 3D generation and spatial understanding. Traditional computer vision could identify objects in a 2D frame, but it struggled with depth, volume, and physical interaction. Modern multimodal models are now trained to understand three-dimensional space directly.

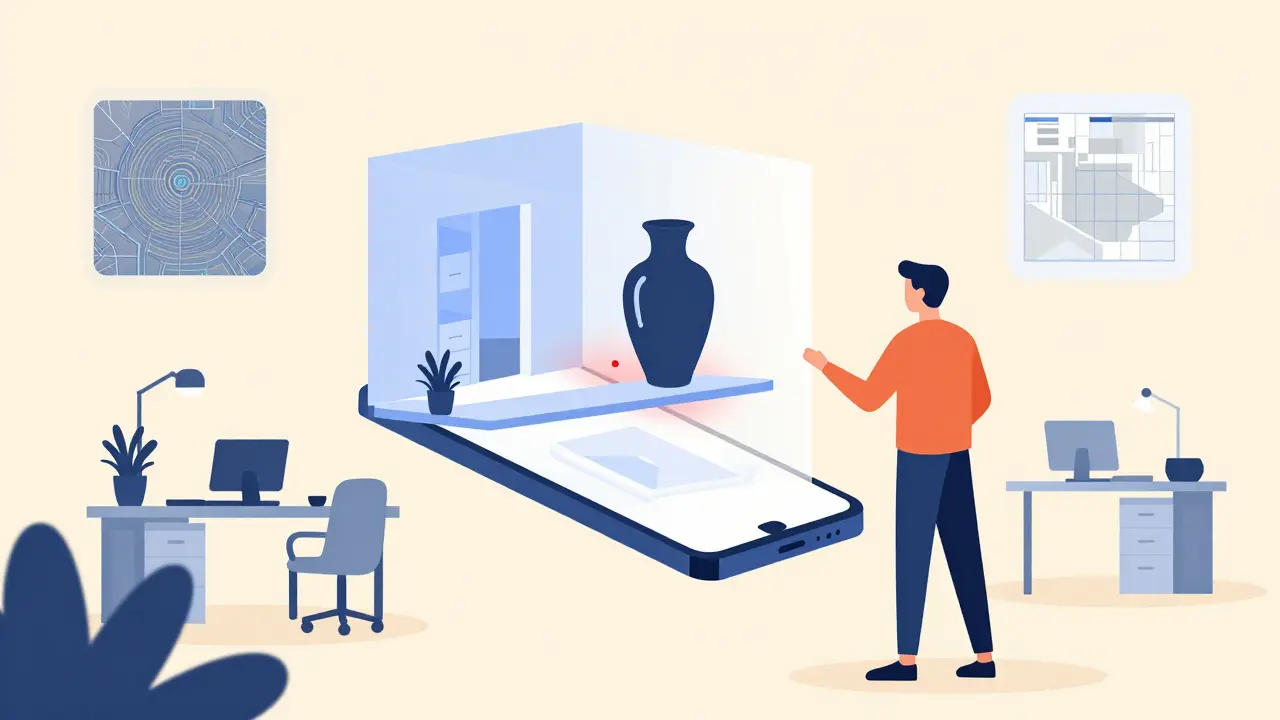

This capability transforms industries like architecture, gaming, and robotics. An AI can now take a textual description of a room, analyze existing structural blueprints, and generate a fully navigable 3D environment. More importantly, it understands physics. If you ask the AI to place a heavy vase on a thin shelf in its generated scene, it can predict whether the shelf will break. This requires the model to fuse visual data with implicit knowledge of material properties and gravity-a hallmark of true multimodal reasoning.

For developers, this means moving away from manual asset creation. Tools leveraging these models allow users to build digital twins of physical spaces by simply walking through them with a smartphone. The AI fuses camera feeds, LiDAR data, and motion sensors to create precise 3D maps instantly. This level of integration reduces development time for augmented reality (AR) applications by orders of magnitude.

Haptics: Adding Touch to the Digital Conversation

We have spent decades refining how AI sees and hears us. Now, we are teaching it to feel. Haptic feedback is no longer just about vibrating your phone when you type. In the context of multimodal AI, haptics represent a critical sensory input and output channel.

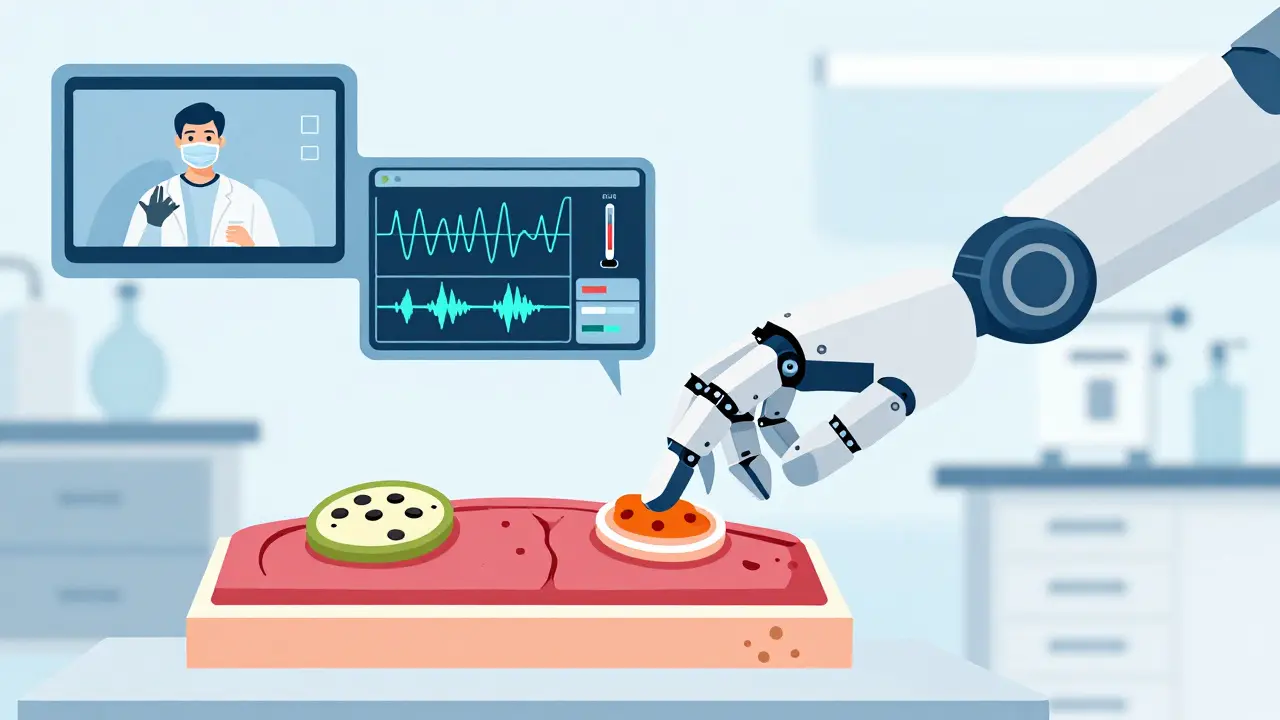

Imagine a surgeon using robotic arms to perform remote surgery. The AI doesn't just show the surgeon what the tissue looks like; it translates the tactile resistance of the tissue into haptic signals sent back to the surgeon's gloves. The AI processes video, force sensor data, and historical medical records simultaneously to provide real-time guidance. If the tissue feels abnormal, the system alerts the surgeon before visual cues become apparent.

In consumer tech, haptics enable more intuitive interactions. A virtual keyboard can simulate the distinct click of mechanical keys, or a navigation app can guide you through a dark building by sending directional pulses to your wrist. For generative AI, this opens up new creative possibilities. Designers can "feel" the texture of a digitally generated fabric or the weight of a virtual prototype, allowing for more refined product development cycles.

Sensor Fusion: The Brain of the IoT Era

If haptics bring touch to AI, sensor fusion brings environmental awareness. We are entering the "Sensor 4.0" age, where AI systems integrate data from Internet of Things (IoT) devices, chemical sensors, and industrial monitors. This goes far beyond traditional modalities.

Consider a smart factory. Temperature sensors, vibration detectors, and acoustic monitors stream data continuously. A late-fusion system might flag a high temperature or unusual noise independently. A unified multimodal AI, however, correlates these signals. It recognizes that a specific vibration pattern combined with a slight temperature rise indicates bearing failure weeks before it happens. This predictive maintenance saves millions in downtime.

Autonomous vehicles rely heavily on this principle. They don't just "see" the road; they fuse LiDAR point clouds, radar returns, camera feeds, and GPS data to build a comprehensive understanding of their surroundings. The AI must process these disparate inputs in real-time to make split-second decisions. As these models become more unified, they become safer and more reliable, reducing the cognitive load on human operators.

Market Growth and Commercial Reality

This isn't theoretical speculation. The market is moving fast. According to Grand View Research, the international multimodal AI market was valued at $1.73 billion in 2024 and is projected to reach $10.89 billion by 2030. This represents a compound annual growth rate (CAGR) of 36.8%. Why such explosive growth?

Organizations are tired of siloed data. They want systems that can process emails, analyze attached videos, listen to recorded meetings, and interpret sensor logs from their operations-all in one go. Zoom, for example, uses multimodal AI to enrich virtual meetings by analyzing audio prompts and visual input simultaneously, providing real-time transcription, sentiment analysis, and actionable summaries.

The competition is fierce. Google’s Gemini models demonstrated early on that multimodal capability could fit on-device, proving that unified processing isn't restricted to massive server farms. Apple and Microsoft are integrating similar capabilities into their operating systems, making multimodal interaction a standard feature rather than a niche experiment. For businesses, ignoring this trend means falling behind in efficiency and customer experience.

Challenges and Ethical Considerations

With great power comes great responsibility. Unified multimodal systems raise significant privacy and security concerns. If an AI can process everything you see, hear, and touch, who owns that data? The risk of deepfakes becomes exponentially higher when AI can generate synchronized video, audio, and even simulated haptic feedback.

Furthermore, there is the issue of bias. If a model is trained on biased datasets across multiple modalities, it can perpetuate stereotypes in subtle ways. For instance, a hiring tool that analyzes video interviews might unfairly penalize candidates based on non-verbal cues that correlate with cultural background rather than competence. Developers must implement rigorous auditing processes to ensure fairness across all input types.

Computational costs remain a barrier for smaller organizations. While mixture-of-experts models help, training and running unified multimodal systems still require significant resources. Cloud providers are responding by offering specialized AI chips optimized for these workloads, but access remains uneven.

What This Means for You

Whether you are a developer, a business leader, or a curious user, the rise of multimodal AI changes how you interact with technology. For developers, learning frameworks that support unified tokenization and sensor integration is essential. Python libraries like PyTorch and TensorFlow are evolving to better support these architectures.

For businesses, the opportunity lies in creating seamless customer experiences. Imagine a support chatbot that can see your broken appliance via your camera, hear the grinding noise you record, and feel the vibration patterns from your phone's accelerometer. It can diagnose the problem faster than any human technician. This level of service is becoming possible today.

The evolution from single-modality systems to unified multimodal architectures is not just a technical upgrade. It is a qualitative shift in how machines perceive and interact with the world. As we move further into 2026, expect AI to become less like a tool you command and more like a partner that understands context, nuance, and the physical world around you.

What is the difference between late fusion and unified multimodal AI?

Late fusion processes different data types (like text and images) separately using specialized encoders and combines them only at the end of the pipeline. Unified multimodal AI converts all data types into a shared mathematical space using unified tokenization, allowing the model to learn connections between modalities from the ground up through shared transformer layers.

How does sensor fusion improve AI decision-making?

Sensor fusion integrates data from multiple sources like LiDAR, cameras, radar, and IoT sensors into a single representation. This allows AI to detect complex patterns that individual sensors might miss. For example, combining vibration and temperature data can predict machinery failure earlier than either metric alone.

Can multimodal AI really understand 3D space?

Yes. Modern multimodal models are trained to understand depth, volume, and physical relationships. They can generate 3D environments from text descriptions and apply physics-based reasoning, such as predicting if an object will fall based on its placement and weight.

What role does haptic feedback play in generative AI?

Haptics add a tactile dimension to AI interactions. In professional settings, it allows for precise remote manipulation, like in robotic surgery. In consumer tech, it enhances user experience by simulating textures and physical responses, making digital interactions more intuitive and immersive.

Is unified multimodal AI available for small businesses?

While training these models requires significant resources, accessing them is becoming easier. Cloud providers offer APIs for multimodal processing, and models like Gemini Nano demonstrate that on-device multimodal capabilities are feasible. Small businesses can leverage these tools through third-party applications without building infrastructure from scratch.