Open-Source Trajectory in Generative AI: How Community Models Are Reshaping Governance and Access

Mar, 23 2026

The rise of open-source generative AI isn’t just about free models; it’s about who gets to control the future of artificial intelligence. Five years ago, cutting-edge AI was locked behind paywalls, corporate NDAs, and opaque training data. Today, open-source generative AI is the backbone of innovation for startups, researchers, and even hobbyists running models on laptops. But with this power comes complexity: licensing confusion, hardware demands, and fragmented governance. What’s really happening beneath the surface? And why does it matter for anyone using AI today?

How Open-Source Models Changed Everything

Before Meta released LLaMA in early 2023, most people thought high-performance AI required billions in funding and a team of PhDs. Then came the shift. Suddenly, anyone could download a model, tweak it, and run it locally. The domino effect was immediate. By 2025, over 68 major open-source projects were actively maintained under the Linux Foundation AI & Data umbrella alone. More than 100,000 developers from 3,000 companies were contributing. This wasn’t just open source; it was a movement.

The real game-changer? Accessibility. An 8B-parameter LLaMA 3 model can now be up and running on a MacBook Pro M2 in under five minutes using tools like Ollama. That’s not a demo; it’s what real users report on Reddit’s r/LocalLLaMA, a community of 487,000 members. For comparison, just three years ago, running any serious model required a server with multiple high-end GPUs. Now, it’s a matter of downloading an app and typing a prompt.

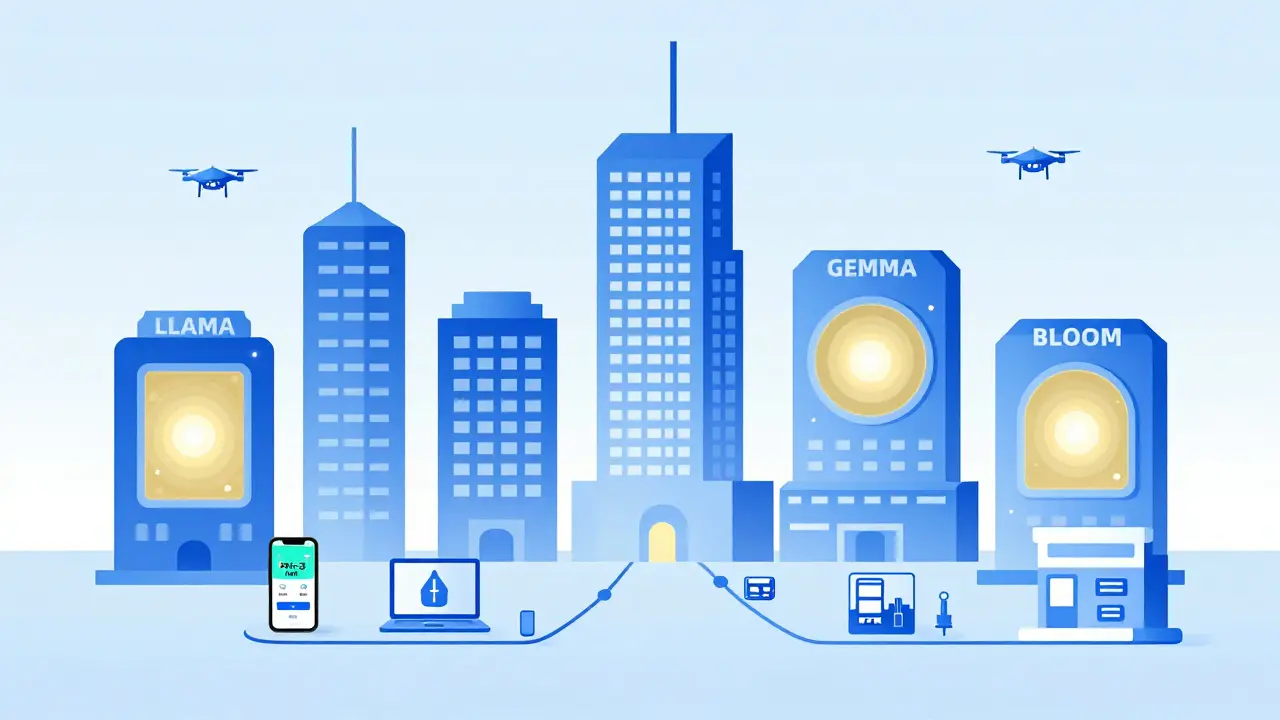

Leading Models and What They Can Actually Do

Not all open-source models are built the same. Each one serves a different purpose, and understanding their strengths helps you choose the right tool.

- LLaMA 3 (Meta, April 2024): With variants from 8B to 70B parameters, it leads in enterprise adoption, holding 41.7% of the open-source LLM market. It handles multilingual customer service, code generation, and reasoning tasks with 47.2% accuracy on the MMLU benchmark. Its 8B version needs only 16GB of VRAM, which is within reach of a high-end laptop.

- Stable Diffusion 3 (Stability AI, June 2024): The go-to for image generation. It produces 1024x1024 images at a rate of 4.7 per second on an NVIDIA A100. Gamers and designers love its customization, though it still trails DALL-E 3 in photorealism (3.2/5 vs. 4.1/5 in human evaluations).

- BLOOM (BigScience, 2022): The most multilingual model, supporting 46+ languages. It scored 52.7% on cross-language reasoning tasks. But it’s huge (176 billion parameters) and needs 320GB of GPU memory. Only large organizations can run it fully.

- Gemma 2 (Google, June 2024): Designed for efficiency. Its 27B version scores 68.4% on HumanEval coding benchmarks and works across TensorFlow, PyTorch, and JAX. It’s optimized for consumer hardware, making it ideal for developers who want flexibility without high-end gear.
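The memory figures in this list follow from simple arithmetic: parameter count times bytes per parameter. Here is a rough sketch of that estimate. It counts weights only (no activations, KV cache, or runtime overhead), and the function name and precision table are illustrative assumptions, not part of any library.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumption: weights only; activations, KV cache, and overhead are ignored.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str = "fp16") -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    models = [("LLaMA 3 8B", 8), ("Gemma 2 27B", 27), ("BLOOM 176B", 176)]
    for name, size in models:
        for precision in ("fp16", "int4"):
            gb = weight_memory_gb(size, precision)
            print(f"{name:12s} {precision}: ~{gb:g} GB")
```

At 16-bit precision the 8B model comes out at about 16 GB, which matches the figure above, and BLOOM lands in the hundreds of gigabytes. Quantizing to 4 bits cuts the estimate to a quarter, which is a large part of why small models fit on consumer hardware.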

These aren’t just academic experiments. Companies are using them. A logistics startup in Poland replaced its proprietary chatbot with LLaMA 3 and cut costs by 70%. A graphic design studio in Mexico uses Stable Diffusion 3 to generate game assets for indie titles. BLOOM powers customer support for a global nonprofit that serves speakers of 20+ languages.

The Governance Problem: Licenses, Limits, and Legal Gray Zones

Here’s the catch: not all open-source AI is truly open. A 2025 analysis by NetApp Instaclustr found that 37% of open-source models had restrictions on commercial use. Some require you to ask permission. Others forbid use in certain industries like healthcare or finance. One model even banned use in any country under U.S. sanctions.

This isn’t a bug; it’s a feature of how decentralized projects evolve. Without a central authority, each team picks its own license. The result? 83 different licensing frameworks in use as of late 2025, according to Stanford HAI. That’s chaos for legal teams. A 2025 IBM survey found that 28% of companies delayed adopting open-source AI because they couldn’t figure out if they were allowed to use it.

Enter the OpenChain AI Working Group, launched in June 2025 with 47 major companies on board. Their goal? Standardize compliance. They’ve already simplified 87% of license processes for enterprise use. Still, many smaller teams don’t even know these standards exist. And that’s the real risk: a company using a model with a hidden restriction could face lawsuits years later.

Hardware Isn’t the Only Barrier: Quality Is

Running a model on your laptop sounds great, until you realize the fine-tuned version you downloaded is full of hallucinations. A 2025 evaluation by EleutherAI found that 22.3% of community-trained models performed worse than their original versions. One popular fine-tune for legal document summarization started inventing case law. Another for medical triage gave dangerously wrong advice.

And documentation? It’s a mess. LLaMA 3 scores 4.5/5 on Hugging Face for clarity. But models built for niche uses, like generating blockchain smart contracts, score only 2.8/5. Users report spending 42 hours on average just trying to get them to work. GitHub issues for BLOOM show 347 open problems around multilingual tokenization. LLaMA 3 has fewer, but still 189 issues, mostly about quantization accuracy.

Community support matters. LLaMA 3’s Discord server has 85,000 members and answers questions in 22 minutes on average. Less popular models? You might wait 14 hours. That’s not just inconvenient; it’s a dealbreaker for businesses needing fast fixes.

The Future: Smaller, Smarter, and More Specialized

The next wave isn’t about bigger models. It’s about making them work everywhere. Microsoft’s Phi-3-mini, with just 3.8 billion parameters, hits 69% of GPT-4’s performance and runs on smartphones. That’s not a gimmick; it’s the future. Edge AI, where models run on devices instead of clouds, is projected to grow at 45% annually through 2027.

Specialization is also accelerating. Healthcare models are growing at 62% per year. Financial models are being trained on private datasets with strict compliance guardrails. And instead of choosing one model, companies are building hybrid systems: using LLaMA 3 as a base, then fine-tuning it internally with proprietary data. Gartner found that 58% of enterprises are already doing this.

Even governance is evolving. The EU AI Act, whose obligations for general-purpose AI models began applying in August 2025, now requires transparency documentation for all foundation models used commercially. That means open-source projects must now publish training data sources, model limitations, and known risks. It’s not perfect, but it’s a step toward accountability.

What This Means for You

If you’re a developer: start with LLaMA 3 or Gemma 2. They’re well-documented, widely supported, and legally safe for most uses. Use Ollama or LM Studio to test them locally before deploying.
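As a minimal sketch of what local testing can look like, the snippet below sends one prompt to an Ollama server over its local HTTP API. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3` has already been pulled. The helper names `build_request` and `generate` are made up for this illustration.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port, model already pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text from the JSON reply."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


# Example (requires a running server):
#   print(generate("List two risks of deploying an unvetted fine-tune."))
```

Because everything stays on `localhost`, prompts never leave the machine, which makes this a low-stakes way to compare models before committing to a deployment.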

If you’re a business: don’t just download a model. Ask: What license is it under? Who maintains it? Is there active support? Have others used it in production? Don’t assume ‘open-source’ means ‘no risk.’

If you’re a user: you’re already benefiting. Your customer service chatbot, your image generator, your translation tool: many of them are powered by open models you’ve never heard of. That’s the quiet revolution: AI becoming invisible, reliable, and accessible.

The open-source trajectory in generative AI isn’t slowing down. It’s maturing. The wild west days are over. Now it’s about building systems that are not just powerful, but trustworthy, sustainable, and truly open.

Can I legally use open-source generative AI models for commercial purposes?

It depends on the license. Some models, like LLaMA 3 and Gemma 2, allow commercial use with few restrictions. Others, especially older or community-built variants, may require permission, prohibit use in certain industries, or ban deployment in specific countries. Always check the license file included with the model. Tools like the OpenChain AI Working Group’s compliance checklist can help you navigate this.
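A first pass over a license file can be partly automated. The sketch below flags lines that mention the kinds of restrictions described in this article (commercial use, permission requirements, industry limits, sanctions). The pattern table is a hypothetical starting point, not an official checklist. A match means "read this clause carefully," not a legal conclusion, and a license with no matches may still carry restrictions.

```python
import re

# Hypothetical triage patterns; tune them for the licenses you actually see.
RESTRICTION_PATTERNS = {
    "commercial use": r"non-?commercial|commercial (use|purposes?)",
    "permission required": r"(prior|written) (permission|consent|approval)",
    "field-of-use limits": r"\b(medical|healthcare|financial|military)\b",
    "geographic limits": r"\b(sanction(s|ed)?|embargo(ed)?|export control)\b",
}


def flag_license_clauses(license_text: str) -> dict[str, list[str]]:
    """Return, per category, the license lines that match a pattern."""
    flags: dict[str, list[str]] = {}
    for line in license_text.splitlines():
        for category, pattern in RESTRICTION_PATTERNS.items():
            if re.search(pattern, line, flags=re.IGNORECASE):
                flags.setdefault(category, []).append(line.strip())
    return flags


if __name__ == "__main__":
    sample = (
        "You may not use the model for commercial purposes.\n"
        "Use in countries under sanctions is prohibited.\n"
        "Attribution is required."
    )
    for category, lines in flag_license_clauses(sample).items():
        print(category, "->", lines)
```

In practice you would pass in the text of the license file that ships with the model, then hand the flagged lines to whoever signs off on compliance.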

Do I need a powerful GPU to run open-source AI models?

No, not anymore. While large models like BLOOM (176B parameters) need multiple high-end GPUs, many modern models are designed for consumer hardware. The 8B version of LLaMA 3 runs on 16GB of VRAM, enough for a high-end laptop. Even smaller models like Phi-3-mini (3.8B) run on smartphones. Tools like Ollama and LM Studio optimize performance for low-resource devices.

Why do some open-source AI models perform worse than the original?

Community fine-tunes often use smaller or lower-quality datasets, or they skip proper evaluation. A 2025 study found 22.3% of community-modified models performed worse than their base versions. Some fine-tunes introduce hallucinations, bias, or instability. Always test any modified model thoroughly before deployment. Stick to well-documented, widely used base models unless you have the expertise to validate changes.
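One simple way to test a modified model is to score it and its base model on the same held-out questions and compare. The sketch below assumes each model can be wrapped as a plain function from prompt to answer, which is a hypothetical interface. It also uses exact-match scoring, a deliberate simplification; real evaluations usually need task-specific metrics.

```python
from typing import Callable, Sequence

Model = Callable[[str], str]  # hypothetical interface: prompt in, answer out
Examples = Sequence[tuple[str, str]]  # (prompt, reference answer) pairs


def accuracy(model: Model, examples: Examples) -> float:
    """Fraction of prompts answered with an exact (case-insensitive) match."""
    if not examples:
        raise ValueError("need at least one example")
    hits = sum(
        model(prompt).strip().lower() == ref.strip().lower()
        for prompt, ref in examples
    )
    return hits / len(examples)


def regressed(base: Model, tuned: Model, examples: Examples,
              tolerance: float = 0.0) -> bool:
    """True if the fine-tune scores below the base by more than `tolerance`."""
    return accuracy(tuned, examples) < accuracy(base, examples) - tolerance


if __name__ == "__main__":
    # Stub models standing in for real inference calls.
    held_out = [("2+2", "4"), ("capital of France", "Paris")]
    base_answers = {"2+2": "4", "capital of France": "Paris"}
    tuned_answers = {"2+2": "4", "capital of France": "Lyon"}
    print(regressed(base_answers.get, tuned_answers.get, held_out))
```

The held-out set should come from your own use case, not from the data the fine-tune was trained on. Otherwise the comparison flatters the fine-tune.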

Are open-source models safer than proprietary ones?

In terms of transparency, yes. With open models, you can inspect the code, check the training data, and verify security patches. This makes them more trustworthy for sensitive use cases. Proprietary models hide their inner workings, which can be risky if they contain hidden biases or backdoors. However, open-source doesn’t automatically mean secure: poorly maintained models can have unpatched vulnerabilities. Always use models from active, reputable communities.

What’s the biggest risk facing open-source AI today?

Fragmented governance. With over 80 different licensing frameworks and no universal standards, enterprises struggle to adopt open models safely. Legal teams can’t keep up. A single model might be fine for internal use but banned for customer-facing applications. Without clearer, unified guidelines, adoption will slow, even if the technology keeps improving.

Open-source generative AI is no longer a niche experiment. It’s the foundation of the next generation of tools. The question isn’t whether to use it; it’s how to use it wisely.

Thabo mangena

March 24, 2026 at 10:07

The rise of open-source generative AI represents a profound shift in the democratization of technological power. It is not merely a technical evolution but a moral imperative toward equitable access to knowledge and innovation. The fact that a student in Cape Town can now run a multilingual model on a secondhand laptop, without corporate permission, is nothing short of revolutionary. This trajectory must be nurtured, protected, and expanded. Governance frameworks must evolve to match the spirit of openness, not constrain it. We owe it to future generations to ensure that AI does not become another tool of exclusion, but one of empowerment.

Karl Fisher

March 26, 2026 at 08:47

Oh wow, LLaMA 3 runs on a MacBook? How quaint. I mean, I remember when we had to train models on 1000+ GPU clusters just to get a decent translation. Now we’re all just downloading apps like it’s a TikTok filter. And don’t even get me started on ‘community fine-tunes’: I’ve seen one that thought the Constitution was written in Klingon. The fact that this is considered progress is honestly embarrassing. Next up: AI-generated Shakespeare on a Casio calculator.

Buddy Faith

March 28, 2026 at 01:44

open source ai is a psyop

big tech lets you run models on your laptop so they can track your prompts

every time you type 'how do i fix my car' they build a profile

they're not giving you freedom they're harvesting your brain

llama 3? more like llama spy

the linux foundation is owned by lockheed martin

you think you're free but you're just another data point in the matrix

Scott Perlman

March 30, 2026 at 01:09

im just happy i can run something on my old laptop without needing a rocket ship

no more waiting for cloud services

no more paywalls

no more begging for api keys

if it works even a little bit its a win

people overthink this stuff too much

just try it

if it breaks you learn

if it helps you its worth it

Sandi Johnson

March 31, 2026 at 15:21

So let me get this straight: companies are delaying AI adoption because they can’t figure out if they’re allowed to use a model that’s technically ‘open’ but has a license written in legalese by a grad student who quit after three weeks? And we’re calling this ‘governance’? I’m not laughing, I’m crying. The only thing more chaotic than the licensing mess is the fact that anyone thought this was a good idea to begin with.

Eva Monhaut

April 1, 2026 at 08:42

What I find most beautiful is how this movement has quietly become a global collaboration, not led by corporations, but by teachers in Nairobi, hackers in Jakarta, and retirees in rural Ohio tinkering with models on Raspberry Pis. The real innovation isn’t in the parameters or the benchmarks; it’s in the community. Someone in Brazil fine-tuned BLOOM to help indigenous elders preserve oral histories. A high schooler in Detroit used Gemma 2 to build a tutor for ESL students. These aren’t side projects; they’re lifelines. The technology is powerful, but the humanity behind it? That’s what changes the world.

mark nine

April 3, 2026 at 05:13

the 8b llama on a mac thing is real

just tried it last night

ran for 3 hours straight asking it to write haikus about my cat

it got kinda weird by the 17th one

but yeah it works

no gpu needed

no cloud fees

just pure dumb luck and good software

people are scared of the license stuff

but honestly if you're not selling it or putting it in medical devices

you're probably fine

just don't be dumb

Tony Smith

April 4, 2026 at 05:23

It is imperative to acknowledge that the proliferation of open-source generative AI models, while laudable in intent, has inadvertently created a regulatory vacuum of unprecedented magnitude. The absence of standardized compliance protocols, coupled with the proliferation of over eighty distinct licensing frameworks, constitutes not merely inefficiency, but systemic risk. Enterprises are not merely hesitant; they are paralyzed. The notion that ‘open-source’ equates to ‘risk-free’ is not only erroneous; it is dangerously naïve. We must institutionalize governance, not through corporate fiat, but through international, consensus-driven standards. The future of AI cannot be left to Discord servers and GitHub issues. It must be codified.