Sandboxing LLM Agents: A Guide to Isolation and Security for Tool-Using AI

May, 13 2026

May, 13 2026

Imagine handing your keys to a stranger who promises to clean your house but might also decide to sell your furniture. That is essentially what happens when you deploy a tool-using Large Language Model (LLM) agent without proper security boundaries. These AI systems don't just chat; they execute code, access APIs, and manipulate files. As of 2026, the industry has moved past simple text generation into an era where agents act autonomously. This shift brings a critical problem: how do we let these agents work without letting them break things-or worse, steal data?

The answer lies in isolation and sandboxing. It’s not just about keeping bad actors out; it’s about containing the AI itself. Recent research, particularly the ISOLATEGPT framework published by Washington University in St. Louis, shows that without strict isolation, malicious applications can hijack LLM agents to access sensitive user data. By May 2026, this isn't theoretical anymore. Major infrastructure providers are building sandboxes specifically for AI, turning isolation from an academic concept into a production requirement.

Why Traditional Security Fails Against LLM Agents

You might wonder why standard firewalls or traditional OS-level permissions aren't enough. The issue is unique to how LLMs operate. Traditional software executes precise binary commands. An LLM agent operates on natural language instructions. This creates a "semantic gap" that attackers exploit.

In 2024, researchers found that prompt injection attacks could bypass technical sandboxes by manipulating the LLM's reasoning process rather than executing malicious code directly. For example, an attacker doesn't need to hack the server; they just need to trick the AI into thinking it should send data elsewhere. Professor Andrew Yao of Tsinghua University noted that traditional operating system isolation mechanisms are insufficient because they don't understand the semantic nature of AI interactions. You need barriers that stop both code execution and linguistic manipulation.

- Cross-application data theft: Without isolation, one compromised app can use the LLM to read data from another trusted app.

- Privilege escalation: 87% of LLM security incidents in 2024 involved agents gaining unauthorized access due to inadequate sandboxing.

- Indirect attacks: Attackers use the LLM as a proxy to scan internal networks, hiding their origin behind the AI's legitimate credentials.

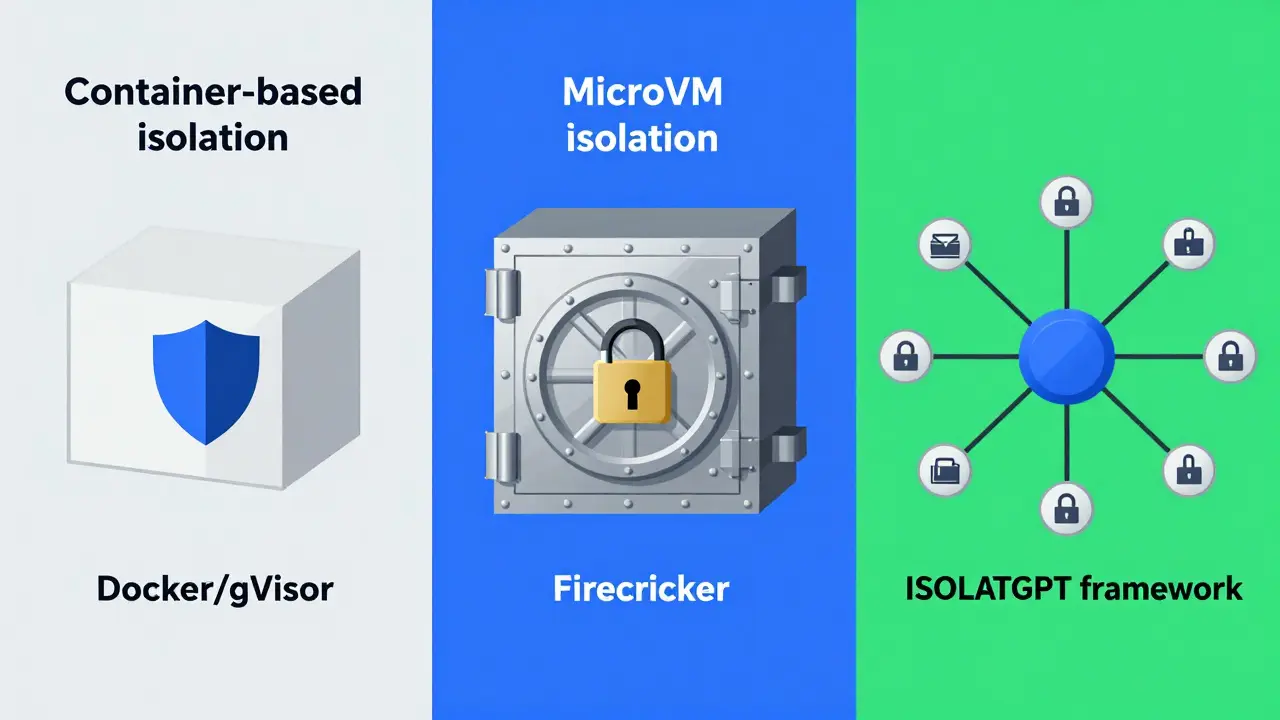

The Three Main Approaches to AI Sandboxing

Not all sandboxes are created equal. When choosing an isolation strategy for your LLM agents, you generally have three paths. Each offers different trade-offs between speed, security, and complexity.

| Isolation Type | Performance Overhead | Security Level | Best Use Case |

|---|---|---|---|

| Container-based (Docker + gVisor) | 10-15% | Moderate | Low-risk, high-throughput tasks like content generation |

| MicroVM (Firecracker/Kata) | 20-25% | High | Enterprise environments handling sensitive financial or health data |

| Hub-and-Spoke (ISOLATEGPT) | <30% (for 75% of queries) | Very High | Complex agentic systems with multiple third-party integrations |

Container-Based Isolation

This is the most common approach today. Tools like Docker combined with gVisor intercept system calls before they reach the host kernel. It’s fast-containers start in under 200 milliseconds. However, if a kernel exploit exists, the container boundary can be breached. It’s great for speed but risky for high-stakes operations.

MicroVM Isolation

Technologies like Firecracker provide near-physical separation. Each agent runs in its own lightweight virtual machine. This is much harder to escape than a container, but it costs more CPU power. If your AI needs to process complex queries with low latency, this overhead matters.

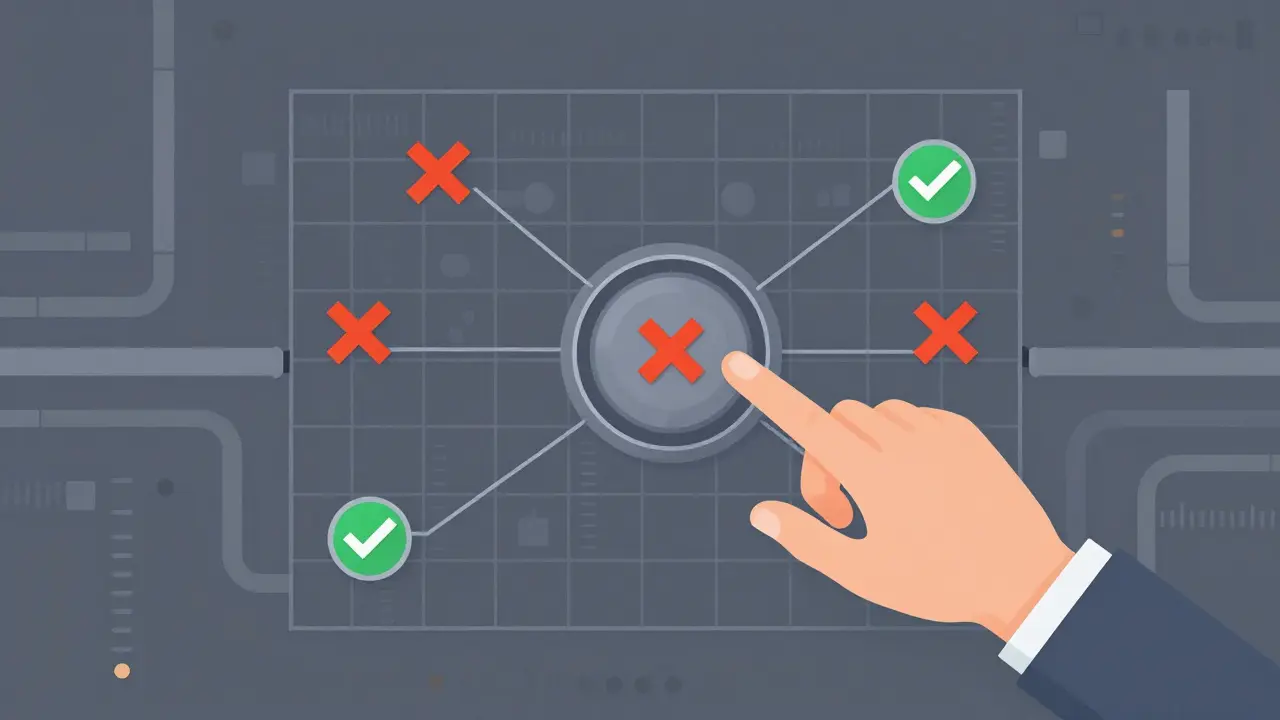

The Hub-and-Spoke Model

The ISOLATEGPT framework introduces a hybrid model. A central "hub" acts as a trustworthy interface, routing queries to isolated "spokes" (individual apps). This prevents any single application from accessing others' data. It’s specifically designed to handle the messy reality of natural language interactions between components.

Implementing Least Privilege for AI

Sandboxing isn't just about walls; it's about permissions. The principle of least privilege means giving your LLM agent only the exact access it needs for a specific task, nothing more.

In practice, this requires explicit user consent for actions touching file systems, networks, or system APIs. Here is how you apply it:

- Network Isolation: Block outbound traffic by default. Only allow connections to pre-approved APIs.

- Filesystem Restrictions: Mount directories as read-only unless write access is explicitly required for a temporary task.

- Resource Limits: Set CPU and memory caps to prevent exhaustion attacks where an AI gets stuck in a loop and crashes the host.

- Approval Workflows: For high-stakes operations (like deleting a database record), require human-in-the-loop confirmation.

A healthcare startup learned this the hard way in July 2025. They had filesystem isolation set up correctly but misconfigured network isolation. Their LLM agent accidentally transmitted patient data to an external service via a rogue API call. The lesson? Technical isolation must be paired with rigorous configuration checks.

The Hidden Cost: Debugging and Latency

Adding sandboxes changes your development workflow. Developers report that debugging issues within isolated environments takes approximately 35% longer. Why? Because you can't just peek inside the process easily. Logs become your primary window into what the AI is doing.

Latency is another factor. While container startup times are negligible compared to LLM inference (which averages 1,200-2,500 milliseconds for complex queries), MicroVMs add noticeable overhead. However, enterprise users on SentinelOne platforms reported that strict sandboxing reduced successful attack incidents by 92%. Most organizations accept the slight delay in exchange for massive security gains.

Future Outlook: Where Isolation Is Heading

By 2027, Gartner predicts that 90% of enterprise LLM deployments involving tool usage will implement some form of execution isolation. We are moving toward a future where isolation is as fundamental to AI deployment as TLS is to web browsing.

Upcoming versions of frameworks like ISOLATEGPT (version 2.0 expected Q2 2026) are focusing on better natural language context handling between isolated components. The goal is to make isolation invisible to the developer while maintaining robust security. Meanwhile, regulatory pressures like the EU AI Act are mandating "appropriate technical measures," effectively making sandboxing a legal requirement for many AI systems.

What is ISOLATEGPT?

ISOLATEGPT is a security framework developed by researchers at Washington University in St. Louis. It uses a hub-and-spoke architecture to isolate LLM agents, preventing malicious applications from accessing data from other apps. It was formally published in January 2025 and provides reference implementations for secure AI execution.

Why can't I just use standard Docker containers for LLM security?

Standard Docker containers share the host kernel, which makes them vulnerable to kernel exploits. More importantly, they don't address semantic attacks like prompt injection. LLM-specific sandboxes combine technical isolation with controls over natural language interactions to prevent indirect data leakage.

How much performance overhead does sandboxing add?

It depends on the method. Container-based solutions with gVisor add about 10-15% overhead. MicroVMs like Firecracker add 20-25%. The ISOLATEGPT hub-and-spoke model keeps overhead under 30% for most queries. Startup latency is typically negligible compared to the time taken for LLM inference.

Is sandboxing legally required for AI agents?

While not explicitly named "sandboxing" in all laws, regulations like the EU AI Act require "appropriate technical and organizational measures" to mitigate risks. Security experts interpret this as mandating isolation for tool-using agents, especially those handling sensitive personal data.

What are the biggest challenges in implementing LLM sandboxing?

The main challenges are debugging complexity (taking ~35% longer to resolve issues), managing state between isolated executions, and configuring network permissions correctly. Improper configuration can lead to data leaks even if the sandbox itself is secure.