Statistical NLP vs Neural NLP: Why Large Language Models Rewrote the Playbook

Mar 19, 2026

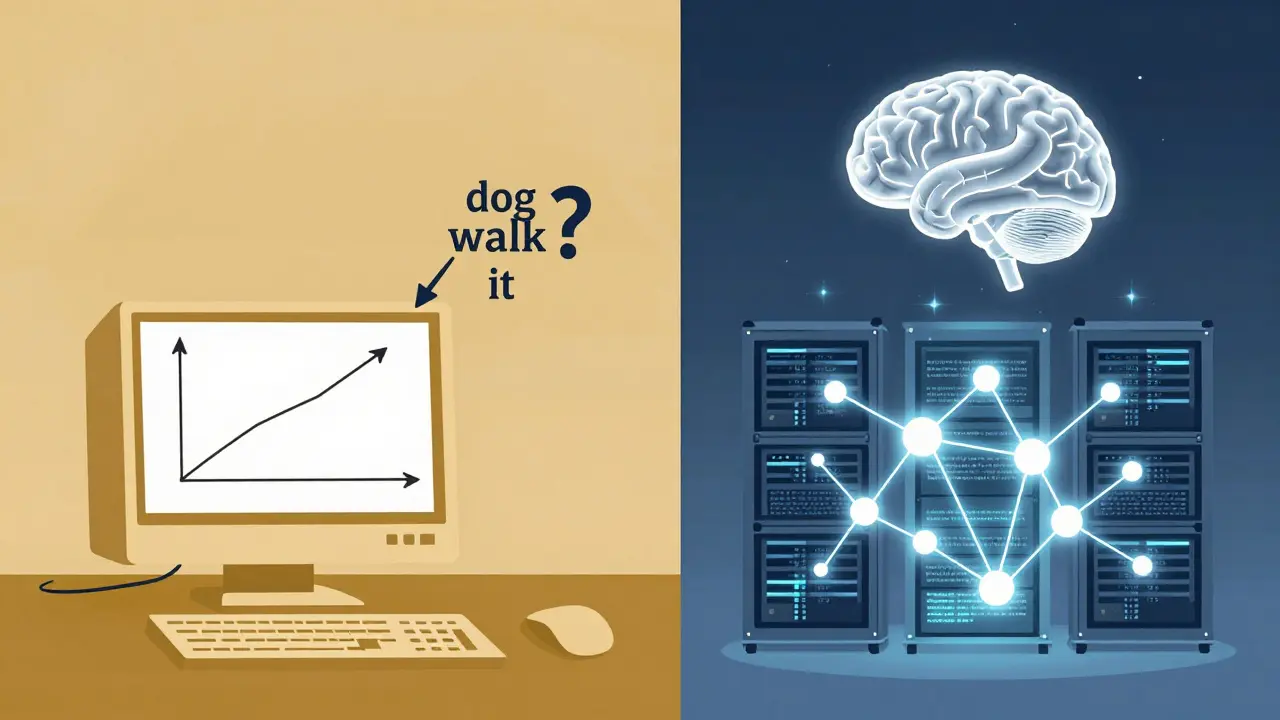

For decades, computers struggled to understand human language. Spellcheckers got better, autocorrect got smarter, but machines still couldn’t hold a real conversation. Then, around 2018, everything changed. The rise of large language models didn’t just improve NLP - it erased the old rulebook and started over. If you’re still using statistical methods for language tasks today, you might be working with tools designed for a different era.

What Was Statistical NLP?

Statistical NLP was the workhorse of language processing from the 1990s through the 2010s. It didn’t try to understand language the way a person does. Instead, it counted. It looked at millions of sentences and asked: "How often does word A come after word B?" Based on those patterns, it guessed the next word, corrected spelling, or tagged parts of speech. Think of it like predicting the next note in a song by listening to every song ever written: if a C was followed by an E 87% of the time, the system would pick E. Simple. Reliable. But limited.

Models like Hidden Markov Models and n-gram language models were the backbone, and libraries like NLTK and spaCy made them accessible. They powered early chatbots, search autocomplete, and T9 texting. You could run them on a laptop. Training took hours, not weeks. And because every decision was based on clear counts and probabilities, you could trace why a model made a choice. That mattered, especially in healthcare, finance, or legal systems where you need to explain every output.

But there was a flaw. These models didn’t understand context beyond a few words. Take the sentence "I took the dog for a walk because it was raining." A statistical model might link "it" to the dog, simply because "dog" was the most recent noun. It couldn’t grasp that "it was raining" is about the weather, not the dog. It had no sense of the bigger picture.

The Neural NLP Revolution

Everything shifted in 2017 with a paper called "Attention Is All You Need." It introduced the Transformer, a new architecture that changed how machines processed language. Instead of reading words one at a time, Transformers looked at all of them at once and asked: "Which words in this sentence matter most to each other?"

This wasn’t just an upgrade. It was a leap. Suddenly, machines could work out that "it" in the sentence above refers to the weather, not the dog. They could write poetry, summarize legal documents, and answer complex questions, not because they were programmed to, but because they learned patterns from massive amounts of text.

BERT, GPT-2, and GPT-3 followed. By 2020, GPT-3 had 175 billion parameters: 175 billion numbers the model adjusted during training to predict language. Statistical models had thousands or millions. The gap wasn’t just bigger; it spanned several orders of magnitude.

The results spoke for themselves. On the GLUE benchmark, a standard test suite for language understanding, BERT-large scored 80.5, about 7.7 points above the previous state of the art, while earlier pre-Transformer systems topped out around 70. (BERT’s often-quoted 93.2 is its F1 score on the SQuAD v1.1 question-answering test, not its GLUE score.) In medical text analysis, machine-learned models found 9 out of 26 key findings that rule-based systems missed. The difference wasn’t marginal. It was transformative.

Why LLMs Won - And Where They Still Lose

Large language models dominate today because they’re better at almost everything: generating coherent text, answering open-ended questions, translating with nuance, even writing code. Companies like Babylon Health cut content creation time from three weeks to three hours using fine-tuned LLMs. Customer service bots now sound human. Developers use them to brainstorm ideas, debug code, or draft emails.

But they’re not perfect. LLMs hallucinate: a 2023 Stanford study found that 18-25% of their outputs contain made-up facts. They amplify bias: one MIT study showed LLM-generated text had 37% higher bias than human-written text. And they’re expensive. Training GPT-3 cost an estimated $4.6 million, and running a model of that size requires servers with hundreds of gigabytes of memory. Most small businesses can’t afford it.

And here’s the kicker: no one knows exactly how they make decisions. A 2022 study found that 78% of LLM decisions in medical applications couldn’t be traced back to training data. If a bank denies a loan based on an LLM’s analysis, can you explain why? In regulated industries, that’s a dealbreaker.
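The "hundreds of gigabytes" figure follows from simple arithmetic: every parameter has to sit in memory as a number, and its size depends on the precision it is stored in. The sketch below counts only the weights, assuming 4, 2, or 1 bytes per parameter; real deployments also need memory for activations and caches, so treat these numbers as lower bounds. The function name is illustrative.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


GPT3_PARAMS = 175e9  # GPT-3's published parameter count

# Common storage precisions and their size per parameter (weights only).
for precision, nbytes in [("fp32", 4), ("fp16", 2), ("int8", 1)]:
    print(f"{precision}: {weight_memory_gb(GPT3_PARAMS, nbytes):,.0f} GB")
# fp32: 700 GB, fp16: 350 GB, int8: 175 GB
```

Even at 16-bit precision the weights alone come to about 350 GB, far more than a single consumer GPU holds, which is why models of this size are split across several accelerators.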

Where Statistical NLP Still Wins

If you work in healthcare, finance, or government, where explainability isn’t optional, statistical NLP is still alive. At Mayo Clinic, developers still use spaCy’s rule-based matchers to extract patient information. Why? Because every decision must be audited. Clinicians need to know: "Why did the system flag this term?" Statistical and rule-based models give clear, traceable reasons. LLMs? They say "I think this patient has diabetes" but can’t show you the evidence.

They’re also cheaper. Running NLTK on a Raspberry Pi? Easy. Using GPT-3? You need cloud credits, API limits, and a budget. A 2023 Reddit thread from an NLP engineer at a hospital said it best: "I need to explain every decision to regulators. LLMs fail audit requirements."

Statistical models also handle edge cases more predictably. If a patient’s record says "hypertens.," a rule-based system can be given an explicit rule that maps it to "hypertension." An LLM might guess wrong, or hallucinate a diagnosis.

The Hybrid Future

The future isn’t about choosing one over the other. It’s about combining them. Meta AI’s Atlas model, published in 2022, first retrieves relevant passages from a document index, then lets a neural model write the answer from them. The result? 34% fewer hallucinations. Microsoft’s Phi-2, a small 2.7-billion-parameter model, matches much larger LLMs on several benchmarks by training on high-quality data, evidence that you don’t always need massive models.

Some prominent researchers agree. Dr. Yoshua Bengio, a Turing Award winner, says the future lies in neuro-symbolic systems: neural networks for pattern recognition, paired with symbolic rules for logic and precision. A 2018 Stanford study found that hybrid systems achieved 89.7% accuracy in medical text analysis, higher than either approach alone. By 2026, IDC predicts 65% of new enterprise NLP systems will be hybrid. That’s not a compromise. It’s the smartest path forward.

What Should You Use?

Ask yourself:

- Do you need to explain every decision? → Use statistical methods.

- Are you building a chatbot that writes marketing copy? → Use LLMs.

- Do you have limited computing power? → Stick with spaCy or NLTK.

- Are you working in healthcare or finance? → Start with rules, then add LLMs for enhancement.

- Do you need to generate long, creative text? → LLMs are your only real option.

Real-World Trade-offs

| Feature | Statistical NLP | Neural NLP (LLMs) |
|---|---|---|
| Parameter Size | Thousands to millions | Billions to trillions |
| Hardware Needed | Laptop or server | High-end GPU clusters |
| Context Awareness | Local (few words) | Global (entire document) |
| Long-Term Dependencies | Poor | Excellent |
| Interpretability | High - traceable logic | Low - "black box" |
| Accuracy (Language Tasks) | 60-75% | 85-95% |
| Training Cost | $100-$1,000 | $1M-$10M+ |
| Latency | Milliseconds | Seconds to minutes |
| Best For | Regulated industries, rule-based extraction, low-resource environments | Content generation, chatbots, translation, complex reasoning |
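The "Context Awareness" row comes down to attention: each token scores itself against every other token in the input, so distance in the sentence doesn’t limit what it can draw on. Below is a minimal, pure-Python sketch of scaled dot-product self-attention, softmax(QKᵀ/√d)·V. It assumes Q = K = V = the raw embeddings; real Transformers apply learned projection matrices, use many heads, and run on tensors, all of which are omitted here. The four-token embeddings are made up for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (no learned projections).

    Returns (outputs, weights); weights[i][j] is how much token i attends to token j.
    """
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # Score this token against every token in the sequence, not just its neighbours.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in x]
        w = softmax(scores)
        weights.append(w)
        # Each output vector is a weighted average of all value vectors.
        outputs.append([sum(w_j * v[i] for w_j, v in zip(w, x)) for i in range(d)])
    return outputs, weights


# Made-up 3-dimensional embeddings, purely for illustration.
tokens = ["dog", "walk", "it", "raining"]
embeddings = [
    [1.0, 0.2, 0.0],
    [0.8, 0.5, 0.1],
    [0.1, 0.3, 0.9],
    [0.0, 0.2, 1.0],
]

outputs, weights = self_attention(embeddings)
for tok, row in zip(tokens, weights):
    print(tok, [round(w, 2) for w in row])
```

With these hand-picked vectors, the row for "it" puts its largest weight on "raining", but only because the toy embeddings were chosen that way; in a trained model the vectors, and therefore the weights, are learned from data.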

Statistical NLP isn’t dead. It’s just not the star anymore. And that’s okay. Sometimes, the quiet workhorse is more valuable than the flashy new machine.

Are statistical NLP methods still used today?

Yes. While large language models dominate headlines, statistical methods are still widely used in healthcare, finance, legal tech, and government systems where explainability and auditability matter. Tools like spaCy and NLTK remain popular for named entity recognition, rule-based text matching, and low-resource deployments. A 2022 HL7 report found that 85% of healthcare NLP applications still rely on rule-based or statistical components.
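Rule-based text matching is easy to audit because every hit carries the name of the rule that produced it. spaCy offers this through its `Matcher` and `EntityRuler` components; the sketch below uses only Python’s `re` module as a stand-in so it runs without downloading a model. The rule IDs, patterns, and the clinical note are hypothetical.

```python
import re
from typing import NamedTuple


class Match(NamedTuple):
    rule_id: str     # which rule fired: the audit trail
    text: str        # exact span found in the note
    start: int
    end: int
    normalized: str  # canonical term the rule maps to


# Hypothetical rule table: (rule id, regex pattern, normalized term).
RULES = [
    ("HTN_ABBREV", r"\bhypertens\.", "hypertension"),
    ("HTN_FULL", r"\bhypertension\b", "hypertension"),
    ("DM_ABBREV", r"\bdiab\.", "diabetes"),
]
COMPILED = [(rid, re.compile(pat, re.IGNORECASE), norm) for rid, pat, norm in RULES]


def extract(text):
    """Apply every rule and return matches in document order, each tagged with its rule id."""
    found = []
    for rule_id, pattern, normalized in COMPILED:
        for m in pattern.finditer(text):
            found.append(Match(rule_id, m.group(0), m.start(), m.end(), normalized))
    return sorted(found, key=lambda match: match.start)


note = "Pt hx: hypertens., diab. and family history of hypertension"
for match in extract(note):
    print(f"{match.rule_id}: {match.text!r} -> {match.normalized} at {match.start}")
```

Each printed line answers the auditor’s question "why was this flagged?" by naming the rule. Extending coverage means adding a row to `RULES`, which is itself easy to review.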

Why did LLMs replace statistical models in most applications?

LLMs outperformed statistical models on nearly every benchmark, especially in tasks requiring context, creativity, or long-range understanding. While statistical models predicted the next word from the last few words, LLMs could take entire paragraphs into account. BERT-large scored 80.5 on the GLUE benchmark, well ahead of earlier systems, which topped out around 70. The jump in quality was so dramatic that companies abandoned older systems for LLMs in customer service, content creation, and search.
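Predicting the next word from the last few words is exactly what an n-gram model does. The sketch below is a bigram model (a context of one previous word) built purely from counts with the standard library; NLTK ships its own n-gram tooling, but this version keeps every step visible. The function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count how often each word follows each other word (a bigram model)."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split()  # <s> marks the sentence start
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return (most likely next word, its relative frequency), or None for unseen words."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    best, best_count = followers.most_common(1)[0]
    return best, best_count / sum(followers.values())


corpus = [
    "I took the dog for a walk",
    "the dog barked",
    "the dog slept",
    "the cat slept",
]
model = train_bigrams(corpus)
print(predict_next(model, "the"))    # ('dog', 0.75): "dog" followed "the" 3 times out of 4
print(predict_next(model, "zebra"))  # None: no counts, no prediction
```

The prediction traces back to a raw count, which is the interpretability the article credits to statistical methods. The same design is also the limitation: the model never sees more than one previous word, so nothing earlier in the sentence can influence its guess.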

Can you use statistical and neural NLP together?

Absolutely. Hybrid systems are becoming the norm. Meta AI’s Atlas model combines document retrieval with neural generation to reduce hallucinations. At Stanford Medical Center, combining rule-based filters with machine learning boosted accuracy to 89.7%. Many enterprises now use statistical methods for data cleaning and validation, then feed the results into LLMs for generation. This approach balances performance with reliability.
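A hybrid pipeline of this kind can be sketched in three steps: normalize the text with explicit rules, retrieve the most relevant source passages with a classic statistical scorer, and hand only those passages to the generator. Retrieval-augmented models such as Atlas use a learned neural retriever; this sketch swaps in plain TF-IDF for illustration. The abbreviation table, documents, and function names are hypothetical, and no LLM call is shown: `build_prompt` only returns the text you would send to whichever model you use.

```python
import math
import re
from collections import Counter

# Hypothetical rule table for the cleanup step.
ABBREVIATIONS = {"hypertens.": "hypertension", "diab.": "diabetes"}


def normalize(text):
    """Rule-based cleanup: lowercase and expand known abbreviations."""
    text = text.lower()
    for abbr, full in ABBREVIATIONS.items():
        text = text.replace(abbr, full)
    return text


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text)


def tfidf_rank(query, docs):
    """Rank documents by the summed TF-IDF weight of the query terms (a classic IR scorer)."""
    doc_tokens = [Counter(tokenize(normalize(d))) for d in docs]
    n = len(docs)

    def idf(term):
        df = sum(1 for toks in doc_tokens if term in toks)
        return math.log((n + 1) / (df + 1)) + 1  # smoothed inverse document frequency

    q_terms = tokenize(normalize(query))
    scored = []
    for i, toks in enumerate(doc_tokens):
        length = sum(toks.values()) or 1
        score = sum(toks[t] / length * idf(t) for t in q_terms)
        scored.append((score, i))
    scored.sort(key=lambda s: (-s[0], s[1]))  # highest score first, ties by position
    return [docs[i] for _, i in scored]


def build_prompt(question, docs, k=1):
    """Keep only the top-k retrieved passages as evidence for the generator."""
    evidence = "\n".join(f"- {d}" for d in tfidf_rank(question, docs)[:k])
    return f"Answer using only these sources:\n{evidence}\n\nQuestion: {question}"


docs = [
    "Patient has hypertens. and takes lisinopril.",
    "Patient reports knee pain after a fall.",
    "Follow-up scheduled in two weeks.",
]
question = "Does the patient have hypertension?"
print(build_prompt(question, docs))
```

The rule step is what lets the abbreviated "hypertens." in the first note match the query term "hypertension"; without it, the retriever would see two different words.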

What are the biggest downsides of large language models?

LLMs have three major issues: they hallucinate (make up facts), they’re hard to explain (black-box behavior), and they’re expensive to run. A 2023 Stanford study found 18-25% of LLM outputs contain false information, which is dangerous in regulated fields. Training a single LLM like GPT-3 cost an estimated $4.6 million. Their environmental impact is also high: a widely cited 2019 study estimated that training one large Transformer model, including neural architecture search, could emit as much CO2 as five cars over their lifetimes.

Is it worth learning statistical NLP today?

If you work in regulated industries, healthcare, or with limited resources, yes. Understanding statistical NLP helps you build more reliable systems, debug issues faster, and combine tools effectively. Even if you use LLMs, knowing how statistical models work lets you design better prompts, validate outputs, and create hybrid pipelines that are more trustworthy. Many job postings still list spaCy or NLTK as required skills.

Rajat Patil

March 20, 2026 AT 07:34

Statistical NLP still saves lives in hospitals every day. I’ve seen systems flag a patient’s ‘hypertens.’ as hypertension and trigger an alert: no guesswork, no hallucination. When you’re dealing with real people, not chatbots, clarity matters more than flair.

LLMs are impressive, sure. But when a doctor needs to justify a decision to a regulator, they need to see the rule that triggered it. Not a black box saying ‘I think.’

deepak srinivasa

March 22, 2026 AT 01:59

It’s wild how we keep acting like LLMs are the only way forward. I work in rural India with limited bandwidth. My NLP tool runs on a Raspberry Pi with NLTK. It doesn’t write poetry, but it extracts patient names from handwritten notes. That’s enough.

Why force a 175B-parameter model on a system that can’t even afford a decent internet connection?

pk Pk

March 22, 2026 AT 21:14

Hey everyone, let’s not pit these against each other. I’ve built systems that use both, and they’re better together. Rule-based filters clean the data first, then I feed it to a tiny LLM. The result? Fewer errors, lower cost, and still human-readable logic.

At my hospital, we cut false positives by 40% using this hybrid. No need to choose. We’re not in a war. We’re in a toolkit.

Also, shoutout to spaCy. It’s still the MVP for named entity recognition. Don’t let the hype make you forget the basics.

NIKHIL TRIPATHI

March 24, 2026 AT 12:15

Been in this field since 2015. Back then, we were proud if our n-gram model got 68% accuracy on POS tagging. Now? LLMs hit 93%. It’s like going from horse cart to SpaceX.

But here’s the thing: I still use statistical methods for preprocessing. Why? Because LLMs choke on typos, abbreviations, and messy medical notes. A simple rule, ‘hypertens.’ → ‘hypertension’, is 10 lines of code, not 10 million parameters.

Hybrid isn’t a compromise. It’s smart engineering. And honestly? Most companies don’t need GPT-4. They need something that works offline, on a budget, without hallucinating a patient’s diagnosis.

Shivani Vaidya

March 25, 2026 AT 20:49

LLMs are flashy. Statistical models are quiet heroes.

I work in legal compliance. Every output must be traceable. If a system says ‘this contract is invalid,’ I need to show the exact rule that says so, not a neural net’s gut feeling.

My team still uses spaCy with custom rules. It’s not glamorous. But it passes audits. And that’s what matters.

Rubina Jadhav

March 27, 2026 AT 11:08

My dad’s a doctor. He uses an old system to pull data from records. It doesn’t talk. It doesn’t write essays. It just finds ‘diab.’ and tags it as diabetes. Simple. Reliable. No drama.

LLMs? Too noisy. Too expensive. Too risky.

sumraa hussain

March 27, 2026 AT 21:24

Bro. LLMs are like that one friend who shows up to every party with a new outfit and a 10-minute story about their ‘epiphany,’ but forgets to pay the bill.

Statistical NLP? That’s the guy who shows up in the same hoodie every time, brings snacks, fixes your Wi-Fi, and never says ‘I think.’ He just does it.

And guess what? The hospital still calls him when the system crashes.

Respect the grind. The flashy stuff doesn’t run on a Raspberry Pi. But the quiet ones? They keep the lights on.