Transformer Architecture in Generative AI: A Practical Guide for Engineers

Mar 1, 2026

When you type a question into a chatbot and get a thoughtful, human-like answer in under a second, you're not interacting with magic. You're interacting with a transformer architecture. This is the engine behind every major generative AI model today - from ChatGPT to Claude to Gemini. If you're an engineer working with AI, understanding how transformers actually work isn't optional anymore. It's the baseline. Forget the hype. Let’s break down what’s inside, how it moves data, and why it outperforms everything that came before.

Why Transformers Replaced RNNs and CNNs

Before transformers, the go-to models for language were Recurrent Neural Networks (RNNs) and Convolutional Neural Networks (CNNs). RNNs processed text one token at a time, with each step waiting on the result of the one before it. That created a bottleneck. If a sentence had 100 words, the model had to make 100 sequential steps. Long-range dependencies - like connecting the subject of a sentence to its verb 50 words later - got lost. CNNs were faster but still couldn’t capture context across long distances efficiently. They handled local patterns well but missed the big picture. Transformers changed all that. Instead of processing text step by step, they look at the entire sequence at once. Think of it like reading a paragraph and understanding every word’s relationship to every other word in a single glance. That’s the power of self-attention, a mechanism that lets each token in a sequence weigh how relevant every other token is to it. This isn’t just an improvement. It’s a paradigm shift. By removing sequential constraints, transformers became massively parallelizable. That’s why they run efficiently on GPUs and scale to billions of parameters.

The Three Core Components of a Transformer

Every transformer model, no matter how complex, is built from three essential parts:

- Embedding Layer - This takes your raw text and turns it into numbers. Words are split into tokens: small units such as whole words or subwords (a tokenizer might split "understand" into "under" and "stand", though the exact split depends on the tokenizer). Each token is mapped to a dense vector (embedding) that captures meaning. Positional encoding is added to these vectors so the model knows word order - because without it, "dog bites man" and "man bites dog" would look identical.

- Transformer Blocks - These are the workhorses. Each block contains two main sub-layers: multi-head self-attention, which lets the model focus on different aspects of the relationships between tokens simultaneously, and a feed-forward neural network, a small fully connected network applied independently to each token position. Each sub-layer is wrapped in a residual connection (a skip-link that helps gradients flow during training, enabling deeper networks) and layer normalization (which stabilizes training by normalizing activations across features). The model repeats this block many times: the smallest GPT-2 uses 12 blocks, the largest GPT-3 uses 96, and OpenAI has not published the figure for GPT-4.

- Unembedding Layer - After the final transformer block, the output vectors are passed through a linear layer that maps them to one score (logit) per vocabulary entry. A softmax turns these scores into a probability distribution over all possible next tokens. The model then picks a token - the highest-probability one under greedy decoding, or a sample from the distribution - appends it to the sequence, and repeats the process until the output is complete.
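To make the unembedding step concrete, here is a minimal sketch in plain Python. The four-token vocabulary, the weight values, and the names `unembed`, `VOCAB`, and `UNEMBEDDING` are all made up for illustration; a real model has tens of thousands of vocabulary entries and learned weights.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def unembed(hidden, unembedding):
    """Project one hidden vector onto the vocabulary: one logit per token."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in unembedding]

# Hypothetical 4-token vocabulary with a 3-dimensional hidden size.
VOCAB = ["dog", "bites", "man", "<eos>"]
UNEMBEDDING = [
    [0.2, -0.1, 0.4],   # weights for "dog"
    [1.5, 0.3, -0.2],   # weights for "bites"
    [-0.3, 0.8, 0.1],   # weights for "man"
    [0.0, -0.5, 0.2],   # weights for "<eos>"
]

final_hidden = [1.0, 0.5, -1.0]  # stand-in for the last block's output
probs = softmax(unembed(final_hidden, UNEMBEDDING))

# Greedy decoding: take the index with the highest probability.
next_token = VOCAB[max(range(len(probs)), key=probs.__getitem__)]
```

Swapping the last line for a weighted random draw from `probs` would give sampling instead of greedy decoding.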

This structure is clean, modular, and scalable. You can swap out tokenizers, increase embedding dimensions, or add more layers without redesigning the whole system.
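The residual connection and layer normalization inside each block can be sketched in a few lines. This is a simplified illustration, not a production layer: it omits the learned gain and bias that real layer normalization carries, uses the pre-norm arrangement (one common choice among several), and `toy_feed_forward` is a hypothetical stand-in with no learned weights.

```python
import math

def layer_norm(vec, eps=1e-5):
    """Normalize one token vector to zero mean and unit variance across features."""
    mean = sum(vec) / len(vec)
    var = sum((x - mean) ** 2 for x in vec) / len(vec)
    return [(x - mean) / math.sqrt(var + eps) for x in vec]

def pre_norm_block(x, sublayer):
    """Compute x + sublayer(layer_norm(x)) for a sequence of token vectors."""
    normed = [layer_norm(tok) for tok in x]
    sub_out = sublayer(normed)
    # Residual connection: add the untouched input back to the sub-layer output.
    return [[a + b for a, b in zip(tok, out_tok)] for tok, out_tok in zip(x, sub_out)]

def toy_feed_forward(x):
    """Hypothetical position-wise sub-layer: a ReLU applied to each token independently."""
    return [[max(0.0, v) for v in tok] for tok in x]

x = [[1.0, -2.0, 3.0, 0.5], [0.0, 4.0, -1.0, 2.0]]
out = pre_norm_block(x, toy_feed_forward)
```

Because the input is added back unchanged, a sub-layer that outputs zeros leaves the sequence untouched, which is part of why deep stacks of these blocks remain trainable.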

How Self-Attention Actually Works

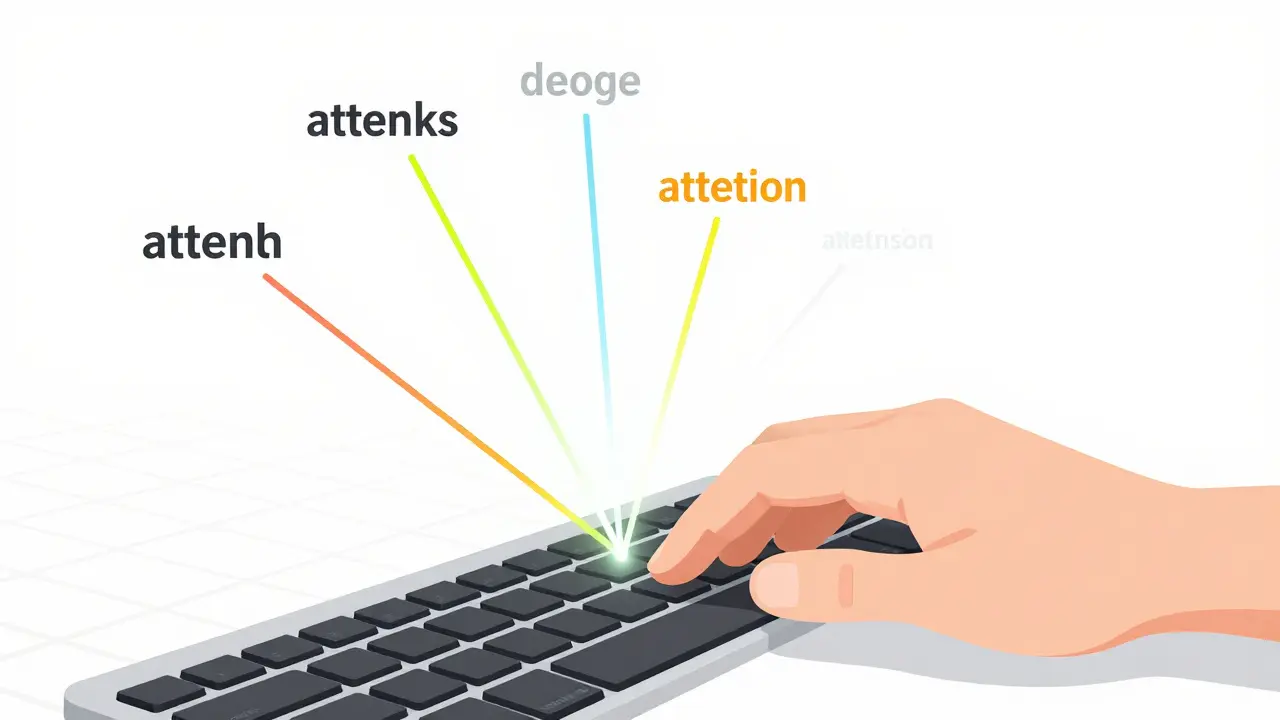

Self-attention is the secret sauce. Here’s how it works in practice:

For each token, the model calculates three vectors: query, key, and value. Each is produced by multiplying the token’s embedding by a learned weight matrix. The model then computes a dot-product score between every query and every key, scaled by the square root of the key dimension - this tells it how much attention to pay to each token. The scores are passed through a softmax to turn them into weights that sum to one. Finally, each value is weighted by its attention weight and summed up to produce the output for that token.
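The steps above can be sketched as a single-head, scaled dot-product attention in plain Python. The helper names (`matmul`, `self_attention`) and the identity weight matrices in the usage lines are illustrative assumptions; real models learn the three projection matrices and run this on tensors rather than nested lists. The sketch divides the scores by the square root of the key dimension, as standard scaled dot-product attention does.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x, w_q, w_k, w_v):
    """Single-head self-attention.

    x: (seq_len, d_model) token vectors; w_q, w_k, w_v: (d_model, d_k) projections.
    Returns the output vectors and the attention weights.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]            # transpose of k
    scores = [[s / math.sqrt(d_k) for s in row]     # scaled query-key dot products
              for row in matmul(q, k_t)]
    weights = [softmax(row) for row in scores]      # each row sums to 1
    return matmul(weights, v), weights              # weighted sum of values

# Three tokens with 2-dimensional embeddings; identity projections for readability.
identity = [[1.0, 0.0], [0.0, 1.0]]
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
output, weights = self_attention(x, identity, identity, identity)
```

With these toy inputs, the first token puts more weight on itself and on the third token than on the second, because its query has a larger dot product with their keys.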

Why is this powerful? Because it captures context dynamically. In the sentence "The cat sat on the mat because it was tired," the word "it" can now attend to "cat" and not "mat." The model doesn’t need to memorize rules - it learns which words matter together through training.

Multi-head attention takes this further. Instead of one attention mechanism, the model runs several in parallel. Each head learns a different kind of relationship - one might focus on syntax, another on semantics, another on entity references. The outputs are concatenated and projected into a final representation. This is why transformers outperform older models: they don’t just see relationships - they see many kinds of relationships at once.
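A rough sketch of the split-attend-concatenate core of multi-head attention follows. To stay short it deliberately leaves out the learned per-head projections and the final output projection mentioned above, so it is a simplification rather than a full layer; the function names are illustrative.

```python
import math

def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attend(q, k, v):
    """Scaled dot-product attention for one head; q, k, v are lists of vectors."""
    d_k = len(k[0])
    out = []
    for qi in q:
        weights = softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k])
        out.append([sum(w * vj[d] for w, vj in zip(weights, v)) for d in range(len(v[0]))])
    return out

def split_heads(x, num_heads):
    """Slice each token vector into num_heads equal chunks, one list of tokens per head."""
    d_head = len(x[0]) // num_heads
    return [[tok[h * d_head:(h + 1) * d_head] for tok in x] for h in range(num_heads)]

def multi_head_attention(q, k, v, num_heads):
    """Run attention independently per head, then concatenate along the feature axis."""
    heads = [
        attend(qh, kh, vh)
        for qh, kh, vh in zip(split_heads(q, num_heads),
                              split_heads(k, num_heads),
                              split_heads(v, num_heads))
    ]
    return [sum((head[t] for head in heads), []) for t in range(len(q))]

x = [[1.0, 0.0, 0.5, 0.2], [0.3, 0.9, 0.1, 0.4], [0.0, 0.2, 0.8, 0.6]]
out = multi_head_attention(x, x, x, num_heads=2)
```

Each head only sees its own slice of the features, so the two heads here compute different attention patterns over the same three tokens.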

Encoder vs. Decoder: Two Sides of the Same Coin

Not all transformers are built the same. There are three main architectures:

- Encoder-only - Models like BERT use only encoder blocks. They’re great for understanding text. You feed in a sentence, and it outputs a rich representation of each token. That’s why BERT excels at tasks like sentiment analysis or question answering - it sees the whole context before making a decision.

- Decoder-only - Models like GPT are built from decoder blocks. They generate text one token at a time. Each new token can only attend to the tokens before it (including the prompt), not future ones. That’s why they use causal masking, a technique that hides future tokens to enforce autoregressive order. This is perfect for chatbots, code generation, and creative writing.

- Encoder-decoder - Models like T5 and BART use both. The encoder processes the input (like a question), and the decoder generates the answer. This is ideal for translation or summarization.
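The causal masking used by decoder-only models can be illustrated with a small sketch: scores for future positions are set to negative infinity before the softmax, so they receive zero weight. The uniform score matrix and the function names are assumptions chosen for readability.

```python
import math

NEG_INF = float("-inf")

def causal_mask(scores):
    """Replace scores[i][j] with -inf whenever j > i, i.e. position j lies in the future."""
    return [[s if j <= i else NEG_INF for j, s in enumerate(row)]
            for i, row in enumerate(scores)]

def softmax(row):
    m = max(row)  # finite, because the diagonal entry is never masked
    exps = [math.exp(x - m) for x in row]  # math.exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]

# Uniform raw scores for a 3-token sequence, so the effect of the mask is easy to see.
scores = [[0.0, 0.0, 0.0] for _ in range(3)]
weights = [softmax(row) for row in causal_mask(scores)]
```

After masking, the first token attends only to itself, the second splits its attention over the first two positions, and the third over all three.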

As an engineer, your choice depends on your task. Need to classify text? Use an encoder. Need to generate answers? Use a decoder. Need to translate? Use both.

Pre-training and Transfer Learning: The Real Game-Changer

You don’t train a transformer from scratch on your dataset. That would cost millions and take months. Instead, you use transfer learning.

Big companies like OpenAI and Google pre-train massive transformer models on hundreds of gigabytes of text - Wikipedia, books, code repositories, forums. This pre-training teaches the model the structure of language: grammar, facts, reasoning patterns. You don’t need labels, because the text supplies its own training signal: the model learns by predicting tokens that are hidden from it. It’s self-supervised learning at scale.

Then, you fine-tune it. Take a pre-trained GPT model. Freeze the transformer blocks. Add a small classification head on top - maybe a single dense layer with softmax. Train it on your labeled data: customer reviews, support tickets, medical notes. In days, you get a model that understands your domain. This is why startups can compete with tech giants - they don’t need to train from scratch. They just need good data and the right fine-tuning strategy.
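The mechanics of "freeze the backbone, train only the head" can be shown with a toy sketch. Everything here is hypothetical: `frozen_backbone` is a keyword counter standing in for a real pre-trained transformer, the four reviews are invented, and the head is a plain logistic regression trained with gradient descent. The point is only that the training loop updates the head's parameters and nothing else.

```python
import math

def frozen_backbone(text):
    """Hypothetical stand-in for a frozen pre-trained model.

    Returns a fixed feature vector; nothing in here is updated during training.
    """
    words = text.lower().split()
    return [
        sum(w in {"great", "love", "fast"} for w in words),
        sum(w in {"broken", "slow", "refund"} for w in words),
    ]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_head(examples, epochs=200, lr=0.5):
    """Fit a logistic-regression head on top of the frozen features."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in examples:
            feats = frozen_backbone(text)
            pred = sigmoid(sum(w * f for w, f in zip(weights, feats)) + bias)
            error = pred - label  # gradient of the log loss with respect to the logit
            weights = [w - lr * error * f for w, f in zip(weights, feats)]
            bias -= lr * error
    return weights, bias

def predict(text, weights, bias):
    feats = frozen_backbone(text)
    return sigmoid(sum(w * f for w, f in zip(weights, feats)) + bias)

# Invented labeled data: 1 = positive review, 0 = negative review.
reviews = [
    ("love it great product", 1),
    ("fast shipping", 1),
    ("arrived broken", 0),
    ("slow and i want a refund", 0),
]
weights, bias = train_head(reviews)
```

In a real project the backbone would be a pre-trained model loaded from a library, and the same idea applies: gradients flow only into the small head you added.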

For engineers, this means: focus on data quality, not just model size. On a narrow domain task, a 7B parameter model fine-tuned on clean, domain-specific data can match or beat a 70B model that has only seen generic text.

Practical Tips for Engineers

Here’s what works in real-world deployments:

- Use Hugging Face - It’s the go-to library for transformer models. You can load GPT-2, Llama, Mistral, or BERT with one line of code. No need to implement attention from scratch.

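- Tokenization matters - A pre-trained model only works with the tokenizer it was trained with, so choosing a model also chooses the tokenizer. Byte Pair Encoding (BPE) is a common scheme, but for code you might prefer a model whose tokenizer was trained on code, such as CodeBERT. Test on your data and check how many tokens your typical inputs produce.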

- Don’t overfit - Transformers are powerful, but they memorize. Use dropout, early stopping, and validation metrics. Monitor loss on a held-out set.

- Monitor attention maps - Tools like TransformerLens let you inspect which tokens the model attends to. If it’s ignoring key terms, your fine-tuning data might be biased.

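- Use quantization - For deployment, convert weights from float32 to int8. Each weight shrinks from four bytes to one, which cuts weight memory by about 75%. The accuracy cost is often small (a drop of 1-2% is a typical figure) but varies by model and task, so measure it on your own evaluation set. That’s critical for edge devices.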
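To show where the 75% figure in the quantization tip comes from, here is a minimal sketch of symmetric per-tensor int8 quantization. The weight values are made up, and real toolchains use more refined schemes (per-channel scales, calibration data), so treat this as an illustration of the idea only.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float values from the integers and the shared scale."""
    return [v * scale for v in q]

weights = [0.82, -1.27, 0.003, 0.45, -0.6]   # hypothetical float32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

bytes_fp32 = 4 * len(weights)   # float32 stores 4 bytes per weight
bytes_int8 = 1 * len(weights)   # int8 stores 1 byte per weight
saving = 1 - bytes_int8 / bytes_fp32   # ignores the single stored scale value
```

Note how the very small weight (0.003) rounds to zero: this loss of fine detail is the source of the accuracy drop.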

What Comes Next?

Transformers aren’t perfect. They’re slow to train, hungry for data, and can hallucinate. But they’re the foundation. Researchers are already building on them: sparse attention for efficiency, mixture-of-experts to reduce compute, and multimodal transformers that handle images, audio, and text together.

For engineers today, the message is clear: learn how transformers work. Not just to use them - but to debug them, optimize them, and adapt them. The next breakthrough won’t come from a new architecture. It’ll come from someone who understands how to make transformers do something they weren’t designed for.

What’s the difference between self-attention and regular attention?

Regular attention, like in older seq2seq models, compares a query vector from the decoder to key vectors from the encoder - it’s cross-attention between two different sequences. Self-attention happens within a single sequence. Every token in the input compares itself to every other token in the same input. That’s why transformers can understand context without needing separate encoder and decoder layers - though they can still use both.

Why do transformers need positional encoding?

Transformers process all tokens at once, so they lose the natural order that RNNs get by processing one token after another. Positional encoding adds a unique vector for each position in the sequence - like a timestamp for words. These vectors are learned or calculated using sine and cosine functions, allowing the model to learn relationships based on distance and order, even without sequential processing.
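The sine-and-cosine variant can be sketched as follows, using the formulation from the original transformer design (sine on even dimensions, cosine on odd ones, with wavelengths growing geometrically). The toy embedding for "dog" and the helper name `add_position` are illustrative assumptions.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal position table of shape (seq_len, d_model)."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the same frequency.
            angle = pos / (10000 ** ((i - i % 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def add_position(embedding, pos, table):
    """Add the position vector to a token embedding, element by element."""
    return [e + p for e, p in zip(embedding, table[pos])]

pe = positional_encoding(seq_len=3, d_model=4)
dog = [1.0, 0.0, 0.0, 0.0]          # hypothetical embedding for "dog"
dog_first = add_position(dog, 0, pe)
dog_last = add_position(dog, 2, pe)
```

The same token ends up with a different input vector depending on where it sits, which is what lets the model tell "dog bites man" from "man bites dog".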

Can transformers work with non-text data?

Yes. Transformers have been adapted for images (ViT), audio (Whisper), and even protein sequences. The key insight is that any data that can be cut into a sequence of pieces - patches of an image, frames of an audio spectrogram, or amino acids in a protein - can be treated as tokens. You just need to convert them into embeddings and add positional information. That’s why vision transformers now match or outperform CNNs in many image tasks, especially when large training sets are available.
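As an illustration of turning an image into tokens, this sketch cuts a small grid into non-overlapping square patches and flattens each one, which is the general idea behind ViT-style inputs. The 4x4 "image" and the `patchify` helper are made up; a real model would then pass each flattened patch through a learned linear projection.

```python
def patchify(image, patch):
    """Split a 2-D grid into non-overlapping patch x patch tiles, each flattened to a list."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image dimensions must be divisible by the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens

# A 4x4 grid of pixel values 0..15, split into four 2x2 patches.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
tokens = patchify(image, 2)
```

Each entry of `tokens` plays the role that a word or subword plays in text.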

How much data do I need to fine-tune a transformer?

As a rough rule of thumb, 500-5,000 labeled examples are enough for many classification-style tasks if you’re using a pre-trained model. With fewer than 100 examples, you’ll likely overfit. With more than 10,000, you can experiment with full fine-tuning of all layers. The quality of data matters more than quantity - noisy labels hurt more than missing examples.

Are transformers the only option for generative AI?

No - GANs, VAEs, and diffusion models are all in use, especially for image generation. But for text, code, and multimodal generation, transformers dominate. They’re more scalable, easier to train with large datasets, and produce more coherent outputs. If you’re building a language model today, transformers are the default practical choice.
