Why Output Tokens Cost More: The Computation Behind LLM Generation

Apr, 10 2026


If you've ever looked at the pricing page for an AI API, you probably noticed something annoying: generating text is significantly more expensive than reading it. As of 2026, output tokens typically cost 2 to 8 times more than input tokens. For some premium models, you're paying an 8× multiplier just for the words the AI writes back to you. It feels like an arbitrary pricing tactic, but it's rooted in how Large Language Models (LLMs), AI systems trained on massive datasets to predict the next token in a sequence, actually work. The gap isn't just about profit margins; it's about the difference between a sprint and a marathon.

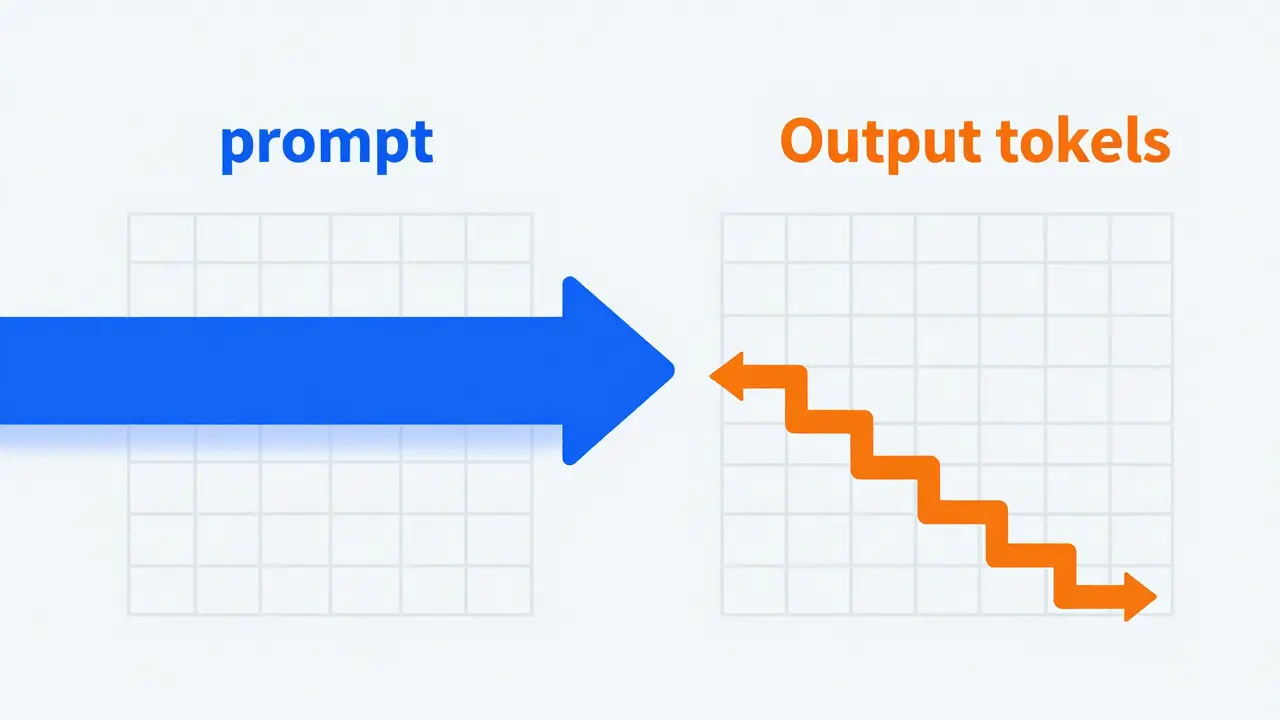

The Parallel vs. Sequential Divide

To understand the price gap, we have to look at how the model handles your prompt versus how it handles the response. When you send a prompt, the model uses parallel processing. It takes all your input tokens and pushes them through the neural network in one single forward pass. Think of this like a professional reader scanning a page; they can process huge chunks of information almost simultaneously.

Output generation is a completely different beast. LLMs use Autoregressive Generation, a process where the model predicts one token at a time, then feeds that token back into the input to predict the next one. There is no shortcut here. To produce a 100-token response, the model has to run a forward pass roughly 100 separate times, and because tokens are often word fragments, a 100-word response needs even more. Each token requires a full trip through the billions of parameters in the network. You aren't paying for the word; you're paying for the repeated execution of the entire model.
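The loop described above can be sketched in a few lines. This is a toy illustration, not a real model: `toy_model` and `generate` are hypothetical names, and the "forward pass" is just arithmetic standing in for a trip through the network. What it shows is that the number of passes tracks the output length, not the prompt length.

```python
from typing import Callable, List, Tuple


def toy_model(tokens: List[int]) -> int:
    """Stand-in for one forward pass: maps the sequence so far to a next-token id."""
    return (sum(tokens) * 31 + len(tokens)) % 50_000


def generate(
    prompt: List[int],
    max_new_tokens: int,
    model: Callable[[List[int]], int],
) -> Tuple[List[int], int]:
    """Greedy autoregressive loop that also counts model invocations."""
    tokens = list(prompt)
    passes = 0
    for _ in range(max_new_tokens):
        next_token = model(tokens)  # one full pass over the sequence so far
        passes += 1
        tokens.append(next_token)   # feed the new token back in as input
    return tokens[len(prompt):], passes


if __name__ == "__main__":
    for prompt_len in (10, 1000):
        _, passes = generate(list(range(prompt_len)), 100, toy_model)
        print(f"prompt of {prompt_len} tokens -> {passes} passes for 100 output tokens")
```

The first call consumes the whole prompt at once, which mirrors the parallel input step; every later call exists only to produce one more token.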

The Memory Tax and GPU Overhead

It gets more expensive as the conversation goes on, because of memory overhead. During output generation, the model has to keep state for everything that has happened so far in the conversation. That state lives in GPU memory, the same high-speed hardware that holds the model's weights and active calculations. As the output grows, the context grows with it, meaning every new token must be processed against every previous token.
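To get a feel for the scale, here is a rough estimate of how that per-conversation state grows. It assumes the state is stored as a key-value (KV) cache, which is a common design but is not spelled out in the article, and the model dimensions below are hypothetical, chosen only to make the arithmetic easy to follow.

```python
def kv_cache_bytes(
    seq_len: int,
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    bytes_per_value: int = 2,  # assumes 16-bit values
) -> int:
    """Approximate memory for cached keys and values of one sequence."""
    # 2 tensors (keys and values) per layer, one head_dim vector per KV head per token
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


if __name__ == "__main__":
    # Hypothetical configuration, not taken from any specific model
    config = dict(n_layers=32, n_kv_heads=8, head_dim=128)
    for seq_len in (1, 1_000, 8_192):
        size = kv_cache_bytes(seq_len, **config)
        print(f"{seq_len:>5} tokens -> {size / 2**20:.1f} MiB")
```

The growth is linear in sequence length, so a conversation that is eight times longer holds eight times as much state on the GPU for as long as it is being generated.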

Beyond the raw math, producing text can involve decoding steps that input processing simply doesn't need:

- Beam Search: Where it is enabled, the model explores multiple candidate sequences at once to find the most coherent one, which multiplies the work per step.

- Temperature Sampling: Each step draws the next token from a rescaled probability distribution, adding controlled randomness to make the AI sound more human and less robotic.

- Alignment Layers: Final checks to ensure the response follows safety guidelines and formatting rules.

All of these add computational work on top of the token-by-token loop. While input is a straightforward "read and understand" operation, output is a repeated "predict, verify, and refine" cycle that hogs expensive hardware time.
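Of the techniques listed, temperature sampling is the easiest to show concretely. The sketch below uses only the standard library; the function names and the example logits are illustrative assumptions, not any provider's implementation. It divides the model's raw scores by a temperature before turning them into probabilities, then draws one token.

```python
import math
import random
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits: List[float], temperature: float, rng: random.Random) -> int:
    """Draw one token index according to the temperature-adjusted probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == "__main__":
    logits = [2.0, 1.0, 0.0]  # made-up scores for a three-token vocabulary
    rng = random.Random(0)
    for t in (0.1, 1.0, 2.0):
        probs = softmax_with_temperature(logits, t)
        print(t, [round(p, 3) for p in probs], sample_token(logits, t, rng))
```

This step runs once per generated token, so it is another cost that scales with output length rather than input length.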

2026 Pricing Realities: The Numbers

The market pricing reflects these hardware demands. If you look at flagship models today, the gap is stark. For example, OpenAI's GPT-5.2 Pro charges $21 per million input tokens but leaps to $168 per million output tokens, a massive 8× difference. Other industry leaders like Anthropic maintain a similar gap with their Claude 4 series, often landing on a 5× multiplier.

| Model Tier | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) | Price Ratio |
|---|---|---|---|
| Ultra-Premium (e.g., GPT-5.2 Pro) | $21.00 | $168.00 | 8:1 |
| High-End (e.g., Claude Opus 4) | $15.00 | $75.00 | 5:1 |
| Standard Flagship | $2.50 | $10.00 | 4:1 |
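To see what those ratios do to a single request, here is a small calculator built on the table's prices. The tier keys and the function name are made up for this sketch, and the token counts in the example are arbitrary.

```python
# (input $, output $) per 1M tokens, copied from the table above
PRICES_PER_MILLION = {
    "ultra_premium": (21.00, 168.00),
    "high_end": (15.00, 75.00),
    "standard_flagship": (2.50, 10.00),
}


def request_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at the given tier's per-token rates."""
    input_price, output_price = PRICES_PER_MILLION[tier]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Example request: 2,000 tokens in, 500 tokens out
    for tier in PRICES_PER_MILLION:
        print(f"{tier}: ${request_cost(tier, 2_000, 500):.4f}")
```

On the standard tier, the 500 output tokens in this example cost as much as the 2,000 input tokens, even though there are only a quarter as many of them.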

The Hidden Cost of "Thinking"

If you're using the latest reasoning models, the bill gets even steeper. These models generate Reasoning Tokens, which are internal computational steps where the model "thinks" through a problem before delivering the final answer. These tokens are effectively invisible to the user but are computationally expensive to produce.

Reasoning tokens sit at the top of the cost hierarchy. The model may generate hundreds or thousands of them to verify logic and correct errors before the first visible word appears, so a complex task using a reasoning model can cost 5 to 10 times more than the same task on a standard model. You're essentially paying for the AI to double-check its own work before it speaks.

How to Stop Wasting Your Budget

Since output tokens are the primary cost driver, the biggest wins in cost optimization come from reducing verbosity. Many developers make the mistake of leaving output tokens uncapped, allowing the model to ramble. A customer support bot handling a million monthly chats can easily waste thousands of dollars if the responses are unnecessarily wordy.
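A back-of-the-envelope version of the support bot scenario looks like this. Only the million monthly chats comes from the text; the reply lengths (400 versus 150 tokens), the one-reply-per-chat simplification, and the $10 per million output rate are assumptions picked for illustration.

```python
def monthly_output_cost(chats: int, tokens_per_reply: int, price_per_million: float) -> float:
    """Monthly spend on output tokens, assuming one reply per chat."""
    return chats * tokens_per_reply * price_per_million / 1_000_000


if __name__ == "__main__":
    chats = 1_000_000      # from the scenario in the text
    output_price = 10.00   # assumed $ per 1M output tokens

    wordy = monthly_output_cost(chats, 400, output_price)    # hypothetical verbose bot
    concise = monthly_output_cost(chats, 150, output_price)  # hypothetical trimmed bot
    print(f"wordy: ${wordy:,.0f}  concise: ${concise:,.0f}  saved: ${wordy - concise:,.0f}")
```

Under these assumptions, trimming 250 tokens from each reply is worth $2,500 a month, and the figure scales linearly with both traffic and the output price.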

Here are a few concrete ways to bring those costs down:

- Set Strict max_tokens Limits: Force the model to be concise by capping the response length.

- Optimize Few-Shot Examples: If you provide examples in your prompt, keep the expected outputs short. Verbose examples teach the model to be verbose.

- Prompt for Conciseness: Explicitly tell the model to "be brief" or "use bullet points." This reduces the number of autoregressive cycles required.

- Evaluate Model Tiers: Sometimes a more expensive model is actually cheaper overall because it solves the problem in 50 tokens, whereas a cheaper model fails and requires three 200-token retries.
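The first and third tips can be combined in one request. The sketch below only builds the request body and makes no network call. The helper name, the placeholder model name, and the system prompt wording are hypothetical, and the field layout follows the common chat-style shape; the exact name of the length cap varies by provider and model, so check your API's documentation before relying on `max_tokens`.

```python
from typing import Any, Dict


def build_chat_request(
    user_message: str,
    max_tokens: int = 150,
    model: str = "example-model",  # placeholder, not a real model name
) -> Dict[str, Any]:
    """Assemble a chat-style request body with a hard cap and a brevity instruction."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    return {
        "model": model,
        "max_tokens": max_tokens,  # hard ceiling on generated tokens
        "messages": [
            # The system prompt asks for brevity; the cap enforces it
            {"role": "system", "content": "Answer in at most three short bullet points."},
            {"role": "user", "content": user_message},
        ],
    }


if __name__ == "__main__":
    print(build_chat_request("How do I reset my password?"))
```

The cap alone can cut a reply off mid-sentence, so pairing it with an explicit brevity instruction usually gives cleaner results than either one alone.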

The economics of 2026 are clear: the length of the completion is what balloons your invoice. By treating every generated token as a premium resource, you can build applications that are both capable and financially sustainable.

Why can't AI generate all output tokens at once?

Because each token depends on the tokens that came before it. The model cannot know what the fifth token is until it has decided on the first, second, third, and fourth. This sequential dependency is what makes autoregressive generation necessary and computationally expensive.

Are reasoning tokens charged differently than standard output tokens?

It depends on the provider. Reasoning tokens are produced the same way as output tokens (one forward pass each), and several providers bill them at the output rate. They can still be the most expensive line on the invoice, because a single visible answer may be preceded by a long hidden chain of reasoning that you pay for token by token.

Does a larger context window increase the cost of output tokens?

Indirectly, yes. As the conversation grows longer, the model must process a larger amount of data for every new token it generates. While providers usually charge a flat per-token rate to keep things simple, the actual computational load on the GPU increases as the context window fills up.

Is it always cheaper to use a smaller model?

Not necessarily. Small models are cheaper per token, but they are more prone to hallucinations or failure. If a small model fails and requires a user to re-prompt three times, you might spend more in total tokens than if a high-end model got the answer right the first time.
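The trade-off can be checked with simple arithmetic. This sketch compares output spend only and ignores the repeated input cost of each retry. The attempt counts and token lengths (one 50-token answer versus three 200-token attempts) echo the example earlier in the article; pairing them with the $75 and $10 per million output rates is an assumption made for illustration.

```python
def total_output_cost(attempts: int, tokens_per_attempt: int, price_per_million: float) -> float:
    """Output-token spend across all attempts needed to get a usable answer."""
    return attempts * tokens_per_attempt * price_per_million / 1_000_000


if __name__ == "__main__":
    # Assumed scenario: the pricier model succeeds first try with a short answer,
    # the cheaper model needs three longer attempts.
    premium = total_output_cost(attempts=1, tokens_per_attempt=50, price_per_million=75.00)
    budget = total_output_cost(attempts=3, tokens_per_attempt=200, price_per_million=10.00)
    print(f"premium: ${premium:.5f}  budget: ${budget:.5f}")
```

With these numbers the premium model comes out cheaper, but the result flips quickly if the cheaper model succeeds on the first try, so it is worth measuring real retry rates before switching tiers.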

What is the most effective way to reduce API bills?

Focus on the output. Since output tokens cost 2 to 8 times more than input tokens, limiting verbosity through system prompts and strict max_tokens constraints is the fastest way to cut costs without giving up much quality.

Patrick Sieber

April 11, 2026 AT 23:38

This breakdown makes a lot of sense. It is basically the difference between reading a script and actually performing the play in real-time.

Shivam Mogha

April 13, 2026 AT 22:02

Very clear explanation.

Kieran Danagher

April 14, 2026 AT 11:02

Oh wow, imagine actually thinking that limiting max_tokens is a 'strategy' rather than just a desperate attempt to stop the model from hallucinating endless loops of nonsense. Pure genius.

sampa Karjee

April 14, 2026 AT 20:51

The sheer audacity of thinking a basic table suffices for such a complex economic shift is staggering. Most of you simply lack the intellectual rigor to understand that these multipliers are designed to gatekeep high-tier compute from the masses. It is a systemic filtration of quality. Only those with a genuine grasp of architectural efficiency can optimize these costs, while the rest of you just fumble with 'be brief' prompts like children playing with blocks. It is frankly embarrassing that we even have to explain the concept of autoregressive generation in 2026. The academic community has been shouting about the KV cache and memory bottlenecks for years. If you are just now realizing that output is more expensive, you are already obsolete in this industry. Get a grip on the fundamentals or stop pretending you are building 'AI applications' when you are just wrapping an API and hoping for the best. The disparity is a feature of the physics, not a bug in the pricing, and anyone unable to internalize this is wasting everyone's time.

mani kandan

April 16, 2026 AT 08:18

The way these models dance through the latent space to produce a single token is truly a kaleidoscopic marvel of modern engineering. It is a fascinating trade-off between brilliance and budget.

Sheila Alston

April 16, 2026 AT 18:40

It is honestly quite disheartening to see how we prioritize raw profit over the accessibility of knowledge. We should really be questioning why these companies are allowed to implement such predatory pricing structures on tools that are essentially becoming a basic human right for education and productivity. It feels wrong that a student might be penalized for a more verbose, thoughtful response simply because the 'physics' of a GPU makes it more expensive for a billionaire corporation.

poonam upadhyay

April 18, 2026 AT 06:30

Lol look at these corporate shills pretending this is just about 'physics'!!! It is obviously a giant money-grab by the tech overlords to keep the small players crushed under a mountain of API bills!!!! Total scam vibe going on here!!!!

Natasha Madison

April 18, 2026 AT 12:50

The pricing is just a cover for something bigger. They are tracking our token usage to map our cognitive patterns and feed them into a global surveillance grid. They don't need your money, they need your data architecture.

OONAGH Ffrench

April 19, 2026 AT 03:21

the sequential nature of tokens is a reflection of linear time itself and the compute cost is just the tax we pay for simulating thought processes in a digital void

Rahul Borole

April 20, 2026 AT 21:45

I strongly encourage all developers to implement the cost-optimization strategies mentioned. By refining your few-shot examples and utilizing the most appropriate model tier for each specific task, you can achieve a sustainable equilibrium between performance and expenditure. It is a professional necessity to master these efficiencies.