Best Tools and Platforms for Vibe Coding Workflows in 2025

Discover the top vibe coding tools in 2025 - Lovable, Cursor, v0 by Vercel, Tempo Labs, and Windsurf - and learn how to use AI to build apps faster without writing code from scratch.

Large language models power today's AI chatbots and tools. This article explains how they work - from tokenization and attention mechanisms to scaling and real-world limits - without hype or jargon.

Distributed transformer inference enables large language models to run across multiple GPUs using tensor and pipeline parallelism. Learn how these techniques work, their trade-offs, and why they're essential for modern LLM deployment.

Clean Architecture in vibe-coded projects keeps AI-generated code from mixing frameworks with business logic. Learn how to structure your app so frameworks stay at the edges - and your code stays maintainable.

LLM red teaming is offensive security testing that finds hidden risks in AI models before attackers do. Learn how prompt injection, jailbreaks, and data leaks are caught, and how to start testing your own models today.

Vibe coding adoption is surging, with 84% of developers using AI tools, but only 9% trust them for production code. Learn the real stats, top platforms, security risks, and how to use AI coding safely in 2025.

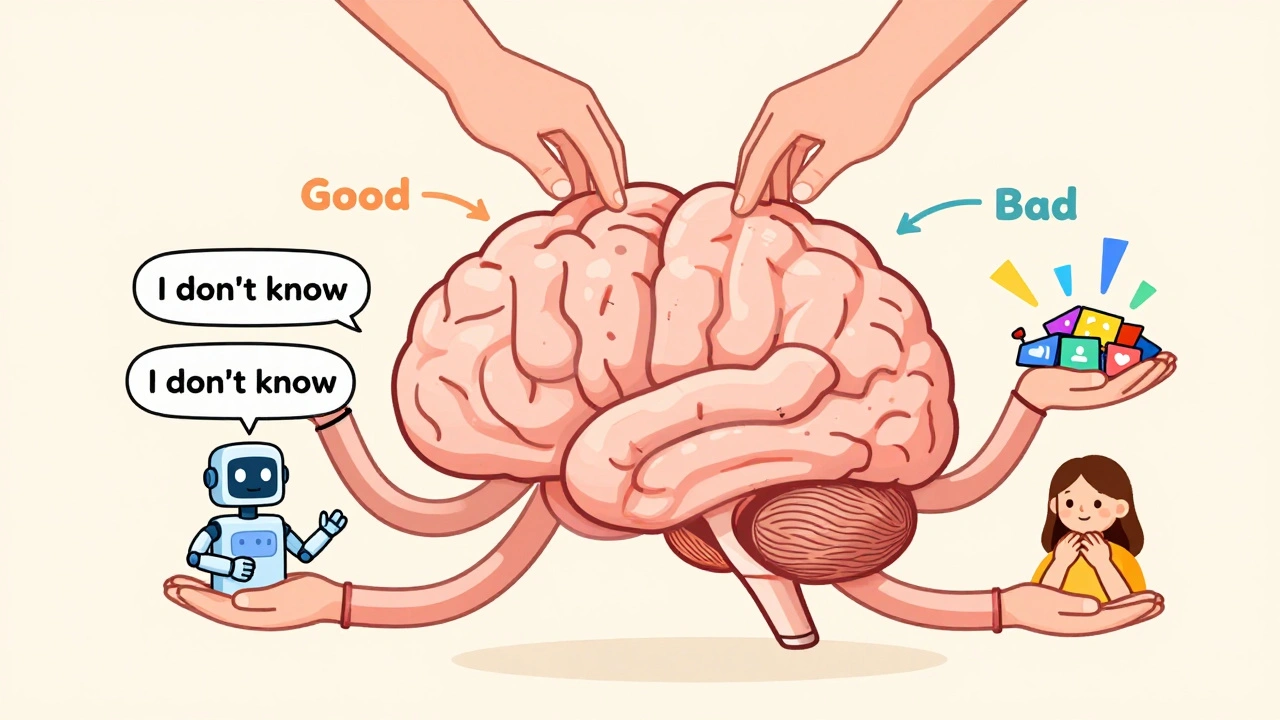

Preference tuning with human feedback is how modern AI learns to behave ethically and helpfully. Learn how RLHF works, its real costs, where it succeeds, where it fails, and what's coming next.

Vibe coding lets anyone build analytics with prompts, but hidden changes and data drift create serious risks. Learn the essential metrics dashboards must track in 2025 to avoid costly errors and ensure reliable AI-driven insights.

Chain-of-thought prompting improves large language models' reasoning by making them show their step-by-step logic. Learn how it works, when to use it, and why it's transforming AI applications in 2025.

Learn how to write precise LLM instructions that prevent hallucinations and security risks in factual tasks. Discover the five pillars of prompt hygiene used by top healthcare and legal teams to ensure accuracy and safety.

Sparse attention and Performer variants let LLMs process long sequences efficiently by reducing memory use from terabytes to gigabytes. Learn how they work, where they shine, and which models to use.

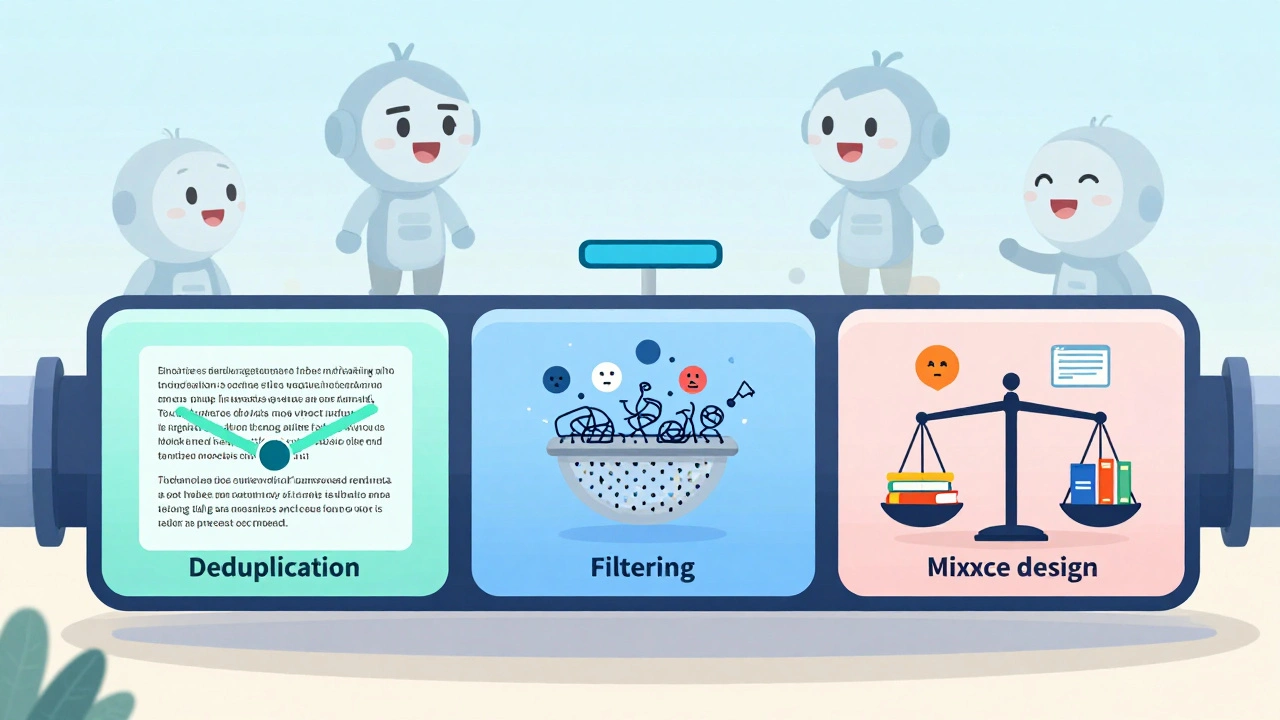

Training data pipelines for generative AI use deduplication, filtering, and mixture design to turn raw data into high-quality training sets. Poor pipelines lead to flawed models; here’s how to build them right.