Understanding Attention Head Specialization in Large Language Models

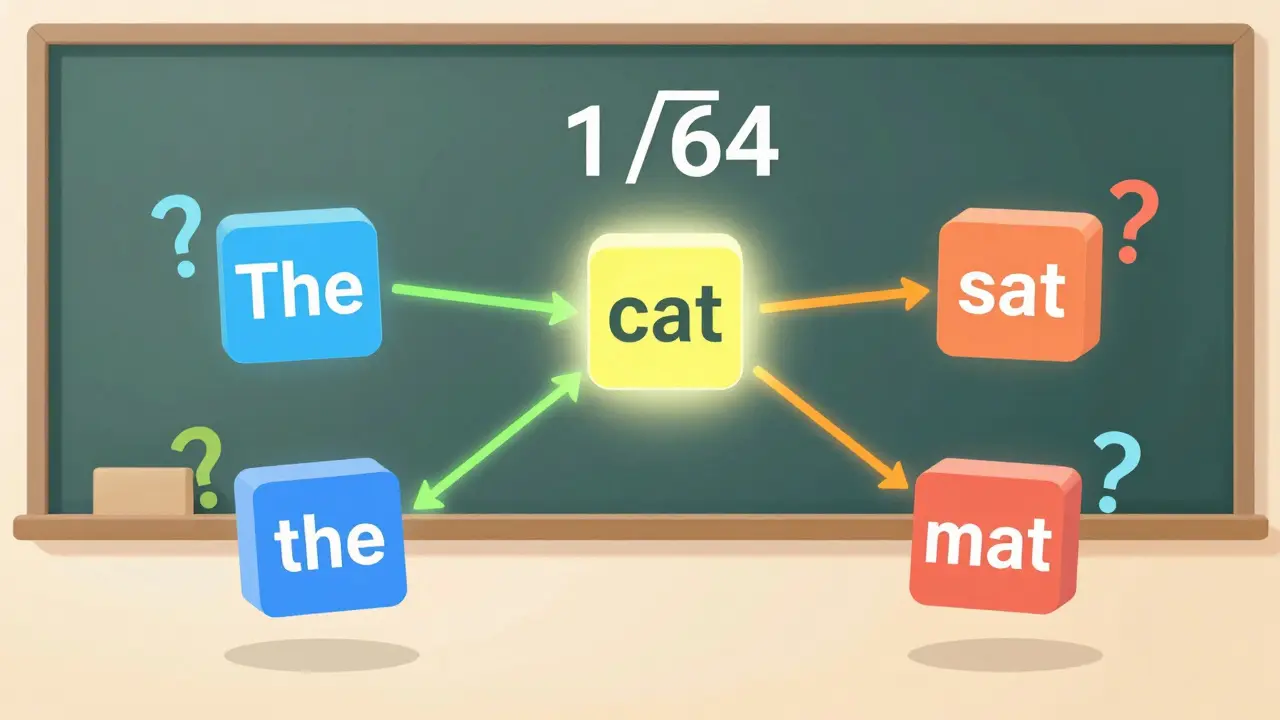

Attention head specialization lets large language models process multiple linguistic patterns simultaneously, such as grammar, coreference, and reasoning, which boosts performance on complex tasks. Learn how these specialized heads work, what they do, and why they're critical to modern AI.