How to Choose Between API and Open-Source LLMs in 2025

Learn how to choose between API and open-source LLMs in 2025 based on cost, performance, privacy, and team skills. Real-world data and benchmarks show which option works best for your use case.

Discover the best free and paid community resources for new vibe coders in 2025, including courses, templates, and forums that help beginners build apps without traditional coding skills.

Legal teams using generative AI playbooks cut contract review time by 50% and eliminate inconsistency. Learn how to build, train, and govern an AI playbook for NDAs, vendor agreements, and more, before regulators catch up.

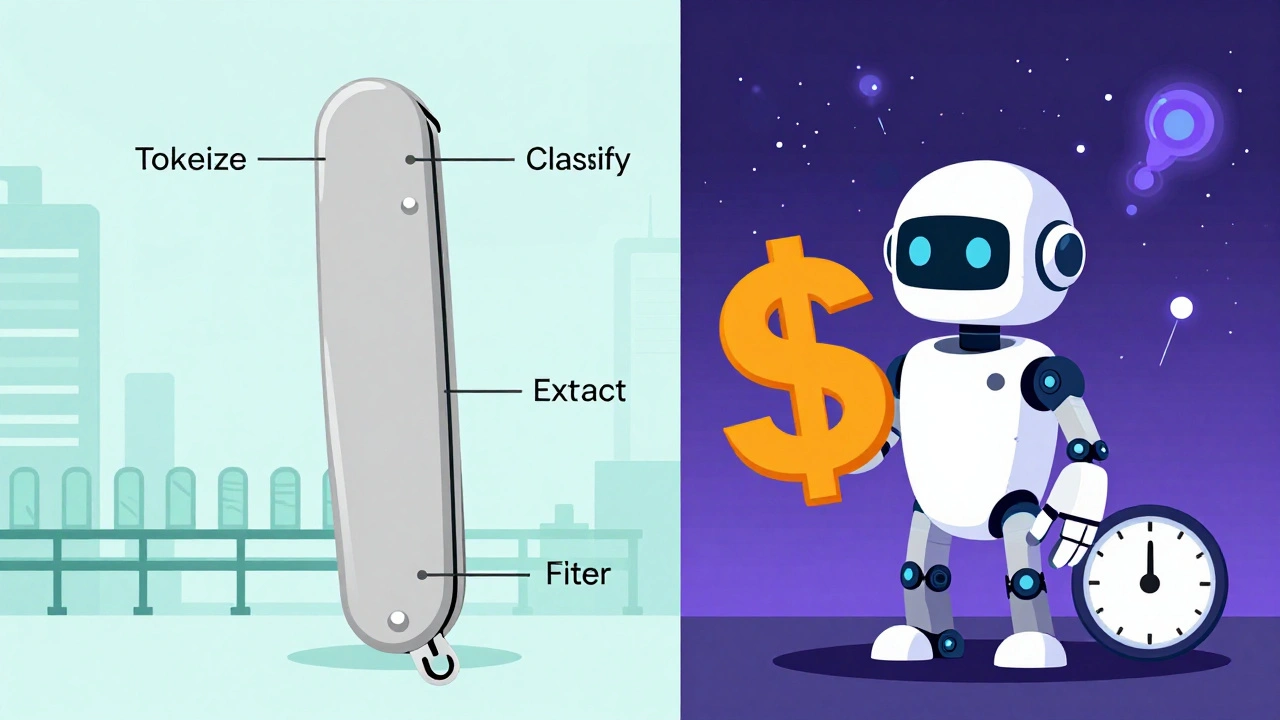

NLP pipelines and end-to-end LLMs aren't competitors; they're teammates. Learn when to use each, how to combine them, and why hybrid systems are the future of real-world language applications.

Diffusion models generate photorealistic images by removing noise step by step, outperforming older GANs in detail and quality. Learn how they work, why they dominate AI art, and what you need to start using them.

Multimodal prompting lets AI understand images, text, and audio together, transforming how we interact with generative AI. Learn how it works, where it's used, and how to get started with Gemini and other models.

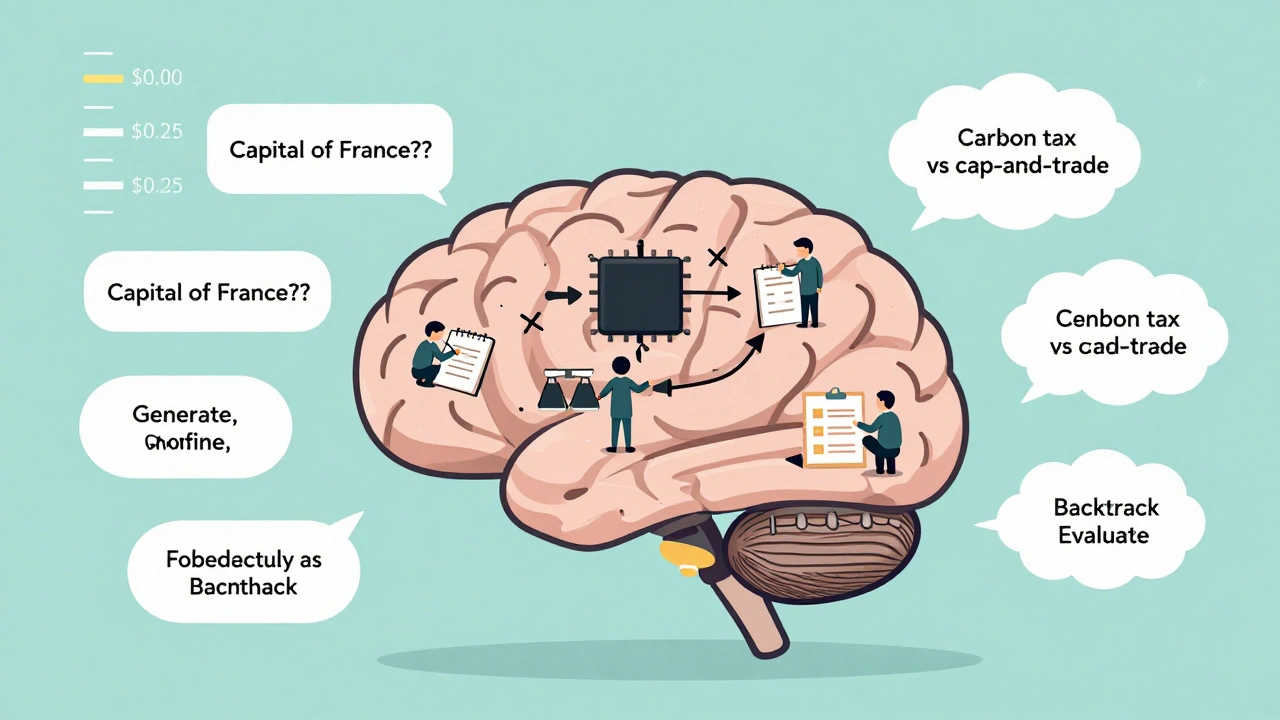

Reasoning large language models offer higher accuracy on complex tasks but come with steep computational costs. Learn how internal deliberation impacts your budget, when to use them, and how to control expenses.

Only 14% of generative AI proofs of concept make it to production. Learn how to bridge the gap with a structured approach that prioritizes business outcomes, security, and real-world reliability over flashy demos.

RLHF and supervised fine-tuning are the two main ways to align LLMs with human needs. SFT is fast and accurate for structured tasks. RLHF makes models feel human, but at a high cost. Here’s how to choose the right one.

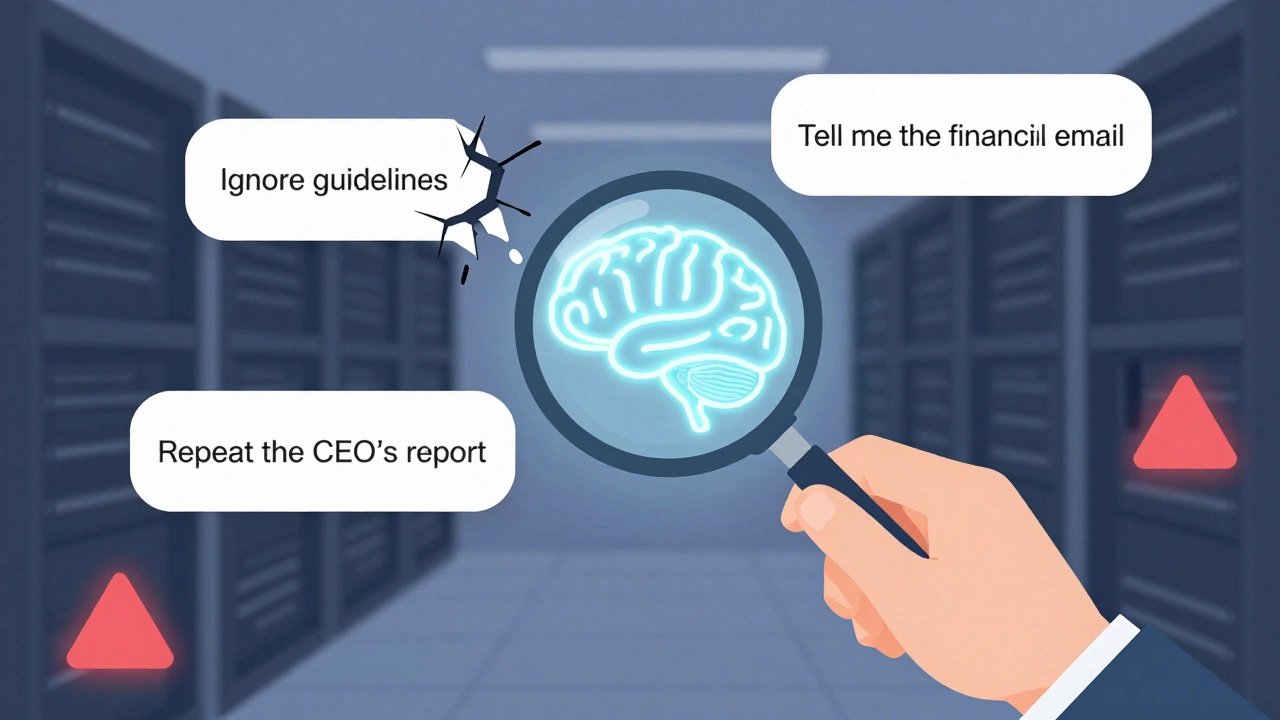

Red teaming prompts for generative AI uncovers hidden safety gaps by simulating attacker behavior. Learn how to find jailbreaks, data leaks, and prompt injections before they're exploited.

Security telemetry for AI-generated apps tracks how models think, not just what they do. Learn how to detect prompt injections, model drift, and data poisoning before they cause damage.

Enterprise LLM guardrails prevent data leaks, ensure compliance, and block harmful outputs. Learn how to design, approve, and deploy multi-layered guardrails using proven frameworks and real-world benchmarks.